Clickers: Enhancing the residency selection process

Published online: 2 January, TAPS 2019, 4(1), 51-54

DOI: https://doi.org/10.29060/TAPS.2019-4-1/SC1052

Jill Cheng Sim Lee1, Muhammad Fairuz Abdul Rahman1, Weng Yan Ho1, Mor Jack Ng2, Kok Hian Tan1,2 & Bernard Su Min Chern1,2

1Division of Obstetrics and Gynaecology, KK Women’s and Children’s Hospital, Singapore; 2SingHealth Duke-NUS Obstetrics and Gynaecology (OBGYN) Academic Clinical Program, SingHealth Duke-NUS Academic Medical Centre, Singapore

Abstract

Background: Residency selection panels commonly use time-consuming manual voting processes to select successful candidates, and these processes are easily subject to bias and the influence of others. We explored the use of an electronic audience response system (ARS), or ‘clickers’, in obstetrics and gynaecology resident selection, studying the voting process and examiner feedback on confidentiality and efficiency.

Methods: All 10 interviewers were provided with clickers to vote for each of the 25 candidates at the end of the residency selection interview. Votes were cast using a 5-point Likert scale. The number of clickers provided to each interviewer was weighted according to the rank of the interviewer. Voting scores and time for each candidate were recorded by the ARS, and interviewers completed a questionnaire evaluating their experience of using clickers for resident selection.

Results: The 10 successful candidates scored a mean of 4.28 (SD 0.27, range 3.86–4.73), compared to 2.99 (SD 0.71, range 1.50–3.79) for the 15 unsuccessful candidates (p<0.001). Average voting time was 26 seconds per candidate. Total voting time for all candidates was 650 seconds. All interviewers favoured the use of clickers for their confidentiality, instantaneous results, and more discerning graduated response.

Conclusion: Clickers provide a rapid and anonymous method of collating interviewer decisions following a rigorous selection process. They were well received by interviewers and are highly recommended for use by other residencies in their selection process.

Keywords: Resident Selection, Clickers, Electronic Audience Response System

PRACTICE HIGHLIGHTS

- Clickers provide a time-efficient way of collating resident selection interview outcomes following a rigorous structured selection process.

- Individual interviewers should be able to rate candidates anonymously to reduce the risk of bias from external influences, because the use of multiple observers rather than single interviewers improves the reliability of the resident selection interview.

- Numerical ratings using clickers provide objective and transparent data easily available should an inquiry arise about the resident selection process.

- Clickers are increasingly becoming standard educational tools within the medical classroom. Faculty and resident familiarity with audience response systems allows development of creative extensions of clickers beyond the classroom context.

I. INTRODUCTION

There is increasing evidence that radiofrequency electronic Audience Response Systems (ARS), or “clickers”, are useful in undergraduate and postgraduate medical education (Caldwell, 2007). An ARS instantly collects real-time data through hand-held keypads and graphs participant responses. Each clicker unit has a unique signal, allowing answers from each assessor to be identified and recorded.

Clickers bridge the communication gap between speaker and audience, and are used to assess understanding, engage attention, enhance learner enjoyment and interaction, improve knowledge retention and encourage clinical reasoning and problem solving. The positive uptake, feedback and experience of clickers by the medical education community have led to their introduction in innovative new areas, providing solutions to many problems within medical education. Outside medicine, clickers have been used for research data collection, enhancing social norms marketing campaigns and surveying vulnerable, low-literacy groups.

The SingHealth Obstetrics and Gynaecology (OBGYN) Residency Program recently explored clickers as a way to improve efficiency and efficacy in the residency selection process. Traditional selection interviews typically concluded with a voting process where members of the selection panel raised hands to decide on the best candidates. This was time-consuming and individuals’ voting decisions could be swayed by openly visible votes of other panel members. Furthermore, hand-raising only allowed binary responses. This paper describes the OBGYN resident selection process using clickers and studies interviewer feedback on its confidentiality and efficiency. To our knowledge, no literature exists on the use of ARS during recruitment interviews.

II. METHODS

The SingHealth OBGYN residency selection process was conducted over two interview sessions assessing 25 candidates for selection for the academic year of 2015. Prior to this, candidates were shortlisted from an annual national specialty training application process open to final year medical students, house officers and medical officers. Following review of applications, portfolios, medical school grades and letters of support, candidates participated in a national level multiple mini interview prior to undergoing selection at individual sponsoring institutions.

The SingHealth selection panel comprised ten interviewers: the Program Director (PD), two Associate Program Directors (APDs), the Academic Chair, four core faculty and two chief residents. The selection format comprised a round-robin three-station interview: a large panel interview with the PD, Academic Chair, an APD, a core faculty member and a chief resident; a small panel interview with an APD and one core faculty member; and a less formal ‘bull pen’ interview with two core faculty and a chief resident. Candidates were interviewed alone in the large panel, where they were asked about self-appraisal and reactions to residency and the healthcare industry. In the small panel, they were interviewed about goals, ambitions and work experience. Candidates awaiting panel interviews were interviewed as a group in the ‘bull pen’ about personal information and life questions. Interviewers convened at the end to discuss candidate performances and review the multisource feedback obtained from within SingHealth OBGYN. Interviewers then scored each candidate using clickers.

All interviewers were given clickers to vote for each candidate. The ARS in this study was the Classroom Performance Systems Pulse, utilising the INTERWRITERESPONSE® 6.0 software. The number of clickers given to each interviewer was weighted, with the PD receiving three and APDs and Academic Chair receiving two each. Other interviewers each received one. This weightage policy was decided by the program in recognition of leadership and experience. Program administrators allocated numbered clickers to each interviewer. Interviewers were blinded regarding which clickers were allocated to other interviewers.
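The weighting described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the interviewer labels are hypothetical, and the sketch assumes that an interviewer enters the same rating on every clicker they hold, which the text does not specify.

```python
from statistics import mean

# Clickers held by each interviewer, following the allocation in the text:
# PD 3; each APD and the Academic Chair 2; every other interviewer 1.
# The interviewer labels themselves are hypothetical.
CLICKERS = {
    "PD": 3,
    "APD-1": 2,
    "APD-2": 2,
    "Academic Chair": 2,
    "Core faculty-1": 1,
    "Core faculty-2": 1,
    "Core faculty-3": 1,
    "Core faculty-4": 1,
    "Chief resident-1": 1,
    "Chief resident-2": 1,
}


def weighted_mean_score(ratings):
    """Mean Likert score for one candidate, counting each rating once per clicker.

    Assumption (not stated in the paper): an interviewer enters the same
    1-5 rating on every clicker they hold.
    """
    votes = []
    for interviewer, rating in ratings.items():
        votes.extend([rating] * CLICKERS[interviewer])
    return mean(votes)


# Hypothetical candidate: the PD rates 5, everyone else rates 4.
example_ratings = {name: 4 for name in CLICKERS}
example_ratings["PD"] = 5
example_score = weighted_mean_score(example_ratings)  # (3*5 + 12*4) / 15
```

With this allocation the ten interviewers hold 15 clickers in total, so the PD's rating carries three fifteenths of the weight in each candidate's mean.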

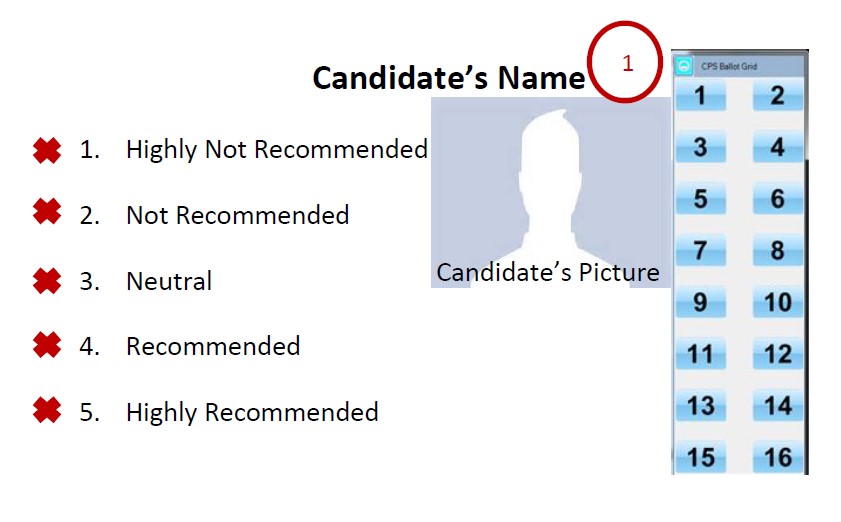

Candidates’ names and photographs were shown on screen (Figure 1) and interviewers were asked to vote using a 5-point Likert scale, with 1 being “Highly Not Recommended”, 2 “Not Recommended”, 3 “Neutral”, 4 “Recommended” and 5 “Highly Recommended”. The ARS instantly processed and displayed results to interviewers, enabling immediate visualisation of scores and ranking of candidates. The time taken for interviewers to vote for each candidate was recorded by the ARS. No limit was imposed on the time interviewers took to decide on each candidate. The total voting time for each candidate was measured from the moment ARS voting was activated for the candidate until all clicker responses were recorded. Interviewers were not allowed to abstain from voting. The 10 candidates with the best mean scores were admitted into the SingHealth OBGYN Residency Program.

All interviewers were asked the following questions via email a week later:

- Did you like the anonymity of the response system?

- Did you like the graduated response allowed by the clickers instead of binary “Yes” or “No” responses?

- Did you like the instantaneous results generated by the clicker software?

Microsoft Excel 2010 was used to analyse differences in scores between successful and unsuccessful candidates using a t-test. Data on time and questionnaire responses were analysed using descriptive statistics.
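For readers who wish to reproduce a comparison of this kind outside Excel, the following Python sketch computes a two-sample t statistic from group summary statistics. The paper does not state which variant of the t-test was used; Welch's unequal-variance form is an assumption made here for illustration, and the function name is hypothetical. The inputs are the group means, SDs and sizes reported in this paper.

```python
from math import sqrt


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom.

    Works from summary statistics (mean, sample SD, group size) rather
    than raw scores. The choice of Welch's variant is an assumption.
    """
    var1 = sd1 ** 2 / n1  # squared standard error of group 1 mean
    var2 = sd2 ** 2 / n2  # squared standard error of group 2 mean
    t = (mean1 - mean2) / sqrt(var1 + var2)
    df = (var1 + var2) ** 2 / (var1 ** 2 / (n1 - 1) + var2 ** 2 / (n2 - 1))
    return t, df


# Successful (n=10) versus unsuccessful (n=15) candidates, as reported.
t_stat, df = welch_t(4.28, 0.27, 10, 2.99, 0.71, 15)
```

Under these assumptions the statistic comes out at roughly 6.4 on about 19 degrees of freedom, which is in keeping with the reported p<0.001.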

This study was undertaken as part of a larger study to understand residency selection interviews in OBGYN. SingHealth Centralised Institutional Review Board exempted this study from further review.

Figure 1: Example of voting display screen

III. RESULTS

Ten interviewers participated in the selection process, using a total of 15 clickers in accordance with the weightage described above.

The mean score for all 25 candidates was 3.51 (SD 0.86, range 1.50–4.73), with a combined score of 87.75. The mean score for the 10 successful candidates was 4.28 (SD 0.27, range 3.86–4.73), compared to 2.99 (SD 0.71, range 1.50–3.79) for the 15 unsuccessful candidates (p<0.001). The total time taken to vote for 25 candidates was 650 seconds, with a mean of 26 seconds per candidate. All interviewers recorded decisions within two minutes.
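The reported figures are internally consistent, as the short arithmetic check below illustrates. It uses only the numbers stated above; the variable names are illustrative.

```python
# Reported group sizes and mean scores.
n_successful, mean_successful = 10, 4.28
n_unsuccessful, mean_unsuccessful = 15, 2.99
n_candidates = n_successful + n_unsuccessful

# The size-weighted average of the two group means should match the
# overall mean of 3.51 to two decimal places.
overall_mean = (
    n_successful * mean_successful + n_unsuccessful * mean_unsuccessful
) / n_candidates

# The combined score is the overall mean multiplied by the number of candidates.
combined_score = 3.51 * n_candidates

# Voting time: 650 seconds in total across 25 candidates.
total_seconds = 650
mean_seconds_per_candidate = total_seconds / n_candidates
total_minutes = total_seconds / 60
```

The weighted average rounds to 3.51, the combined score is 87.75, and the total voting time is just under 11 minutes, or 26 seconds per candidate.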

All ten interviewers (100%) returned the questionnaire and answered “Yes” to the three questions, favouring the confidentiality, instantaneous results, and graduated response provided by the ARS.

IV. DISCUSSION

Clickers are increasingly used in medical education and are familiar to most college faculty members (Lewin, Vinson, Stetzer, & Smith, 2016). The ARS in this study is a shared system used by all SingHealth OBGYN faculty and residents. The interviewers’ familiarity with clickers made them a rapid and accurate way for administrators to process votes following the interview. The total time taken for all interviewers to record votes for all 25 candidates was under 11 minutes. In a national survey of internal medicine program directors, the average cost of recruiting for one postgraduate year 1 position was US$9899, of which 96% was attributed to effort, and therefore time, contributed by faculty, chief residents and administrative staff (Brummond et al., 2013). Time-saving strategies are crucial to reduce the growing costs of resident recruitment. We found no studies of time efficiency following implementation of clickers apart from one that reported no difference in time spent lecturing between clicker and non-clicker classes in the university-level science, technology, engineering and mathematics setting (Lewin et al., 2016). However, our use of clickers did not involve lecturing, and further studies are needed to compare the time difference between traditional and clicker voting methods in resident selection.

The interviewers liked the anonymity provided by clickers. The value of the anonymity that clickers offer to vulnerable groups has also been noted in non-medical literature (Keifer, Reyes, Liebman, & Juarez-Carrillo, 2014). In the selection interview context, anonymity reduces the uncontrolled swaying of votes by dominant individuals, which can severely bias voting results. We believe clickers allow programs to control the influence of each assessor through predetermined, previously agreed weighted votes, here by allocating more clickers to residency leaders in recognition of their greater experience. In other situations, equal weightage for each voter may be more appropriate, and the allocation can be adjusted to suit needs. Immediate tabulation of results further adds transparency. Should an inquiry arise about the resident selection process, the decisions recorded by each interviewer can easily be reviewed through the program administrator.

Interviewers liked the instant response provided by clickers. This mirrors feedback from other ARS users, such as a study of graduate students which reported that the primary benefit of clickers was immediate feedback (Benson, Szucs, & Taylor, 2016).

This study has limitations. This single cohort study utilised a single ARS which may not reflect practices of other programs or ARS platforms. However, many currently available ARS platforms share common functions. We did not compare the time taken to record the decisions of interviewers during interview sessions which did not utilise clickers nor did we evaluate reasons for delay in decision-making time beyond the mean. Further study of these factors may identify individual and system-based problems such as interviewers’ variation in familiarity with clickers and coping with multiple keypads.

V. CONCLUSION

In summary, study of interviewer feedback suggests that clickers enhance residency selection by providing a rapid and anonymous method of collating interviewer decisions following a rigorous selection process. The introduction of clickers to the selection process was well-received by all interviewers in this study and highly recommended for similar use by other residencies.

This simple, novel extension to the use of clickers beyond the classroom illustrates how we can extend the use of facilities already available within our medical institutions to improve existing systems. Such attitudes need to continue to be encouraged within healthcare services.

Research is currently in progress to study the multi-station interview process and its correlation with success during residency.

Notes on Contributors

Dr. Jill Cheng Sim Lee is a senior resident in OBGYN at SingHealth and former Chief Resident for Education within her department. She has a Master of Science in Clinical Education and is involved in undergraduate medical education at Lee Kong Chian School of Medicine, Nanyang Technological University.

Dr. Muhammad Fairuz Abdul Rahman is a 4th year resident in OBGYN at SingHealth.

Dr. Weng Yan Ho is a senior resident in OBGYN at SingHealth and former Chief Resident for Administration within her department.

Mor Jack Ng is the Manager of the SingHealth-Duke-National University of Singapore (NUS) OBGYN Academic Clinical Program (ACP).

Prof. Kok Hian Tan is Senior Associate Dean of Academic Medicine at Duke-NUS, Group Director of Academic Medicine at SingHealth and Head of Perinatal Audit and Epidemiology at KK Women’s and Children’s Hospital (KKH). He is also editor-in-chief of the Singapore Journal of Obstetrics and Gynaecology.

A/Prof. Bernard Su Min Chern is Chairman of Division of OBGYN and Head and Senior Consultant of both the Department of OBGYN and Minimally Invasive Surgery Unit at KKH. He is also Chairman of the SingHealth-Duke-NUS OBGYN ACP and was formerly Program Director of the SingHealth OBGYN Residency Program.

Ethical Approval

This study was undertaken as part of a larger study to understand residency selection interviews in OBGYN. SingHealth Centralised Institutional Review Board approved this study with an exempt status.

Acknowledgements

The authors would like to acknowledge the support and cooperation provided by the faculty and staff at the SingHealth OBGYN Residency Program during the period of this study.

Funding

Funding for this study was borne internally by SingHealth OBGYN Residency Program. No external funding sources were required.

Declaration of Interest

All authors have no potential conflicts of interest.

References

Benson, J. D., Szucs, K. A., & Taylor, M. (2016). Student Response Systems and Learning: Perceptions of the Student. Occupational Therapy In Health Care, 30(4), 406–414. https://doi.org/10.1080/07380577.2016.1222644.

Brummond, A., Sefcik, S., Halvorsen, A. J., Chaudhry, S., Arora, V., Adams, M., … Reed, D. A. (2013). Resident Recruitment Costs: A National Survey of Internal Medicine Program Directors. The American Journal of Medicine, 126(7), 646–653. https://doi.org/10.1016/j.amjmed.2013.03.018.

Caldwell, J. E. (2007). Clickers in the large classroom: Current research and best-practice tips. CBE-Life Sciences Education, 6(1), 9–20.

Keifer, M. C., Reyes, I., Liebman, A. K., & Juarez-Carrillo, P. (2014). The use of audience response system technology with limited-english-proficiency, low-literacy, and vulnerable populations. Journal of Agromedicine, 19(1), 44–52.

Lewin, J. D., Vinson, E. L., Stetzer, M. R., & Smith, M. K. (2016). A Campus-Wide Investigation of Clicker Implementation: The Status of Peer Discussion in STEM Classes. Cell Biology Education, 15(1), ar6,1-ar6,12. https://doi.org/10.1187/cbe.15-10-0224.

*Dr Jill C. S. Lee

Email: jill.lee.c.s.@singhealth.com.sg

Division of Obstetrics and Gynaecology,

KK Women’s and Children’s Hospital,

100 Bukit Timah Road, Singapore 229899

Tel: +65 6225 5554

Published online: 2 January, TAPS 2019, 4(1), 48-50

DOI: https://doi.org/10.29060/TAPS.2019-4-1/SC1067

Nattabhorn Bunplook1, Mechita Kongphiromchuen1, Priyapat Phatinawin1 & Panadda Rojpibulstit2

1Medical Education Centre Buddhasothorn Hospital, Faculty of Medicine, Thammasat University, Thailand; 2Department of Biochemistry, Preclinical Science Institute, Faculty of Medicine, Thammasat University, Thailand

Abstract

Aim: To encourage first-year medical students to improve their health by drinking more water and to become role models of a health-promoting lifestyle.

Methods: The campaign was launched in February 2016 with seven main activities, promoted via social networking apps (Line and Facebook). Pre- and post-campaign questionnaires on participants’ drinking behaviour and on which activities were effective were administered via Google Forms. Data were analysed using descriptive statistics and a dependent t-test.

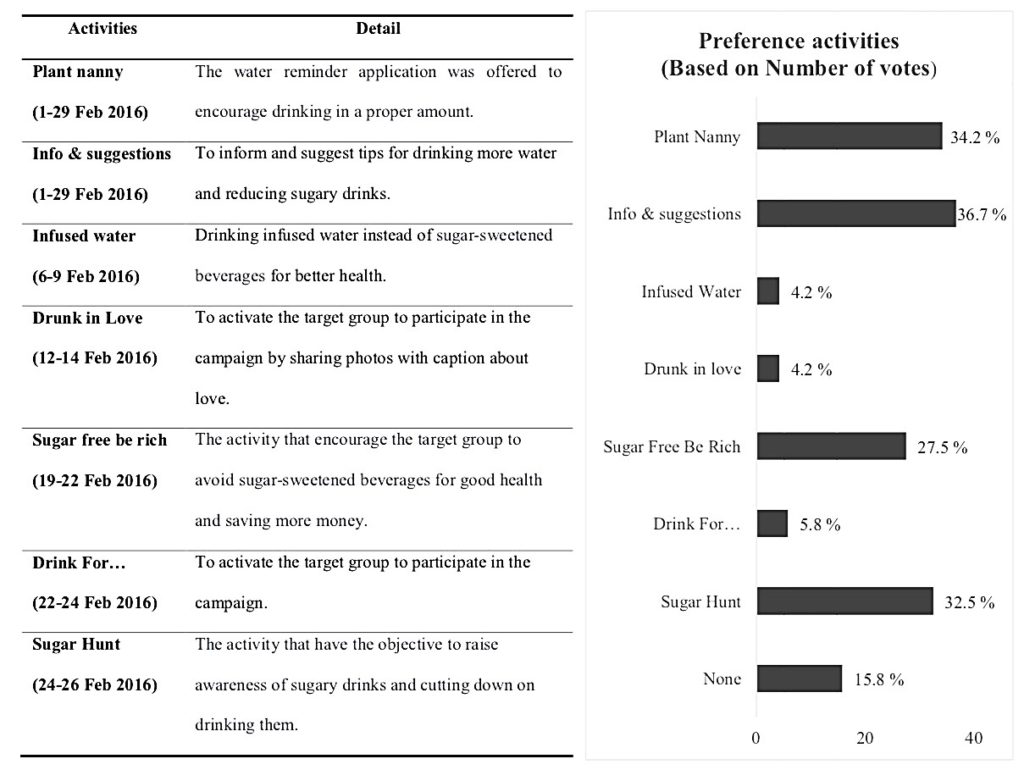

Results: The response rate for the questionnaire was 74.71% (127/170). After 4 weeks of the campaign, average water intake had increased significantly (t = 6.359, p < 0.05) and average consumption of sugar-sweetened beverages had decreased significantly (t = -8.256, p < 0.05). Of the seven activities, the three most preferred were ‘Info & suggestions’, ‘Sugar Hunt’ and ‘Sugar Free Be Rich’.

Conclusions: People increasingly prefer sugary drinks to plain water, and many assume that computer games and social media are themselves prevalent problems. In reality, if technology is used wisely, it can provide a much more efficient way to reach the target group. Our study therefore draws on the appropriate use of games and social media to address the current issues. However, some activities have to be improved to attract more of the target group, and the duration of the campaign should be increased for better long-term behavioural change.

Keywords: Health Promotion, Medical Students, Obesity, Lifestyle

I. INTRODUCTION

The global prevalence of non-communicable diseases (NCDs) is increasing markedly, and NCDs kill more than 36 million people each year (World Health Organization, 2016). The four main types of NCDs are cardiovascular diseases, cancers, chronic pulmonary diseases and diabetes. These diseases are a direct result of lifestyle and environmental factors, alongside genetics, ageing, poverty and the globalisation of unhealthy lifestyles. For example, poverty is a barrier to accessing health services, and the globalisation of unhealthy lifestyles brings physical inactivity and exposure to harmful products.

Changes in the modern world have led to unhealthy lifestyles such as physical inactivity, smoking, alcohol abuse and unhealthy diets. Eating and drinking behaviours have changed. Changes in eating behaviour include overeating and eating high-calorie or fast food. As for drinking behaviour, the variety of beverages that are easily accessible, delicious and inexpensive has led to a preference for sugar-sweetened beverages (SSB) over plain water. Drinking larger amounts of SSB increases the risk of overweight, obesity and diabetes (Al-Qahtani, 2016; Shah et al., 2014).

Although medical students are future doctors who should be role models of health promotion, many studies have found that medical students have unhealthy dietary habits, especially drinking SSB (Frank, Carrera, Elon, & Hertzberg, 2006).

Therefore, the campaign “You are what you drink” was launched to encourage medical students to consume water instead of sweetened beverages. The intended outcome of the promoted activities was to lead students to better health and to help them become role models of a health-promoting lifestyle.

II. METHODS

A purposive sampling method was used to recruit 170 first-year medical students at Thammasat University to participate in the interventional study, which comprised seven main activities: (1) Plant Nanny, (2) Info & suggestions, (3) Infused water, (4) Drunk in love, (5) Sugar Free be rich, (6) Drink for… and (7) Sugar hunt. All of these activities were launched via social networking apps (Line and Facebook) so that they were broadcast directly to the target group.

Additionally, before and after the interventional period, the participants were asked to complete pre and post self-administered questionnaires launched via Google Forms. The online questionnaires consisted of 5 items regarding SSB consumption and the activities that could effectively improve beverage consumption. These questionnaires were used to collect data on the participants’ drinking behaviour, which were analysed using descriptive statistics and a dependent t-test.
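A dependent (paired) t-test of the kind named above can be sketched as follows. This is a minimal illustration, not the authors' analysis: the function name and the intake figures are hypothetical, and differences are taken as post minus pre so that an increase gives a positive t and a decrease a negative t.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Dependent t statistic and degrees of freedom for matched pre/post values."""
    if len(pre) != len(post):
        raise ValueError("pre and post must have one value per participant")
    # One difference per participant: post-campaign minus pre-campaign.
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Mean difference divided by its standard error (sample SD / sqrt(n)).
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


# Hypothetical daily water intake (glasses) for four participants.
pre_water = [1, 2, 3, 4]
post_water = [2, 4, 5, 5]
t_water, df_water = paired_t(pre_water, post_water)
```

Because each participant serves as their own control, the test works on the per-participant differences rather than on the two group means separately.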

Importantly, to attract the participants’ attention, prizes were offered in each activity. Students who recorded their water intake every day had a chance to win special prizes at the end of the project.

III. RESULTS

Of the 170 first-year medical students, 127 participated (response rate of 74.71%). Thirty-six of the participants were male and 91 were female. Their mean age was 17.62 ± 0.64 years. After 4 weeks of the campaign, average water intake was significantly higher than at the beginning (t = 6.359, p < 0.05). Additionally, average consumption of SSB decreased significantly (t = -8.256, p < 0.05). Based on number of votes, the most preferred activities were ‘Info & suggestions’, ‘Sugar Hunt’ and ‘Sugar Free Be Rich’.

Figure 1. The 7 main activities to encourage medical students (left) and the percentage of preferred activities based on number of votes (right)

IV. DISCUSSION

The campaign results showed that the participants’ average water intake increased and their average consumption of SSB decreased. The most preferred activities were ‘Info & suggestions’, ‘Sugar Hunt’ and ‘Sugar Free Be Rich’.

First, ‘Info & suggestions’ may have been among the most popular activities because participants received information and practical tips for drinking more water and reducing SSB consumption.

Second, ‘Sugar Hunt’ was among the most preferred activities because of the incentive of winning the competition.

Third, the popularity of ‘Sugar Free Be Rich’ might be due to its ability to raise participants’ awareness of the amount of money wasted on SSB.

However, some activities, such as ‘Infused water’, were not suitable for the participants, possibly because finding fruit was too much effort for them. In addition, ‘Drunk in Love’, held during Valentine’s week, and ‘Drink for…’ did not improve drinking behaviour because they ran for only a short time and therefore could not create enough impact.

V. CONCLUSION

In today’s fast-changing world, people increasingly prefer SSB to plain water, and many assume that computer games and social media are themselves prevalent problems. In reality, if technology is used wisely, it can provide a much more efficient way to reach the target group. Our study therefore draws on the appropriate use of games and social media to address the current issues and improve quality of life. The issue is not only the high rate of SSB consumption but also the wider social context as a whole.

Notes on Contributors

Nattabhorn Bunplook, Mechita Kongphiromchuen and Priyapat Phatinawin are medical students from the Collaborative Project to Increase Production of Rural Doctor (CPIRD) at Medical Education Centre Buddhasothorn Hospital. All of them contributed equally to the conception, experimental design and implementation of the study.

Panadda Rojpibulstit is an Associate Professor in Biochemistry and a former Vice Dean of Student Affairs and Health Promotion of the Faculty of Medicine, Thammasat University. She contributed to drafting and revising the manuscript.

All authors read and approved the final manuscript.

Ethical Approval

This study was approved by the Human Ethics committee of Thammasat University No. I (Faculty of Medicine) (IRB No. 097/2560).

Funding

There was no funding support.

Acknowledgements

We would like to thank all of the participants, the first-year medical students of Thammasat University (MEDTU26). We would also like to acknowledge the Faculty of Medicine, Thammasat University and the Foundation for Medicine and Public Health, CPIRD Buddhasothorn, Thailand, for the travel grants to attend the 14th APMEC conference, 2017.

Declaration of Interest

The authors declare that they have no competing interests.

References

Al-Qahtani, M. H. (2016). Dietary Habits of Saudi Medical Students at University of Dammam. International Journal of Health Sciences, 10(3), 353-362.

Frank, E., Carrera, J. S., Elon, L., & Hertzberg, V. S. (2006). Basic demographics, health practices, and health status of U.S. medical students. American Journal of Preventive Medicine, 31(6), 499–505. https://doi.org/10.1016/j.amepre.2006.08.009.

Shah, T., Purohit, G., Nair, S. P., Patel, B., Rawal, Y. & Shah, R. M. (2014). Assessment of Obesity, Overweight and Its Association with the Fast Food Consumption in Medical Students. Journal of Clinical and Diagnostic Research, 8(5), CC05-CC07. https://doi.org/10.7860/JCDR/2014/7908.4351.

World Health Organization. (2016). Global Report on Diabetes. Retrieved from http://apps.who.int/iris/bitstream/10665/204871/1/9789241565257_eng.pdf.

*Panadda Rojpibulstit

Biochemistry Department, Preclinical Sciences Institutes,

Faculty of Medicine, Thammasat University,

Pathumthani, Thailand, 12121

Tel: +66 9269710

Email: panadda@tu.ac.th

Published online: 7 May, TAPS 2019, 4(2), 52-57

DOI: https://doi.org/10.29060/TAPS.2019-4-2/SC2035

Marianne Meng Ann Ong1 & Sandy Cook2

1Department of Restorative Dentistry, National Dental Centre, Singapore; 2Academic Medicine Education Institute, Duke-NUS Medical School, Singapore

Abstract

Aim: To describe residents’ expectations of faculty using the One-Minute Preceptor (OMP) microskills and their ratings of faculty performing them during clinical sessions.

Methods: Prior to the start of residency, residents were invited to complete a survey on their expectations of faculty performing the OMP microskills in clinical teaching activities, using a 4-point Likert scale. At the end of Year 1, they completed a second survey in which they rated faculty on their use of the OMP microskills, again using a 4-point Likert scale.

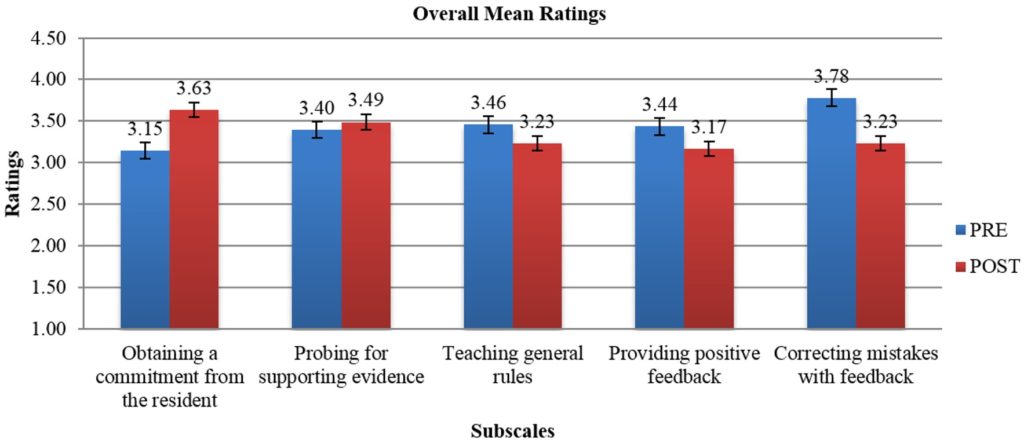

Results: Sixteen Year 1 residents completed the first survey and 15 residents completed the second survey. Prior to residency, correcting mistakes with feedback was the highest rated microskill (3.78) and obtaining a commitment was the lowest rated (3.15). At the end of Year 1, residents rated faculty performance of obtaining a commitment the highest (3.63) and of providing positive feedback the lowest (3.17).

Conclusion: In this small cohort of residents, expectations were high around the OMP microskills. Residents felt faculty performed them well in their first year of residency. However, residents’ views of the importance of the five OMP microskills differed from their perceptions of how well the faculty demonstrated them. Future studies could explore whether residents’ perceptions of importance change over time or are related to their view of the quality of performance by faculty. Faculty will be further encouraged to employ the five OMP microskills to maximise their teaching moments with residents managing patients in busy outpatient clinics in National Dental Centre Singapore.

Keywords: Learner Perception, Expectation, Evaluation, Clinical Teaching

I. INTRODUCTION

Some challenges faced by learners and faculty in clinical teaching include work demands, time constraints, multiple levels of learners and lack of active participation of learners (Spencer, 2003). The use of the One Minute Preceptor (OMP) in microskills in clinical teaching has been shown to be easy to learn and effective in helping faculty improve their teaching (Furney et al., 2001) in addition to maximising their teaching moments with learners in busy outpatient clinics (Neher, Gordon, Meyer, & Stevens, 1992). The OMP framework consists of five microskills: obtaining a commitment (OAC); probing for supporting evidence (PSE); teaching general rules (TGR); providing positive feedback (PPF); and correcting mistakes with feedback (CMF). Depending on the situation, each microskill can be used either on its own or in any sequence to cater to different learning contexts. Earlier studies done in National Dental Centre Singapore (NDCS) assessed whether OMP in microskills faculty development workshops had an impact on in-flight residents’ perceptions of clinical teaching (Ong, Yow, Tan, & Compton, 2017) and obtained information on past residents’ perceptions on the importance and frequency of the OMP microskills in residency clinics (Ong, Woo, & Cook, 2016). However, we found no studies in the literature that explored incoming dental residents’ expectations of faculty using the OMP microskills during clinical sessions in their residency programmes, nor their perceptions of faculty performing them in their first year of the programme. This descriptive study thus describes Year 1 residents’ expectations and perceptions of clinical teaching activities performed by faculty.

II. METHODS

A. Study Design

This is a descriptive study on residents’ expectations and perceptions of clinical teaching activities performed by faculty. The protocol was sent to SingHealth CIRB (Ref: 2018/2137) and it was deemed exempt from review.

B. Subjects

During the Academic Year (AY) 2015 orientation session in June 2015, prior to the start of residency programmes, all sixteen residents in five dental specialities (Endodontics, Oral and Maxillofacial Surgery, Orthodontics, Periodontics and Prosthodontics) were invited to participate in this survey.

C. Surveys

Two surveys were developed to explore Year 1 residents’ expectations and perceptions of clinical teaching activities in relation to the OMP microskills. The first survey (PRE) (see Appendix A) had 14 items grouped by the microskills: OAC (3 items), PSE (3 items), TGR (3 items), PPF (3 items) and CMF (2 items). Residents rated the importance they placed on faculty engaging in teaching activities related to them on a 4-point Likert scale (1 = not important to 4 = very important).

The second survey (POST) (see Appendix B), administered at the end of their first year (June 2016), had 13 items that were also grouped by the microskills: OAC (4 items), PSE (3 items), TGR (2 items), PPF (2 items) and CMF (2 items). While similar to the PRE survey in microskills classification, these items focused on how well faculty performed the microskills, rated on a 4-point Likert scale (1 = inadequate to 4 = excellent), and included slightly different phrasing. The items of both surveys were reviewed by 2 residents in Year 2 of their residency programmes for relevance, ease of use, language and clarity.

D. Administration of Surveys

At the AY 2015 orientation session, paper copies of the PRE survey were handed out to residents after the overview briefing session of NDCS residency training programmes by the Director of Education. At the end of Year 1, during the annual programme evaluation session, paper copies of the POST survey were handed out to residents to fill in by an Academic Clinical Programme (ACP) office executive.

E. Data Analysis

The PRE and POST survey data were collated by an executive in the ACP office. De-identified data were sent to the staff of Duke-NUS Office of Education to provide basic descriptive statistics. Individual items in each microskill category for both surveys were averaged to get a general sense of the residents’ views by category. No further statistical analysis was done as different rating scales were used and there was slight variation in the phrasing of items in both surveys.
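The averaging step described above can be sketched in a few lines of Python. This is a minimal illustration only, not the analysis actually run by the Office of Education: the item numbering, the function name `category_means` and the two demonstration respondents are all assumptions made up for the example, and only the category sizes (3, 3, 3, 3 and 2 items) come from the PRE survey description. The sketch pools every rating in a category before averaging, which matches the mean of item means when every respondent answers every item.

```python
from statistics import mean

# Hypothetical item-to-category mapping for the PRE survey (14 items).
# Category sizes follow the paper; the item numbering is an assumption.
PRE_ITEMS = {
    "OAC": [1, 2, 3],
    "PSE": [4, 5, 6],
    "TGR": [7, 8, 9],
    "PPF": [10, 11, 12],
    "CMF": [13, 14],
}


def category_means(responses, items_by_category):
    """Average all item ratings within each microskill category.

    responses: list of dicts mapping item number -> rating (1 to 4).
    Returns a dict mapping category -> mean rating rounded to 2 decimals.
    """
    result = {}
    for category, items in items_by_category.items():
        # Pool every available rating for the items in this category.
        ratings = [r[i] for r in responses for i in items if i in r]
        result[category] = round(mean(ratings), 2)
    return result


# Made-up ratings from two fictitious respondents, for illustration only.
demo = [
    {i: 3 for i in range(1, 15)},
    {**{i: 4 for i in range(1, 15)}, 14: 3},
]
print(category_means(demo, PRE_ITEMS))
```

Because the PRE and POST surveys use different items and different scale anchors, the same function would be applied to each survey separately with its own item mapping, and the resulting category means would be read side by side rather than subtracted.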

III. RESULTS

Sixteen Year 1 residents (100% response rate) completed the PRE with overall mean ratings of importance ranging from 3.15 (OAC) to 3.78 (CMF) for the five microskills (Figure 1). Fifteen residents (93.75% response rate) completed the POST (1 resident dropped out of a residency programme before the end of Year 1) with overall mean ratings of quality ranging from 3.17 (PPF) to 3.63 (OAC) (Figure 1).

Note: While the broad categories are similar, the rating scales and the individual items averaged within each microskills category differed between the two surveys. The PRE scale was 1 = not important to 4 = very important. The POST scale was 1 = inadequate to 4 = excellent.

Figure 1. Overall mean ratings on the importance of OMP activities (PRE) and mean ratings on quality of faculty performing OMP activities (POST)

IV. DISCUSSION

In this study, clinical teaching was defined as performance of teaching activities related to OMP microskills. Prior to the start of residency, residents in this cohort rated faculty CMF (PRE: 3.78) as the most important of the five OMP microskills. Yet it was not among the microskills rated as best performed by faculty (POST: 3.23). This is an area NDCS faculty can improve upon. The OMP microskill rated as best performed by faculty, OAC (POST: 3.63), was actually the least important to residents (PRE: 3.15). Overall, however, residents perceived faculty as performing all five OMP microskills well (>3.0) in clinical teaching.

Earlier data obtained from past learners in the residency programmes from a 2015 general survey done in NDCS had revealed they valued the use of the OMP microskills in clinical teaching. The two microskills with the highest mean ratings on importance were OAC and CMF (Ong et al., 2016). The frequently demonstrated microskills in that survey were OAC and TGR. In contrast, a survey done with in-flight residents in 2014 exploring the short-term follow up after an OMP in microskills workshop for faculty, had revealed TGR as the most adequately performed microskill by faculty (Ong et al., 2017). The differences in perceptions in OMP microskills in clinical teaching by faculty can be attributed to the different cohorts of learners surveyed at different periods of time. It is not unexpected that learners at various stages of their residency would have different expectations and perceptions of the five microskills in clinical sessions.

Limitations of this study include its small sample size and its reliance on self-report: residents responded to survey items based on their own subjective expectations of faculty demonstrating the OMP microskills in clinical teaching, and on their own subjective assessment of how well faculty performed them in the clinics during their first year of residency. The nature of the study, the different rating scales and the variation in the phrasing of the survey items limited our ability to determine any change in perceptions of importance and quality in the OMP microskills.

V. CONCLUSION

In this small cohort of residents, expectations were high for certain OMP microskills, and residents felt that faculty performed the microskills well. However, residents’ views of the importance of the five OMP microskills differed from their perceptions of how well faculty demonstrated them. Future studies could explore whether residents’ perceptions of importance change over time or are related to their views on the quality of performance by faculty. Faculty will be further encouraged to employ the five OMP microskills in clinical teaching to maximise their teaching moments with residents managing patients in busy outpatient clinics in NDCS.

Notes on Contributors

Dr Marianne M. A. Ong, Cert Perio (Michigan), MS (Michigan); Adjunct Associate Professor, Duke-NUS Medical School, Singapore; Senior Consultant & Director, Education National Dental Centre Singapore.

Dr Sandy Cook, PhD; Senior Associate Dean, Deputy Head of Office of Education; Deputy Director, Academic Medicine Education Institute; Professor, Duke-NUS Medical School.

Ethical Approval

The protocol was sent to SingHealth CIRB (Ref: 2018/2137) and was deemed not requiring a formal review as it reports on residents’ expectations and perceptions of clinical teaching activities performed by faculty and satisfaction with their first-year experience.

Acknowledgements

The authors would like to give special thanks to Ms Kia-Mun Woo from the MD Programme Department for her help in the data analysis.

Funding

The authors have not received any funding or benefits from industry or elsewhere to conduct this study.

Declaration of Interest

The authors have no conflict of interest.

References

Furney, S. L., Orsini, A. N., Orsetti, K. E., Stern, D. T., Gruppen, L. D., & Irby, D. M. (2001). Teaching the one-minute preceptor: A randomized controlled trial. Journal of General Internal Medicine, 16(9), 620-624. https://doi.org/10.1046/j.1525-1497.2001.016009620.x

Neher, J. O., Gordon, K. C., Meyer, B., & Stevens, N. (1992). A five-step “microskills” model of clinical teaching. Journal of the American Board of Family Practice, 5(4), 419-424. Retrieved from https://www.jabfm.org/content/5/4/419

Ong, M. M., Woo, K. M., & Cook, S. (2016). A general survey on learners and faculty perspectives on educational activities held in the National Dental Centre, Singapore. Proceedings of Singapore Healthcare, 25(3), 158-168. https://doi.org/10.1177/2010105816642246

Ong, M. M., Yow, M., Tan, J., & Compton, S. (2017). Perceived effectiveness of one-minute preceptor in microskills by residents in dental residency training at National Dental Centre Singapore. Proceedings of Singapore Healthcare, 26(1), 35-41. https://doi.org/10.1177/2010105816666294

Spencer, J. (2003). Learning and teaching in the clinical environment. British Medical Journal, 326(7389), 591-594. https://doi.org/10.1136/bmj.326.7389.591

*Marianne Ong

Department of Restorative Dentistry

5 Second Hospital Avenue, Singapore 168938

National Dental Centre Singapore

Tel: +65 63248925

E-mail: marianne.ong.m.a@singhealth.com.sg

Published online: 7 May, TAPS 2019, 4(2), 48-51

DOI: https://doi.org/10.29060/TAPS.2019-4-2/SC2070

Zhun Wei Mok, Jill Cheng Sim Lee & Manisha Mathur

Division of Obstetrics and Gynaecology, KK Women’s and Children’s Hospital, Singapore

Abstract

Introduction: At KK Women’s and Children’s Hospital’s (KKWCH) Department of Obstetrics and Gynaecology (O&G), a junior doctor’s handbook exists to guide safe practice. A challenge remains in ensuring relevant, current, and readily accessible content. The onus of re-editing is left to senior clinicians with heavy clinical and supervisory roles, leading to a lack of sustainability. Mobile applications (apps) can be a sustainable ‘just-in-time’ learning resource for junior doctors as they balance new responsibilities with relative inexperience.

Methods: The app was developed in-house with the Residency’s EduTech Office. A focus group comprising junior doctors identified content deemed useful. The alpha version was launched in August 2017 and trialled amongst the wider junior doctor population. Data on usefulness were collected through serial focus groups and analysed using grounded theory.

Results: An online survey disseminated to all 100 junior doctors showed that 100% owned a smartphone. 97.1% supported this new resource. Consultative discussions recommended inclusion of (i) Procedural and consent information; (ii) Risk calculators; and (iii) Clinical pathways and management algorithms. Mobile learning apps entreat the user to immediately reflect and conceptualise their concrete experiences, and actively experiment with the content to build on his/her current knowledge. Learners become stakeholders in creating their own learning material. Qualitative feedback indicated a continued interest to contribute, underscoring the app’s sustainability potential.

Conclusions: Apps can be a sustainable on-the-go resource developed by junior doctors, for junior doctors. Learners become stakeholders in creating their own learning material through continued reflection, conceptualisation and active experimentation. This can be scaled for wider clinical use.

Keywords: Sustainable Mobile Learning, Mobile Applications, On-the-Go Resource, Junior Doctors, Obstetrics and Gynaecology

I. INTRODUCTION

Mobile technology is an integral part of an ongoing technological revolution within medical practice. Junior doctors are often the first-line medical staff in contact with patients, tasked to provide adequate patient counselling and uphold standards of care in the ambulatory and inpatient settings while negotiating a fresh learning curve. Harnessing this technology in the form of mobile apps serves as an on-the-go resource when needed, and supports the new doctors’ preparation for patient encounters as they balance newly increased responsibilities with relative inexperience (Bullock et al., 2015; Guze, 2015).

At the KK Women’s and Children’s Hospital (KKWCH) Department of Obstetrics and Gynaecology (O&G), whilst a physical handbook of departmental guidelines currently exists to guide safe practice, there remains a challenge in ensuring sustainability on three levels: relevance to existing knowledge gaps, currency with new evidence, and accessibility on demand. In addition, the onus of re-editing was often left to senior clinicians, who had to undertake this portfolio in addition to heavy clinical and supervisory roles, leading to a challenge in sustainability. Given the widespread use of smartphones and apps in the workplace, harnessing this technology may change the learning ecology and transform this into a sustainable on-the-go resource to access much-needed knowledge amongst junior doctors.

Thus, a pilot project aimed at developing a sustainable mobile app for junior doctors for easy consultation of key knowledge to support their clinical practice was mooted. Central to this was the basis that the understanding of common O&G procedures, their risks and complications, access to department-specific guidelines, algorithms and risk calculators will ideally translate into better counselling of patients and ensure standards of care. This is especially so now that the newly updated Singapore Medical Council (SMC) Ethical Code and Ethical Guidelines 2016 has identified the provision of informed consent to be a cornerstone of every new doctor’s practice.

Following the proof-of-concept of this tool, this will be the first step towards making learning more accessible outside the conventional pedagogical setting, and be responsive to the juniors’ developing knowledge. Learners can be engaged to take a stake in creating and sustaining their own learning material.

II. METHODS

An online survey on the use of an app to replace the existing handbook was first disseminated electronically to all junior O&G doctors (House Officer, Medical Officers, Residents and Registrars) in KKWCH, which aimed to assess the feasibility of this new tool, and the receptivity towards it. An invitation was simultaneously extended to interested junior doctors to form a focus group to identify initial content deemed best able to fill existing knowledge gaps.

Preliminary discussions were then undertaken with the Programme Director, Academic Chair, SingHealth Designated Institutional Officer and Faculty. The app was developed in-house with the SingHealth Residency’s EduTech Office with funding from the Academic Clinical Program (ACP). The focus group were asked to identify content deemed most useful for themselves and their peers. The alpha version was launched in August 2017 and was trialled amongst the wider junior doctor audience. Data on usefulness and weaknesses were collected through serial focus groups and analysed using grounded theory. Pre- and post-implementation surveys will be conducted to determine the usefulness of the app in daily clinical practice, with a view for broadening the scope of content and scalability to other departments.

III. RESULTS

In September 2016, 100 KKWCH O&G junior doctors were invited to participate in an online survey, with a response rate of 70%. All respondents (100%) owned a smartphone and had experience of using apps, and 98.6% used their smartphones at work. Of the respondents, 77.1% used an iOS platform and the remaining 22.9% used an Android platform. An overwhelming majority (97.1%) supported the use of a mobile app for teaching of common O&G topics/procedures. Thirteen respondents agreed to participate in the working focus group to develop this resource.
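The reported percentages can be checked against the number of respondents with a short calculation. The sketch below is an illustration only: it assumes that every percentage is a share of the 70 respondents implied by the 70% response rate, and the helper name `implied_count` is hypothetical. The head counts it derives are not stated in the survey results and should be read as arithmetic consequences of that assumption.

```python
def implied_count(percent, n):
    """Return the whole-number count closest to `percent` of `n`."""
    return round(percent * n / 100)


invited = 100
respondents = implied_count(70, invited)  # 70% response rate

# Percentages as reported in the survey results.
reported = {
    "uses smartphone at work": 98.6,
    "uses iOS": 77.1,
    "uses Android": 22.9,
    "supports a teaching app": 97.1,
}

for label, pct in reported.items():
    k = implied_count(pct, respondents)
    # Re-derive the percentage to confirm it rounds back to the reported value.
    assert round(100 * k / respondents, 1) == pct
    print(f"{label}: {k}/{respondents}")
```

Under this assumption each reported figure corresponds to a whole number of respondents, and the iOS and Android counts together account for all of them, which is consistent with the platform split being reported for all respondents.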

Results of the pilot survey lent credence to the conceptualisation of a mobile learning app to meet the needs of the user population.

Following the launch of the alpha version in August 2017, serial 3-monthly focus groups were recruited to trial the alpha version for iterative improvements. This was not restricted to the original focus group members, and any junior doctor with suggestions could attend. Between 6 and 14 participants were present at each meeting, with a mix of recurrent and new focus group attendees. This represented an ongoing interest in the continued development of the app, and in future, may reflect the self-sustaining capability of the app with a constant inflow and outflow of junior personnel who will be able to access this knowledge base and contribute towards its expansion.

Topics of commonest reference amongst O&G junior doctors were identified and these key areas were recommended for inclusion:

i. Procedural and consent information

ii. Risk calculators for ambulatory counselling

iii. Clinical pathways and algorithms for inpatient and ambulatory management

Practice guidelines unique to the department were deemed most pertinent, as this obviated the need for internet referencing, especially in filtering content that was not specific to their needs. These recommendations were incorporated into the beta version. Clinical content for the app was prepared by junior doctors from the focus groups. Technical changes were made to improve the ease and intuitiveness of use. Beta-testing is currently underway with ongoing qualitative data collection as part of post-implementation tracking.

IV. DISCUSSION

The development of this mobile app ties in with the theory of a learning ecology, where there is a diverse variety of learning options, allowing each clinician to access and learn according to their own immediate needs (Rashid-Doubell, Mohamed, Elmusharaf, & O’Neill, 2016). The use of technology has been noted as a key element of learning by the US-based Accreditation Council for Continuing Medical Education (ACCME). Its 2015 report estimated that physicians spend 993 hours of instruction on internet searching and learning, a need which may be better served by a dedicated mobile app covering their area of practice. The provision of evidence-based information in a mobile app is in alignment with the Best Evidence Medical Education (BEME) Collaboration, which promotes the development of evidence-based education in the medical and health professions. To date, leading medical institutions such as Johns Hopkins Medicine have put in place mobile apps such as the ‘Hopkins Guides’, a medical mobile app with monthly updates covering clinically relevant topics such as antibiotic stewardship, HIV, Diabetes and Psychiatry. The development of this mobile app is based upon previous successful use of technology in these hospitals.

Based on preliminary focus group findings, harnessing this form of mobile technology can be well utilised as a ‘just-in-time’ information resource in daily clinical practice, particularly when other sources are not available. This provides timely access to key facts and encourages learning in context.

While this should not be seen as a replacement for conventional pedagogical learning, mobile learning will complement the existing learning platforms through textbooks and e-learning portals in existence which may not be easily accessible on the ward or at the bedside.

This use of mobile technology aligns with Kolb’s learning cycle (Bullock & Webb, 2015; Kolb, 1984), with the app serving as an adjunct to experiential and active learning. Mobile learning apps provide on-the-go knowledge that allows users to immediately reflect on and conceptualise their concrete clinical experiences. Through active experimentation with the content of the app, users then arrive at a decision and solution that builds on their current knowledge.

In addition to its benefits on learning, this project allows learners to become stakeholders in creating their own learning material that is responsive to their knowledge gaps. Common themes extracted from qualitative feedback at the focus groups included the app being ‘current and readily available’, and the idea of taking ownership of their learning. The app can also overcome the issue of sustainability. Many have indicated interest in continuing to contribute and encouraging their peers to contribute as it is seen to be ‘for the greater good especially for incoming juniors’. The consistent mix of new and experienced attendees at the 3-monthly focus groups underscores the potential of the app’s sustainability, which is driven by the very users of the app.

Upon completion of beta testing, uptake of this app is expected to be seamless in view of the almost ubiquitous use of smartphones in the clinician population, with easy access to the internet and medical ‘apps’. Its usage rate and utility can then be further assessed, and the app scaled for use in other departments within our sponsoring institution. Continued funding for this project has been promised by the ACP.

V. CONCLUSION

App development can provide a sustainable on-the-go knowledge resource developed by junior doctors, for junior doctors. Following the proof-of-concept through the alpha version and focus group data, its use as an up-to-date knowledge resource tailored to the individual needs of junior clinicians is justified. Learners can be engaged to take a stake in creation of their own learning material through reflection, conceptualisation and active experimentation, and ensure its sustainability. This resource can be easily replicated and provide benefit to wider areas of clinical care.

Notes on Contributors

Z. W. Mok reviewed the literature, and co-wrote the article with J. C. S. Lee; M. Mathur reviewed the article. All authors approved the final version.

Acknowledgements

The authors acknowledge the funding support, inputs from Faculty and support from the junior clinicians in their valuable feedback towards app improvement.

The authors would also like to acknowledge the technical assistance and expertise provided by A. D. H. Lu, I. C. Y. Goh, C. H. C. Tan, W. S. Tey, and M. J. Ng from the SingHealth Residency, Education Technology (EduTech), Centre for Resident and Faculty Development (CRAFD) in developing and improving the app.

Ethical Approval

A waiver of ethical approval was obtained from the Centralised Institutional Review Board at SingHealth Research.

Funding

Funding support was obtained from the Academic Clinical Program (ACP) to engage web development personnel.

Declaration of Interest

There was no conflict of interest in this paper.

References

Bullock, A., Dimond, R., Webb, K., Lovatt, J., Hardyman, W., & Stacey, M. (2015). How a mobile app supports the learning and practice of newly qualified doctors in the UK: An intervention study. BioMed Central Medical Education, 15, 71. https://doi.org/10.1186/s12909-015-0356-8

Bullock, A., & Webb, K. (2015). Technology in postgraduate medical education: A dynamic influence on learning? Postgraduate Medical Journal, 91(1081), 646-650. https://doi.org/10.1136/postgradmedj-2014-132809

Guze, P. A. (2015). Using technology to meet the challenges of medical education. Transactions of the American Clinical and Climatological Association, 126, 260-270.

Kolb, D. A. (1984). Experiential learning: Experience as the source of learning and development. Englewood Cliffs, NJ: Prentice‐Hall.

Rashid-Doubell, F., Mohamed, S., Elmusharaf, K., & O’Neill, C. S. (2016). A balancing act: A phenomenological exploration of medical students’ experiences of using mobile devices in the clinical setting. British Medical Journal Open, 6(5), e011896. https://doi.org/10.1136/bmjopen-2016-011896

*Zhun Wei Mok

100 Bukit Timah Road,

Singapore 229899

KK Women’s and Children’s Hospital

Tel: 6225 5554

Published online: 7 January, TAPS 2020, 5(1), 70-75

DOI: https://doi.org/10.29060/TAPS.2020-5-1/SC2065

Carmel Tepper, Jo Bishop & Kirsty Forrest

Faculty of Health Sciences and Medicine, Bond University, Australia

Abstract

Bond University Medical Program recognises the importance of workplace based assessment as an integrated, authentic form of assessment. In partnership with a software company, the Bond Medical Program has designed and implemented an online Student Clinical ePortfolio, utilising a mobile-enabled, secure, digital platform available on multiple devices from any location allowing a range of clinically relevant assessments “at the patient bedside”. The innovative dashboard allows meaningful aggregation of student assessment to provide an accurate picture of student competency. Students are also able to upload evidence of compliance documentation and record attendance and training hours using their mobile phone.

Assessment within hospitals encourages learning within hospitals, and the Student Clinical ePortfolio provides evidence of multiple student-patient interactions and procedural skill competency. Students also have enhanced interprofessional learning opportunities where nurses and allied health staff, in conjunction with supervising clinicians, can assess and provide feedback on competencies essential to becoming a ‘work-ready’ doctor.

Keywords: Authentic Assessment, Interprofessional Learning, Technology-Enhanced Learning, Feedback, Workplace-Based Assessment

I. INTRODUCTION

The medical education community is rapidly embracing workplace based assessment (WBA) as a more authentic form of assessment of medical students’ clinical competence. These clinical interactions are complex, with integrated competencies observed in real-life settings. For the safety of patients, however, it is essential that medical schools have evidence that their graduates have attained sufficient standards in core skills and activities as indicated by their relevant accrediting institutions’ graduating doctor competency frameworks. This includes evidence not only of sufficient maintenance of compliance documentation, attendance in clinical settings and teaching sessions, but also the ability of the student to interact competently with a variety of patients.

Student clinical placements within medical schools are often undertaken in multiple locations with a variety of clinical supervisors. At Bond University, Australia, this process involves over 150 locations with up to 800 clinical supervisors observing, assessing and providing feedback on student performance. Previous manual, paper-based processes were inefficient, time-consuming, prone to error, and limited the opportunity for real-time feedback to students. Difficulty aggregating information made it hard to reach pass-fail decisions on student performance on rotation and delayed intervention for students requiring remediation for compliance, attendance or clinical performance.

Whilst clear and validated documentation of proficiency required of a “work-ready” graduate is often challenging to obtain, this aggregation of multiple data points to build a more complete picture of student competence is central to the concept of programmatic assessment (van der Vleuten, 2016). A portfolio of evidence with timely feedback on performance is seen as essential for demonstrating the growing development of student clinical skills.

An electronic, or ePortfolio, represents the technological evolution from paper-based to electronic clinical assessments (Garrett, McPhee, & Jackson, 2013). There are multiple ePortfolios and learning management systems available which can be used in the workplace and electronically collect in-progress assessments and accomplishments (Kinash, Wood, & McLean, 2012). Some ePortfolios also allow students to manage continuing professional development. Bond Medical Program, however, sought to develop an ePortfolio specifically designed for undergraduate medical students that could aggregate not only attendance and compliance but also competency assessment data in a meaningful way to build an accurate picture of student competency in the hospital setting.

The aim of this short communication is to describe why and how a new version of a bespoke electronic portfolio was designed and implemented.

II. METHODS

Bond University partnered with a software company with healthcare experience to develop a digital Student Clinical ePortfolio. The business requirement specification was for a fully mobile-enabled, secure, digital platform, available on any device from any location, that would allow a range of clinically relevant WBAs to be captured by clinicians “at the bedside” with the ability to provide immediate feedback to students. In addition, the software was to contain a process for students to provide evidence of compliance documentation and of attendance at compulsory teaching sessions and on rostered placement shifts. The initial plan was to replicate all paper-based processes on an electronic platform. The software was developed iteratively in response to the needs of the university using a rapid cycle improvement process. An app-based product was developed to house the clinical portfolio.

| Feature | Benefit | Replacing |
| --- | --- | --- |
| Tablet and mobile phone-enabled clinical assessment | Readily available, user-friendly, allows for opportunistic assessment; guest assessors (allied health and nursing) can participate in medical student education | Paper assessment which had to be collected and collated |
| Compliance | Simple to scan and upload by students; dashboard shows aggregate of compliance completion to ensure all required documentation has been provided | Time-consuming, laborious paper trail of compliance documentation |
| Attendance with GPS tracking | Students take responsibility for being on rotation when rostered; specific number of absences can trigger early student support processes; accurate record of which students attended compulsory classes | Paper sign-on forms |
| Dashboard – Summary data | Student and clinical staff can view aggregated summary data showing attendance, compliance, student patient logs and WBAs | Multiple individual paper WBAs that could not be aggregated |
| Personal student learning | Students can log personal patient interactions as a record of their learning on rotation | Paper patient logs |
| Learning Modules with associated procedural skills assessment | Students watch a ‘best-practice’ learning module, demonstrate their understanding by answering a short quiz and then generate an assessment for a clinical supervisor. The clinical assessor guides and observes the skill performance and then provides a ‘trust level’ competency rating. Students can repeat the assessment until competency is achieved | Skills performed in hospital setting not formally captured |
| Feedback to student | Voice recorded or typed, feedback is provided to student as soon as submitted by the assessor; timely and relevant to the performance | Verbal feedback or occasional comment on a form |
| CPD | Students can log personal continuing professional development to capture more fully their learning journey | |

Table 1. Bond ePortfolio features

In August 2017, the compliance portion of the portfolio was piloted with a single clinical year cohort of medical students and supervisors. In 2018, attendance and WBAs were conducted at the bedside of patients across all clinical years.

Delivering the project across many sites required the support of all supervisors, along with timely stakeholder engagement and change management considerations. The needs of busy clinicians were surveyed, and a low-key launch by way of an online training video was preferred by the majority, with face-to-face on-site training available upon request. Barriers to timely feedback in the busy clinical environment include the ‘opportunistic’ nature of assessment, multiple demands on clinician time and multiple students and/or trainees under supervision at any one time (Algiraigri, 2014). Feedback using the ePortfolio can be provided in the moment, either typed or voice recorded, and reviewed by students in their own time. Clinicians described it as “easy to complete on the go” and “easy to assess then and there (at the bedside)”. Table 1 describes the features and benefits of the Bond ePortfolio.

Examples of the compliance dashboard and the assessment portfolio front page are shown in the Appendix.

III. RESULTS

The new platform successfully delivered the required features through the Bond Student Clinical Portfolio. The Portfolio is accessible to both student and supervising clinicians using mobile phones or office desktop computers. Students indicate their attendance using a GPS geolocating attendance application. Compliance documents, clerked cases, reflections and other assessment components including the final in-training assessment are uploaded for supervisor assessment, whilst Mini-Clinical Evaluation Exercises are now completed by supervisors using a mobile phone at the patient bedside. All assessments are housed in one cloud-based portal, accessible to the decision-making committees.

An added advantage of this system is improved student digital literacy and self-directed learning: students become familiar with the process of self-documenting evidence of competence and skills obtained, a valuable and highly sought-after skill for a graduate doctor.

A. Workplace Based Assessment

Evaluation of the 2018 pilot demonstrated significant efficiencies in documentation collection of WBA. Previously, professional staff would have collated 2,350 components of high-stakes assessment per year to be reviewed and presented to the Board of Examiners (BoE). Faculty can now track student progress during clinical rotation, with a process in place to identify students who require additional support to succeed. Faculty receive automatic notifications for review of submitted assessment items. During meetings of decision-making committees such as the BoE, student assessment items can be viewed by the committee to verify students who are borderline or those who receive commendations.

B. Attendance

Key members of the medical programme have a ‘dashboard’ on their homepage with ‘live’ attendance data. The Professional Staff Team can run reports when required, but the platform also monitors attendance automatically: when a student reaches the nominated ‘concern’ percentage of missed sessions, it notifies the team that a support email may be required. In our experience, concerns around student well-being often present with non-attendance patterns. Supervisors in the clinical setting can now electronically track the progress of students allocated to their teams during rotations. In addition, they can identify students who require additional support in a timelier manner, helping to provide the best education experience possible.
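The attendance monitoring rule described above can be sketched as a simple threshold check. This is a hypothetical illustration, not the vendor’s implementation: the `AttendanceRecord` class, the function `students_needing_support`, the 20% threshold and the three student records are all assumptions made up for the example, since the nominated ‘concern’ percentage is not stated here.

```python
from dataclasses import dataclass

# Assumed threshold: the actual 'concern' percentage is not stated in the paper.
CONCERN_THRESHOLD = 0.20


@dataclass
class AttendanceRecord:
    student_id: str
    sessions_rostered: int
    sessions_missed: int

    @property
    def missed_fraction(self) -> float:
        """Fraction of rostered sessions missed (0.0 if nothing rostered yet)."""
        if self.sessions_rostered == 0:
            return 0.0
        return self.sessions_missed / self.sessions_rostered


def students_needing_support(records, threshold=CONCERN_THRESHOLD):
    """Return IDs of students whose missed fraction meets or exceeds the threshold."""
    return [r.student_id for r in records if r.missed_fraction >= threshold]


# Fictitious records for illustration only.
records = [
    AttendanceRecord("S001", sessions_rostered=20, sessions_missed=1),
    AttendanceRecord("S002", sessions_rostered=20, sessions_missed=5),
    AttendanceRecord("S003", sessions_rostered=10, sessions_missed=2),
]
print(students_needing_support(records))
```

Expressing the rule as a fraction of rostered sessions, rather than an absolute count of absences, keeps the trigger comparable across rotations of different lengths; a real system would also need to handle approved leave, which this sketch ignores.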

C. Feedback

The clinicians’ ability to utilise their preferred method of feedback delivery allows flexibility and improved engagement in the process. For instance, the ability to voice record was introduced, enabling students to immediately access assessor feedback. This has resulted in increased communication between students and their assessors and a very positive response from the student body.

Feedback on students’ experience of this platform has been sought through ongoing discussion with the initial pilot group, regular updates on their learning management system, and year-specific representative feedback through staff-student liaison committees. The attendance monitoring has had mixed reviews from students, who “appreciate not having to sign in on paper” but have been affected by technical issues with syncing on certain mobile devices.

IV. DISCUSSION

Our belief is that assessment within hospitals will encourage learning within hospitals. Our intention is to remove Objective Structured Clinical Examinations (OSCEs) from the final year assessment and replace them with authentic WBAs that are reliable and valid. OSCEs will continue to be used in the earlier years of the medical programme. There may be limitations, as the very nature of the hospital environment is opportunistic. Students will have multiple patient (data) interactions to support their developing portfolio with evidence of competencies achieved. Students can personalise their studies and identify areas of focus for skill development during placement, to build confidence in their work readiness as a day one doctor. Ultimately, assessment information “should tell a story about the learner” (van der Vleuten, 2016, p. 888).

This platform offers many advantages over other platforms. The selection of the software partner was a competitive process. A full needs analysis and tender process were performed, which for brevity have not been presented here. The advantages over other platforms identified at procurement were the opportunity to customise and the ability to have all the processes (compliance, attendance, placement and assessment) on one platform. Further advantages that became clear after implementation, and that were not delivered by other platforms, included the ability for students to take the portfolio into the workforce, a dashboard for attendance, and working with a partner based in health care who understood all stakeholder requirements, with an emphasis on safe patient care.

The next step will be to utilise the platform for training, progression and maintenance of competency of procedural skills before graduation. Specific procedural skills, required by accrediting bodies and relevant to the year of learning, will be assigned to the student for completion during a rotation. In addition to routine clinical practice of a skill such as intravenous cannulation, the student will work through an interactive learning module about that skill and complete a short assessment to test their understanding of the module. The system will then generate an assessment assigned to a clinical supervisor. The student will perform the skill on the patient, and the supervisor will submit the completed assessment on a ‘trust level’ scale of competency (ten Cate, 2013). If the student is not yet able to perform the skill sufficiently independently, there are opportunities to practise and repeat the assessment until competency is obtained.

V. CONCLUSION

Digitising the processes for monitoring attendance, conducting and collating compliance documentation, clinical assessment and delivering feedback at sites of clinical exposure has created significant efficiencies in the delivery of our programme. Preliminary feedback indicates that this leads to a vastly improved student experience with real-time, enhanced feedback on assessment performance and timely student remediation to assist students in becoming safe and competent ‘work-ready’ doctors. Live updates that notify of absenteeism allow for more timely support and personalised care. The aggregation of data into one personalised student clinical ePortfolio will allow decision-making bodies to make intelligent and safe pass-fail decisions based on evidence of student clinical performance.

Notes on Contributors

Carmel Tepper is the Academic Assessment Lead at Bond University. She has a special interest in exam blueprinting, item analysis and assessment technologies.

Jo Bishop is the Academic Curriculum Lead and Associate Dean, Student Affairs and Service Quality at Bond University. Jo is an expert on curriculum planning and development and has a passion for enhancing the student experience.

Kirsty Forrest is the Dean of Medicine at Bond University. She has been involved in medical educational research for 15 years and is co-author and editor of several best-selling medical textbooks including ‘Medical Education at a Glance’ and ‘Understanding Medical Education: Evidence Theory and Practice’.

Ethical Approval

Ethical approval was not required.

Acknowledgements

An e-poster on some of this work was presented at the 15th anniversary APMEC and awarded a merit.

Funding

No funding was involved for this paper.

Declaration of Interest

Uptake of the newly designed Student Clinical Portfolio by other institutions may financially benefit Bond University.

References

Algiraigri, A. H. (2014). Ten tips for receiving feedback effectively in clinical practice. Medical Education Online, 19(1), 25141. https://doi.org/10.3402/meo.v19.25141

Garrett, B. M., MacPhee, M., & Jackson, C. (2013). Evaluation of an eportfolio for the assessment of clinical competence in a baccalaureate nursing program. Nurse Education Today, 33(10), 1207-1213. https://doi.org/10.1016/j.nedt.2012.06.015

Kinash, S., Wood, K., & McLean, M. (2013, April 22). The whys and why nots of ePortfolios [Education technology publication]. Retrieved from https://educationtechnologysolutions.com/2013-/04/the-whys-and-why-nots-of-eportfolios/

ten Cate, O. (2013). Nuts and bolts of entrustable professional activities. Journal of Graduate Medical Education, 5(1), 157-158. https://doi.org/10.4300/JGME-D-12-00380.1

van der Vleuten, C. P. M. (2016). Revisiting ‘Assessing professional competence: From methods to programmes’. Medical Education, 50(9), 885-888. https://doi.org/10.1111/medu.12632

*Carmel Tepper

Faculty of Health Sciences,

Bond University,

14 University Drive, Robina QLD,

4226 Australia

E-mail: ctepper@bond.edu.au
