Education of medical students in child and adolescent psychiatry

Submitted: 14 March 2020

Accepted: 20 July 2020

Published online: 5 January, TAPS 2021, 6(1), 30-39

https://doi.org/10.29060/TAPS.2021-6-1/OA2235

Yit Shiang Lui, Abigail HY Loh, Tji Tjian Chee, Jia Ying Teng, John Chee Meng Wong & Celine Hsia Jia Wong

Department of Psychological Medicine, National University Health System, Singapore

Abstract

Introduction: A good understanding of basic child-and-adolescent psychiatry (CAP) is important for general medical practice. The undergraduate psychiatry teaching programme included various adult and CAP topics within a six-week time frame. A team of psychiatry tutors developed two new teaching formats for CAP and obtained feedback from the students about these teaching activities.

Methods: Medical students were introduced to CAP via small group teaching in two different modes. One mode was the “Clinical Vignettes Tutorial” (CVT) and the other mode “Observed Clinical Interview Tutorial” (OCIT). In CVT, tutors would discuss clinical vignettes of real patients with the students, followed by explanations about theoretical concepts and management strategies. OCIT involved simulated-patients (SPs) who assisted by acting as patients presenting with problems related to CAP, or as parents for such patients. At each session, students were given the opportunity to interview “patients” and “parents”. Feedback was given following these interviews. The students then completed surveys about the teaching methods.

Results: Students gave very positive feedback on the teaching of CAP in small groups. Almost all found these small groups enjoyable and reported that the sessions helped them apply what they had learnt. The majority agreed that the OCIT sessions increased their level of confidence in speaking with adolescents and parents. Most students also agreed that these sessions had stimulated their interest to know more about CAP.

Conclusion: Small group teaching in an interactive manner enhanced teaching effectiveness. Participants reported a greater degree of interest towards CAP, and enhanced confidence in treating youths with mental health issues as well as engaging their parents.

Keywords: Child Adolescent Psychiatry, Medical Education, Small Group, Teaching

Practice Highlights

- Psychiatric disorders are among the most common medical conditions experienced by children and adolescents, and data from the Singapore Mental Health survey conducted in 2010 had shown the prevalence rates of emotional and behavioural problems among Singaporean youth to be at 12.5%.

- Most medical students had limited exposure to Child & Adolescent Psychiatry (CAP) in their medical curriculum because only a small proportion of teaching time and clinical exposure was allocated to CAP programmes.

- This would be further compounded by the limited number of child and adolescent psychiatrists involved in teaching at medical schools and supervising clinical postings.

- This manuscript described synergistic teaching methods employed in educating medical students within the field of Child & Adolescent Psychiatry and examined the effectiveness and acceptability of CAP teaching using small-group teaching classes.

- The CAP small group interactive teaching sessions for medical students received good feedback from the majority of the participants, who reported that they could apply the knowledge and transfer the skills taught.

I. INTRODUCTION

Psychiatric disorders are among the most common medical conditions experienced by children and adolescents during their developmental years. Epidemiological data from developed countries have demonstrated a transition from acute and infectious diseases to chronic conditions, including mental health problems (Baranne & Falissard, 2018; Kyu et al., 2016; World Health Organization, 2014). Recent global health surveys have estimated the median prevalence of psychiatric disorders in children and adolescents to be about 12% (Costello, Egger, & Angold, 2005). Data from the Singapore Mental Health survey conducted in 2010 showed the prevalence of emotional and behavioural problems among Singaporean youth to be 12.5%, which was comparable with global data (Lim, Ong, Chin, & Fung, 2015). Some studies have also demonstrated that a growing proportion of disability in children and adolescents is attributable to mental health disorders. Increasingly more health resources will therefore be needed to meet these demands (Baranne & Falissard, 2018; Erskine et al., 2015), largely in the form of services focusing on prevention, identification, and management of child and adolescent psychiatric disorders (Baranne & Falissard, 2018; Costello et al., 2005; Erskine et al., 2015). There is hence a need to fill the gap created by the escalating mental health needs of children and adolescents. Delays in accessing prompt and adequate assessment may incur socio-economic costs and bring about further psychiatric comorbidities.

Increasing the number of trained child and adolescent psychiatrists may be necessary to meet the current and projected needs in youth mental health (Baranne & Falissard, 2018; Breton, Plante, & St-Georges, 2005; Thomas & Holzer, 2006). Globally, as well as in Singapore, the number of such specialists has fallen short of demand, and increased recruitment is needed to address this workforce shortage (Breton et al., 2005; Lim et al., 2015; Thomas & Holzer, 2006). Hence, there have been moves in recent years to increase exposure to, and interest in, child and adolescent psychiatry (CAP) among medical students (Hunt, Barrett, Grapentine, Liguori, & Trivedi, 2008; Malloy, Hollar, & Lindsey, 2008; Plan, 2002; Thomas & Holzer, 2006). Most medical students had limited exposure to CAP in their medical curriculum because only a small proportion of teaching time and clinical exposure was allocated to CAP programmes. This was further compounded by the limited number of child and adolescent psychiatrists involved in teaching at medical schools and supervising clinical postings (Dingle, 2010; Lim et al., 2015; Plan, 2002; Sawyer & Giesen, 2007). It remains important, however, that medical students are taught CAP, given the burden of mental health disorders in our youths today (Dingle, 2010; Hunt et al., 2008; Kaplan & Lake, 2008; Sawyer & Giesen, 2007; Thomas & Holzer, 2006). Other specialist practitioners such as family medicine specialists and paediatricians also frequently manage youths with psychiatric problems. An understanding of early childhood development and of the critical milestones in childhood and adolescence is essential in any specialty that interacts with and manages children as part of routine practice (Hunt et al., 2008; Plan, 2002). This forms the basis for teaching CAP in medical schools as part of regular and wider curricula (Dingle, 2010; Hunt et al., 2008; Kaplan & Lake, 2008; Malloy et al., 2008; Plan, 2002; Sawyer & Giesen, 2007).

The current medical school pedagogy may have underestimated the salience of teaching CAP in the undergraduate curriculum. This has resulted in much less time, attention, and teaching resources being allocated to CAP. Curriculum designers may also have severely under-appreciated the transferability of the skill set, given the inherent challenges in undertaking interviews with children and their parents.

A. The Curriculum and Teaching Methods

At the Yong Loo Lin School of Medicine, National University of Singapore, CAP teaching is embedded within a six-week General Psychiatry clerkship for Fourth-Year medical students. CAP teaching consists of 20 hours of centralised teaching at the affiliated National University Hospital, together with clinical attachments to the outpatient child psychiatry clinics in other restructured hospitals. The 20 hours of teaching include online lectures made accessible through the students’ Intranet, didactic lectures delivered in a large group setting by clinical tutors, and small group teaching classes. In this paper, the authors examined the effectiveness and acceptability of CAP teaching using these small group teaching classes.

A comprehensive CAP education should cover the following domains: emotional symptomatology (e.g. depression, anxiety, enuresis); conduct and disruptive behavioural problems (e.g. attention deficit disorder, conduct disorder, bullying); developmental delays (e.g. specific learning, speech or autistic spectrum); and relationship difficulties, personal habits and injuries (e.g. abuse, suicide, digital overuse). The knowledge component includes normal child developmental psychology as well as the assessment and management of common CAP conditions. The practice component imparts CAP interview skills and skills in counselling young parents.

Small group teaching sessions consisted of several components in their general pedagogic approach. The aim of these sessions was to cover the teaching of core knowledge and practices in common CAP cases, as well as training of interview skills required in communicating with children, adolescents, and their parents. Each session would start off with a series of lectures on four major domains of CAP: (1) emotional symptoms, (2) conduct and disruptive behavioural problems, (3) developmental delays, and (4) relationship difficulties, personal habits, and injuries. The lectures would be followed by both the “Clinical Vignettes Tutorial” (CVT) and the “Observed Clinical Interview Tutorial” (OCIT). The teaching sessions were structured in this way because time constraints in the undergraduate curriculum precluded comprehensive clinical exposure. A combination of didactics and simulated practice was designed to maximise the transfer of the necessary theoretical knowledge and practical skill set to the students.

In the CVT, tutors would discuss clinical vignettes derived from real-life patients, together with their underpinning theoretical concepts, for about 2½ hours. This teaching activity covered the principles of psychopharmacology in youths, as well as three distinct conditions: a) Adolescent Depression with self-harm behaviour, b) Post-traumatic Stress Disorder in an adolescent and c) Adjustment Disorder in an adolescent with chronic medical illnesses. The anonymised vignettes were based on actual patient profiles. During each interactive discussion of these clinical presentations, tutors encouraged students to raise critical questions as pertinent portions of the history unfolded, to enhance their analytic thinking about the cases and to help them remember these teachable moments.

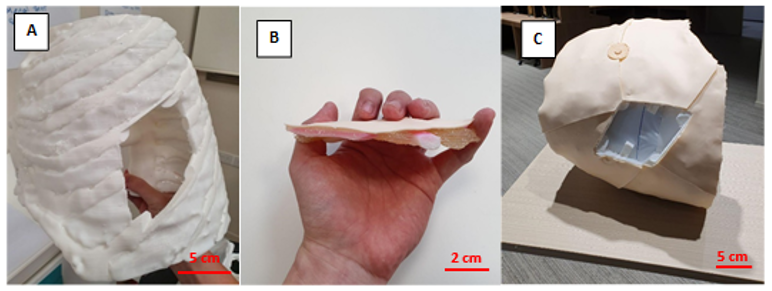

The second teaching activity, the OCIT, would take place after a second series of lectures on other CAP conditions had been conducted. During this three-hour OCIT, students would be provided opportunities to interview simulated patients (SPs). Each group would comprise 12 to 18 students led by one clinical tutor.

The four pre-prepared clinical scenarios included an adolescent with Anorexia Nervosa; an adolescent with Social Anxiety Disorder; a parent of a child with Attention Deficit Hyperactivity Disorder; and a parent of a child with features of Autism Spectrum Disorder. Each scenario included a case template comprising an interesting title, the learning and assessment objective, the student’s task, and the script for the SP, complete with an opening statement, standard statements and character presentation (behaviour, affect and mannerism).

Students would take turns to interview the SPs in an attempt to gather accurate and adequate clinical information and arrive at provisional diagnoses. The students were then tasked to discuss the possible differential diagnoses, to provide treatment options, and to formulate prognoses of the conditions with the SPs. The SPs were in turn invited to comment on the interactions they had with the students. The clinical tutors would also conduct follow-up discussions to provide feedback to the students on aspects of their interviewing techniques and knowledge of the clinical conditions. The discussions also focused on the differential diagnoses and management strategies for various conditions.

II. METHODS

Paper-and-pen self-report surveys for both the CVT and OCIT sessions were administered to evaluate the student participants’ learning, experience, and interest in CAP (Appendix A). Student participants were asked to grade responses on a five-point Likert scale (1 = Strongly disagree, 2 = Disagree, 3 = Neutral, 4 = Agree and 5 = Strongly agree), in relation to statements such as “I found the session enjoyable” and “The case scenarios were relevant”. The surveys were completed and submitted anonymously at the end of each teaching session. The surveys also included a free-text segment for open feedback, in which student participants were asked to list “The best things about the session” and “Some ways which I think can make the sessions better”. The surveys utilised for each teaching session differed slightly owing to varied content validity of the teaching methods, but the questions were largely identical. Implied informed consent was provided by the participating students during the surveys.

For the current study, the authors analysed data from the surveys completed by the Fourth-Year undergraduate medical students who rotated through the six-week Psychiatry clerkship during the five-month period between July and November 2017.

Descriptive statistics were used to analyse the findings of the survey.
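As an illustration only, the kind of descriptive summary reported in the Results (the number and percentage of respondents choosing “Agree” or “Strongly agree” for a statement) could be computed along the following lines. This is a minimal sketch, not the authors’ actual analysis: the function name `summarise_item`, the treatment of skipped items as missing, and the example responses are all assumptions made for demonstration.

```python
# Five-point Likert coding as described in the Methods section.
LIKERT_LABELS = {
    1: "Strongly disagree",
    2: "Disagree",
    3: "Neutral",
    4: "Agree",
    5: "Strongly agree",
}


def summarise_item(responses):
    """Return (n_agree, n_valid, percent_agree) for one survey statement.

    Anything that is not a code from 1 to 5 (e.g. None for a skipped item)
    is treated as missing and left out of the denominator. This handling
    of missing data is an assumption, not taken from the study.
    """
    valid = [r for r in responses if r in LIKERT_LABELS]
    n_agree = sum(1 for r in valid if r >= 4)  # 4 = Agree, 5 = Strongly agree
    percent = round(100 * n_agree / len(valid), 1) if valid else None
    return n_agree, len(valid), percent


# Hypothetical responses to a single statement (not study data).
example_responses = [5, 4, 4, 3, 2, 5, None, 4, 1, 4]
n_agree, n_valid, percent_agree = summarise_item(example_responses)
print(f"{n_agree} of {n_valid} respondents agreed ({percent_agree}%)")
```

Excluding skipped items from the denominator means that the number of valid responses can differ from one statement to the next, so the N and the percentage should always be read together.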

III. RESULTS

A total of 289 students completed the survey between July 2017 and November 2017. With regard to the CVT, the majority of the students agreed or strongly agreed that the sessions were enjoyable (90.7%) and beneficial to their overall learning (90.7%; Table 1). They reported that the session had helped them to apply what they had learnt (95.8%), and that the case scenarios were relevant (98.2%).

| No. | Survey Statement | Participants Who Indicated “Agree” or “Strongly Agree” (N) | Participants Who Indicated “Agree” or “Strongly Agree” (%) |
|---|---|---|---|
| 1 | “I found the session enjoyable…” | 262 | 90.7 |
| 2 | “The session helped me to apply what I have learnt…” | 277 | 95.8 |
| 3 | “The case scenarios were relevant…” | 284 | 98.2 |
| 4 | “My clinical tutor was effective in facilitating the session…” | 281 | 97.2 |
| 5 | “The session stimulated my interest in Child and Adolescent Psychiatry…” | 247 | 85.5 |
| 6 | “There was sufficient time for each section…” | 272 | 94.1 |
| 7 | “Overall, I found the session beneficial…” | 262 | 90.7 |

Table 1. Survey results for the Clinical Vignettes Tutorial (CVT)

For the OCIT, most of the survey respondents agreed or strongly agreed that the activity had helped them to learn psychiatric interviewing skills (97.7%) and had increased their confidence in speaking with adolescents or parents (95.1%) (Table 2). Most of the students who responded to the survey reported that the simulated patients’ performances were realistic (97.7%). A large proportion of the respondents indicated that the teaching session had met the learning objectives (98.5%).

| No. | Survey Statement | Participants Who Indicated “Agree” or “Strongly Agree” (N) | Participants Who Indicated “Agree” or “Strongly Agree” (%) |
|---|---|---|---|
| 1 | “The session helped me to learn psychiatric interviewing skills…” | 260 | 97.7 |
| 2 | “The session increased my confidence in speaking to adolescents/parents…” | 253 | 95.1 |
| 3 | “The session helped me to apply what I have learnt…” | 248 | 96.9 |
| 4 | “The session stimulated my interest in Child and Adolescent Psychiatry…” | 219 | 83.3 |
| 5 | “My clinical tutor provided useful feedback…” | 259 | 97.3 |
| 6 | “The simulated patients’ performances felt realistic…” | 258 | 97.7 |
| 7 | “There was sufficient time for each case…” | 256 | 95.9 |
| 8 | “Overall, the session met the learning objectives…” | 257 | 98.5 |

Table 2. Survey results for the Observed Clinical Interview Tutorial (OCIT)

With regard to the effectiveness of these teaching activities in stimulating the students’ interest towards CAP, 85.5% of the respondents indicated that the CVT had done so (Table 1), while a slightly lower proportion (83.3%) reported that the OCIT stimulated their interest in CAP (Table 2).

The majority of the respondents indicated that the clinical tutors were effective in facilitating the CVT (97.2%). Similarly, most of the respondents reported that the clinical tutors provided useful feedback during the OCIT (97.3%).

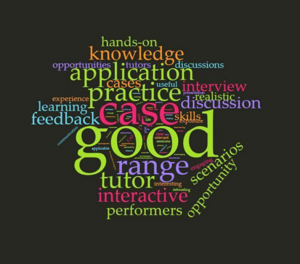

Entries in the free-text feedback section about what the students liked best about the CVT and OCIT included comments such as “good for application”, session allowed for “practice of interviewing skills” and “helped consolidate knowledge” (Figure 1). Several students liked the “interactive” nature of the interviews and discussions, as well as “feedback” from tutors, which also helped in their learning.

Figure 1. Open comment feedback to the survey question “The best things about the sessions were…”

As areas for further improvement, students asked for a “shorter” duration for each teaching session (Figure 2). This was likely due to the full-day nature of the CAP teaching programme, which could last eight hours in a day with a one-hour lunch break. Others shared that they preferred “smaller” groups, so that students could get more chances to practise interviewing the SPs, and “more time for discussion”, to allow more in-depth feedback as well as discussion of each clinical condition. Some students remarked that Objective Structured Clinical Examination (OSCE) styled marking schemes could help enhance their learning experiences, as this method might be more structured than an open discussion.

Figure 2. Open comment feedback to the survey question “Some ways which I think can make the sessions better are…”

IV. DISCUSSION

This study evaluated the effectiveness and acceptability of small group tutorials for CAP conditions, which are packaged inseparably as part of a medical undergraduate psychiatry teaching programme. CVT and OCIT are designed to complement each other in the curriculum. The surveys used to compile the medical undergraduates’ responses focused on their learning experience with the CAP curriculum. The effectiveness of the two teaching methods, CVT and OCIT, was judged from the transferability of the requisite knowledge base and clinical skills, as well as from the availability of opportunities for participants to practise interviewing. The survey responses were also used to gauge the performance of the SPs and the usefulness of the clinical tutors. In addition, the extent to which the teaching sessions generated interest towards CAP was evaluated.

The fourth-year medical students gave good feedback for the small group teaching sessions. They reported that the CVT sessions were enjoyable and beneficial, and had allowed them to apply what they had learnt. For the OCIT, most of the respondents indicated that the session had helped them to learn psychiatric interviewing skills, increased their level of confidence in speaking with adolescents and parents, and helped them to apply what they had learnt in clinical scenarios. There is a discernible difference between the feedback for CVT and OCIT. The students’ feedback for CVT affirmed the applicability of the knowledge content of CAP, whereas the feedback for OCIT affirmed the transferability of interviewing skills in terms of confidence level.

In the open feedback segment of the survey, respondents reported that they had particularly liked the interactive and hands-on aspect of the session, as well as the frequent opportunities for evaluation, feedback, and practice. However, they highlighted that certain factors, such as the size of the groups, the length of the sessions and the random allocation of conditions, could be improved to enhance their learning experience. Overall, their feedback still indicated positive experiences in these small group sessions, and this translated to an increased knowledge base, a heightened level of confidence, and greater interest in CAP among the student participants.

This study’s limitations included the challenges inherent in attempting to accurately assess the students’ genuine experiences and feelings towards the sessions; possible biases (recall bias and the Hawthorne effect) in responding to questionnaires; and the lack of correlation with actual performance in real-world settings. Furthermore, it remains unanswered whether such sessions truly generate interest that might lead to the pursuit of a career in CAP. It is also uncertain whether changing the teaching methods within the curriculum could inspire more medical students and young doctors to consider specialising in this field and raise the number of residency applications. The data from our study did appear to be consistent with findings from other CAP clinical teaching programmes. In these programmes, more exposure to CAP and increased clinical opportunities correlated with changes in impressions towards and appreciation of clinical interactions with children, increased positive views of CAP as part of medical practice, and heightened interest in CAP as a field of medical specialty (Dingle, 2010; Kaplan & Lake, 2008; Malloy et al., 2008; Martin, Bennett, & Pitale, 2005).

In the current undergraduate medical curriculum, the amount of time allocated to teaching CAP is relatively small compared to other topics. Child and adolescent psychiatric cases can be particularly complex and their management demands sensitive handling, which may pose challenges in real-world practice. Youth patients and their parents may value privacy and sometimes do not allow medical students to be involved in initial assessments and subsequent follow-up consultations. These factors collectively pose unique challenges to teaching and equipping medical students with the skills and knowledge to address child and adolescent mental health disorders. While clinical contact and patient experience would be preferred and desirable for training, it may be impractical given the various constraints mentioned above (Kaplan & Lake, 2008). Hence, other creative methods of “exposure” to CAP patients should be incorporated into teaching rotations to offer medical students the opportunities to expand this knowledge base, apply the knowledge to practice scenarios, and further their clinical and communication skills. Small group sessions such as the CVTs and OCITs are teaching activities that can be used to overcome some of these challenges.

Our study showed that small group interactive teaching is effective in helping medical students to apply what they have learnt about CAP, increase their confidence in speaking to adolescents as patients, and learn psychiatric interviewing skills. It also exposes them to a wide range of relevant CAP cases to which they can apply their theoretical knowledge and practise interview and management techniques. Furthermore, we have found that all this can be adequately achieved in a tailored environment that is conducive to learning. The collective constructive feedback has been used to further improve the content and style of delivery for future batches. A comparison of CVT and OCIT as individual teaching methods has also been conceptualised for future scholarly research.

V. CONCLUSION

The CAP small group interactive teaching sessions for medical students received good feedback from the majority of the participants. This positive validation will spur the authors on to explore further how this pedagogy could help spark interest in Child and Adolescent Psychiatry among medical students, given the shortfall of child and adolescent psychiatrists worldwide.

Notes on Contributors

AHYL analysed and interpreted data. CHJW, together with TJY and JCMW, planned and conducted the child psychiatry small group teaching and collected feedback data from the medical students. TJY developed the feedback questionnaire. YSL, together with AHYL, CHJW and TTC, planned and wrote the manuscript. All authors read and approved the final manuscript.

Ethical Approval

NHG DSRB reference number 2019/00431 for exemption.

Data Availability

Datasets generated and/or analysed during the current study are available from the corresponding author on reasonable request.

Acknowledgements

The authors wish to thank the team from the Centre for Healthcare Simulation, Yong Loo Lin School of Medicine, National University of Singapore for the invaluable support in recruiting and training the simulated patients for the CAP teaching programme. We appreciate the participation of the simulated patients and medical students in the teaching programme.

Funding

There is no funding for this paper.

Declaration of Interest

The authors declare that they have no competing interests and do not foresee any in the future. We are not aware of any issues relating to journal policies in submitting this manuscript. All the authors have approved the manuscript for submission.

References

Baranne, M. L., & Falissard, B. (2018). Global burden of mental disorders among children aged 5–14 years. Child and Adolescent Psychiatry and Mental Health, 12(1), 19.

Breton, J. J., Plante, M. A., & St-Georges, M. (2005). Challenges facing child psychiatry in Quebec at the dawn of the 21st Century. The Canadian Journal of Psychiatry, 50(4), 203-212.

Costello, E. J., Egger, H., & Angold, A. (2005). 10-year research update review: The epidemiology of child and adolescent psychiatric disorders: I. Methods and public health burden. Journal of the American Academy of Child & Adolescent Psychiatry, 44(10), 972-986.

Dingle, A. D. (2010). Child psychiatry: What are we teaching medical students? Academic Psychiatry, 34(3), 175-182.

Erskine, H. E., Moffitt, T. E., Copeland, W. E., Costello, E. J., Ferrari, A. J., Patton, G., … & Scott, J. G. (2015). A heavy burden on young minds: The global burden of mental and substance use disorders in children and youth. Psychological Medicine, 45(7), 1551-1563.

Hunt, J., Barrett, R., Grapentine, W. L., Liguori, G., & Trivedi, H. K. (2008). Exposure to child and adolescent psychiatry for medical students: Are there optimal “teaching perspectives”? Academic Psychiatry, 32(5), 357-361.

Kaplan, J. S., & Lake, M. (2008). Exposing medical students to child and adolescent psychiatry: A case-based seminar. Academic Psychiatry, 32(5), 362-365.

Kyu, H. H., Pinho, C., Wagner, J. A., Brown, J. C., Bertozzi-Villa, A., Charlson, F. J., … & Fitzmaurice, C. (2016). Global and national burden of diseases and injuries among children and adolescents between 1990 and 2013: Findings from the global burden of disease 2013 study. JAMA Pediatrics, 170(3), 267-287.

Lim, C. G., Ong, S. H., Chin, C. H., & Fung, D. S. S. (2015). Child and adolescent psychiatry services in Singapore. Child and Adolescent Psychiatry and Mental Health, 9(1), 7.

Malloy, E., Hollar, D., & Lindsey, B. A. (2008). Increasing interest in child and adolescent psychiatry in the third-year clerkship: Results from a post-clerkship survey. Academic Psychiatry, 32(5), 350-356.

Martin, V. L., Bennett, D. S., & Pitale, M. (2005). Medical students’ perceptions of child psychiatry: Pre-and post-psychiatry clerkship. Academic Psychiatry, 29(4), 362-367.

Plan, S. (2002). A Call to Action: Children Need Our Help! American Academy of Child & Adolescent Psychiatry. Retrieved from https://www.aacap.org/app_themes/aacap/docs/resources_for_primary_care/workforce_issues/AACAP_Call_to_Action.pdf

Sawyer, M., & Giesen, F. (2007). Undergraduate teaching of child and adolescent psychiatry in Australia: Survey of current practice. Australian & New Zealand Journal of Psychiatry, 41(8), 675-681.

Thomas, C. R., & Holzer, C. E., 3rd (2006). The continuing shortage of child and adolescent psychiatrists. Journal of the American Academy of Child & Adolescent Psychiatry, 45(9), 1023-1031.

World Health Organization. (2014). Adolescent health epidemiology. Retrieved from http://www.who.int/maternal_child_adolescent/epidemiology/adolescence/en/

*Yit Shiang Lui

1E Kent Ridge Road

Tower Block, Level 9,

Singapore 119228

Tel: 6772 6331

Email address: yit_shiang_lui@nuhs.edu.sg

Submitted: 14 February 2020

Accepted: 1 July 2020

Published online: 5 January, TAPS 2021, 6(1), 40-48

https://doi.org/10.29060/TAPS.2021-6-1/OA2227

Shirley Beng Suat Ooi1,2, Clement Woon Teck Tan3,4 & Janneke M. Frambach5

1Emergency Medicine Department, National University Hospital, National University Health System, Singapore; 2Department of Surgery, Yong Loo Lin School of Medicine, National University of Singapore, Singapore; 3Department of Ophthalmology, National University Hospital, National University Health System, Singapore; 4Yong Loo Lin School of Medicine, National University of Singapore, Singapore; 5School of Health Professions Education, Faculty of Health, Medicine and Life Sciences, Maastricht University, The Netherlands

Abstract

Introduction: Almost all published literature on effective clinical teachers is from Western countries, and only two studies compared medical students with residents. Hence, this study aims to explore the perceived characteristics of effective clinical teachers among medical students compared to residents graduating from an Asian medical school, and specifically whether there are differences between cognitive and non-cognitive domain skills, to inform faculty development.

Methods: This qualitative study was conducted at the National University Health System (NUHS), Singapore involving six final year medical students at the National University of Singapore, and six residents from the NUHS Residency programme. Analysis of the semi-structured one-on-one interviews was done using a 3-step approach based on principles of Grounded Theory.

Results: There are differences in the perceptions of effective clinical teachers between medical students and residents. Medical students valued a more didactic spoon-feeding type of teacher in their earlier clinical years. However, final year medical students and residents valued feedback and role-modelling at clinical practice. The top two characteristics of approachability and passion for teaching are in the non-cognitive domains. These seem foundational and lead to the acquisition of effective teaching skills such as the ability to simplify complex concepts and to create a conducive learning environment. Being exam-oriented is a new characteristic not identified before in “Western-dominated” publications.

Conclusion: The results of this study will help to inform educators of the differences in a learner’s needs at different stages of their clinical development and to potentially adapt their teaching styles.

Keywords: Clinical Teachers, Medical Students, Residents, Cognitive/Non-Cognitive, Asian Healthcare, Faculty Development

Practice Highlights

- Approachability and teaching passion are foundational non-cognitive skills in effective clinical teachers.

- These foundational skills are more important for undergraduate than postgraduate teaching.

- Procedural residents can accept less ‘warm’ teachers if they can learn advanced clinical skills.

- Medical students value didactic ‘spoon-feeding’ type of teachers in their earlier clinical years.

- Final year medical students and residents value feedback and role-modelling at clinical practice.

I. INTRODUCTION

“The transformation of our students requires the engagement of innovative and outstanding clinician-teachers who not only supervise students in their development of technical skills and applied knowledge but also serve as role models of the values and attributes of the profession and of the life of a professional” (Sutkin, Wagner, Harris, & Schiffer, 2008). This statement nicely encapsulates the very important role played by outstanding clinical teachers in helping students to ultimately become professionals with the attributes our healthcare system desires. Previous research has extensively investigated characteristics of effective clinical teachers to inform faculty development (e.g. Branch, Osterberg, & Weil, 2015; Hatem et al., 2011; Hillard, 1990; Kernan, Lee, Stone, Freudigman, & O’Connor, 2000; Paukert & Richards, 2000; Singh et al., 2013; Sutkin et al., 2008; White & Anderson, 1995). However, despite the large body of existing research on effective clinical teaching, two issues related to the needs of different groups of learners need further investigation to enable more tailored faculty development.

First, effective clinical teaching may look different in undergraduate as compared with postgraduate education. In many healthcare institutions, clinical teachers are expected to teach across the medical education continuum, i.e., undergraduate medical students, graduate doctors in training, and continuing medical education, and teaching abilities are a necessary prerequisite in an academic environment (Hatem et al., 2011). Based on the conceptual framework of constructivism (Bednar, Cunningham, Duffy, & Perry, 1991), a theory which equates learning with creating meaning from experience or contextual learning, Jonassen (1991) argues that constructive learning environments are most effective for acquiring knowledge in the advanced stage of knowledge, the stage between introductory and expert. According to Jonassen (1991), the initial or introductory stage of knowledge acquisition occurs when learners have very little directly transferable prior knowledge about a skill or content area. In this stage, knowledge is best acquired through more objectivistic approaches which can be described as ‘spoon-feeding’. Medical students in general would fit into this introductory stage, in varying degrees depending on their seniority and individual progress in learning. Jonassen’s (1991) second stage is advanced knowledge acquisition, where the domains are ill-structured and more knowledge-based. This is in contrast to his third and final stage, that of experts, who require very little instructional support and are able to deal with elaborate structures and schematic patterns and to see the interconnectedness in knowledge through experience. Junior doctors in training would fit into the second or advanced stage of learning. Constructivist teachers help students construct knowledge to become active learners rather than passive recipients of knowledge from teachers or textbooks.

In view of this constructivist framework, it appears logical to postulate that as medical students mature to become practising doctors, their perceptions of effective clinical teachers may change from one who ‘spoon-feeds’ them with medical knowledge to one who encourages them to actively construct new meaning as they become clinically more experienced and have to deal with complex and ill-defined problems. Low, Khoo, Kuan, and Ooi (2020) showed that although the top four characteristics of effective medical teachers are consistent across all 5 years of medical school, characteristics that facilitate active learner participation are emphasised in the clinical years, consistent with constructivist learning theory. However, there is a paucity of research comparing undergraduates’ and postgraduates’ perceptions of effective clinical teachers. Such research is needed to plan more focused faculty development that addresses the attributes learners look for in their clinical teachers.

The second issue relates to potential differences in the clinical teaching role between Asian and Western settings. In Western studies, as noted above, effective clinical teachers are encouraged to stimulate students’ intellectual curiosity leading to more self-directed learning (Hillard, 1990; Kernan et al., 2000; White & Anderson, 1995). In contrast, feelings of uncertainty about the independence required in self-directed learning, a focus on tradition that respects ‘old ways’, hierarchy expecting ‘truths’ to come from persons of higher status, and an achievement orientation to pass and excel in examinations have been identified as more prominent in non-Western than in Western cultures (Frambach, Driessen, Chan, & van der Vleuten, 2012). This is despite the recent introduction of more student-centred education methods. In Singapore, for example, there is a move in the Yong Loo Lin School of Medicine (YLLSoM) to try to embed students into healthcare teams (Jacobs & Samarasekera, 2012) and implement newer methods of learning such as the flipped classroom. However, many teachers still employ traditional methods of lectures and small group tutorials focused on exam preparation. A comprehensive review of 68 articles on effective clinical teaching (Sutkin et al., 2008) included only one article that reported research from a non-Western setting (Elzubeir & Rizk, 2001). In this article, originating from the United Arab Emirates, there is no discussion on whether there is a difference in the perception of a role model between medical students in Asian countries compared to the West (Elzubeir & Rizk, 2001). Another study conducted in Asia showed differences in the perceptions of first-year and fifth-year medical students in Singapore on what makes an effective medical teacher (Kua, Voon, Tan, & Goh, 2006). More first-year students preferred handouts in contrast to fifth-year students who were less reliant on ‘spoon-feeding’.

Research on effective clinical teaching is growing in the Asian setting (Ciraj et al., 2013; Haider, Snead, & Bari, 2016; Kikukawa et al., 2013; Mohan & Chia, 2017; Nishiya et al., 2019; Venkataramani et al., 2016), though the literature remains sparse compared with studies conducted in the West, and no study has directly compared medical students with residents.

Another issue that deserves further attention is the role of non-cognitive domain skills in clinical teaching. Sutkin et al.’s (2008) review study described three main categories of characteristics of good clinical teachers: 1) physician characteristics, 2) teacher characteristics, and 3) human characteristics (Table 1). Approximately two-thirds of the characteristics were in non-cognitive domains (such as those involving relationship skills, emotional states, and personality types), and one-third in cognitive domains (such as those involving reasoning, memory, judgment, perception, and procedural skills). The article noted that cognitive abilities can be taught and learned, in contrast to non-cognitive attributes which are more difficult to develop and teach. Faculty development programmes currently often focus on traditional cognitive skills, such as curriculum design, large-group teaching, and assessment of learners (Searle, Hatem, Perkowski, & Wilkerson, 2006). In contrast, if non-cognitive domains are more important in contributing to outstanding teaching, they might need greater emphasis in the curricula of these workshops. The good news is that according to Schiffer, Rao, and Fogel (2003), non-cognitive behaviours are both measurable and alterable. Most of them have underlying neural networks which are entering our sphere of understanding. Hence non-cognitive skills, although much more challenging to develop than cognitive skills, have the potential to be developed. It is not clear whether medical students and residents differ in the balance of cognitive and non-cognitive domain skills they attribute to an effective clinical teacher.

The aim of this qualitative study is to explore the perceived characteristics of an effective clinical teacher among medical students compared to residents graduating from an Asian medical school and whether there is a difference regarding cognitive and non-cognitive domain skills.

II. METHODS

A. Participants

The participants consisted of final/fifth year medical students (M5s) from the Yong Loo Lin School of Medicine (YLLSoM), National University of Singapore (NUS) who were posted to the National University Hospital (NUH) for their student internship posting in 2016. To ensure sufficient working experience, National University Health System (NUHS) residents who had graduated from the YLLSoM and had recently completed their intermediate specialty examinations were recruited. These were third to fifth year residents in different programmes. Maximal variation sampling was used to include M5s and residents of both genders and different ethnic groups and, for residents only, different specialties.

B. Design

A pragmatic qualitative research design (Savin-Baden & Howell Major, 2013) was used to get the participants to reflect on their own learning journey affecting their perceptions of the qualities that make an effective clinical teacher from the time they were first exposed to clinical medicine in year 3 (M3) of medical school to final year (M5) for the students, and to residency for the residents.

C. Data Collection

Semi-structured one-on-one interviews using open-ended questions were conducted. M5s doing their student internship programme in the various departments in NUH were invited by e-mail to participate in this study. To ensure maximal variation sampling, M5s of both genders and, as far as possible, different ethnic groups were recruited. Residents were invited through the Graduate Medicine Education Office in NUH. From those who responded voluntarily, residents of both genders, from different ethnic groups and from different specialties (both procedural and non-procedural) were selected, because residents from procedural specialties may perceive effective clinical teachers differently from those in non-procedural specialties.

Interviewees gave written consent after reading the Participant Information Sheet. Each interview was conducted in a quiet room, was audiotaped and lasted between 30 and 45 minutes.

D. Data Analysis

The audiotaped interviews were transcribed. All 12 interviews were conducted by the principal investigator (PI) (SO). Although the formal coding and analysis were done only after all 12 interviews had been transcribed, the PI took note of emerging themes during the interviews and decided to end the interviews once no substantial new themes had emerged.

In the first phase, open coding, initial categories of characteristics of effective clinical teachers were formed by segmenting the information and assigning open codes. In the second coding phase, broader categories were developed by grouping conceptually related ideas. The third phase involved selective coding, in which the individual categories were checked against Sutkin et al.’s (2008) categories of teacher, physician and human characteristics and classified as belonging to the cognitive or non-cognitive domain (Table 1). Related categories were then brought together according to Sutkin et al.’s (2008) classification.
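The bookkeeping behind the third phase can be sketched in a few lines of Python. This is only an illustration and not the authors' procedure: the segment list is invented, the names `SUTKIN_MAP` and `tally` are hypothetical, and the three mappings shown are copied from Table 2. The sketch assumes each participant is counted at most once per characteristic, which is how the per-group counts in Table 2 appear to be reported.

```python
from collections import defaultdict

# Broader category -> (Sutkin et al. codes, domain), as listed in Table 2.
# An empty code tuple marks a characteristic with no Sutkin equivalent.
SUTKIN_MAP = {
    "approachability": (("T3", "H4"), "non-cognitive"),
    "passion for teaching": (("T2",), "non-cognitive"),
    "exam-oriented": ((), "cognitive"),
}

# Invented example segments, NOT study data: (participant, group, category).
segments = [
    ("MS1", "MS", "approachability"),
    ("MS1", "MS", "exam-oriented"),
    ("MS1", "MS", "approachability"),  # repeat mention by the same participant
    ("R1", "R", "approachability"),
    ("R1", "R", "passion for teaching"),
    ("R2", "R", "passion for teaching"),
]


def tally(coded_segments):
    """Count distinct participants per category, split by group (MS or R)."""
    seen = defaultdict(set)
    for participant, group, category in coded_segments:
        seen[(category, group)].add(participant)

    table = {}
    for category, (codes, domain) in SUTKIN_MAP.items():
        ms = len(seen[(category, "MS")])
        r = len(seen[(category, "R")])
        table[category] = {
            "total": ms + r,
            "MS": ms,
            "R": r,
            "sutkin": codes,
            "domain": domain,
            "new": not codes,  # True when no Sutkin code applies
        }
    return table


summary = tally(segments)
# Print rows from most to least frequently identified characteristic.
for name, row in sorted(summary.items(), key=lambda item: -item[1]["total"]):
    print(name, row)
```

Counting distinct participants rather than raw mentions keeps one talkative interviewee from inflating a characteristic, and the `new` flag separates characteristics that fall outside the existing classification.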

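| Category | Code | Characteristic |
|---|---|---|
| Physician Characteristics | P1 | Demonstrates medical/clinical knowledge |
| Physician Characteristics | P2 | Demonstrates clinical and technical skills/competence, clinical reasoning |
| Physician Characteristics | P3 | Shows enthusiasm for medicine |
| Physician Characteristics | P4 | A close doctor-patient relationship |
| Physician Characteristics | P5 | Exhibits professionalism |
| Physician Characteristics | P6 | Is scholarly (does research) |
| Physician Characteristics | P7 | Values teamwork and has collegial skills |
| Physician Characteristics | P8 | Is experienced |
| Physician Characteristics | P9 | Demonstrates skills in leadership and/or administration |
| Physician Characteristics | P10 | Accepts uncertainty in medicine |
| Physician Characteristics | P11 | Others |
| Teacher Characteristics | T1 | Maintains positive relationships with students and a supportive learning environment |
| Teacher Characteristics | T2 | Demonstrates enthusiasm for teaching |
| Teacher Characteristics | T3 | Is accessible/available to students |
| Teacher Characteristics | T4 | Provides effective explanations, answers to questions, and demonstrations |
| Teacher Characteristics | T5 | Provides feedback and formative assessment |
| Teacher Characteristics | T6 | Is organized and communicates objectives |
| Teacher Characteristics | T7 | Demonstrates knowledge of teaching skills, methods, principles, and their application |
| Teacher Characteristics | T8 | Stimulates students’ interest in learning and/or subject |
| Teacher Characteristics | T9 | Stimulates or inspires trainees’ thinking |
| Teacher Characteristics | T10 | Encourages trainees’ active involvement in clinical work |
| Teacher Characteristics | T11 | Provides individual attention to students |
| Teacher Characteristics | T12 | Demonstrates commitment to improvement of teaching |
| Teacher Characteristics | T13 | Actively involves students |
| Teacher Characteristics | T14 | Demonstrates learner assessment/evaluation skills |
| Teacher Characteristics | T15 | Uses questioning skills |
| Teacher Characteristics | T16 | Stimulates trainees’ reflective practice and assessment |
| Teacher Characteristics | T17 | Teaches professionalism |
| Teacher Characteristics | T18 | Is dynamic, enthusiastic, and engaging |
| Teacher Characteristics | T19 | Emphasizes observation |
| Teacher Characteristics | T20 | Others |
| Human Characteristics | H1 | Communication skills |
| Human Characteristics | H2 | Acts as role model |
| Human Characteristics | H3 | Is an enthusiastic person |
| Human Characteristics | H4 | Is personable |
| Human Characteristics | H5 | Is compassionate/empathetic |
| Human Characteristics | H6 | Respect others |
| Human Characteristics | H7 | Displays honesty |
| Human Characteristics | H8 | Has wisdom, intelligence, common sense, and good judgement |
| Human Characteristics | H9 | Appreciates culture and different cultural backgrounds |
| Human Characteristics | H10 | Consider other’s perspectives |
| Human Characteristics | H11 | Is patient |
| Human Characteristics | H12 | Balances professional and personal life |
| Human Characteristics | H13 | Is perceived as a virtuous person and a globally good person |
| Human Characteristics | H14 | Maintains health, appearance, and hygiene |
| Human Characteristics | H15 | Is modest and humble |
| Human Characteristics | H16 | Has a good sense of humour |
| Human Characteristics | H17 | Is responsible and conscientious |
| Human Characteristics | H18 | Is imaginative |
| Human Characteristics | H19 | Has self-insight, self-knowledge, and is reflective |
| Human Characteristics | H20 | Is altruistic |
| Human Characteristics | H21 | Others |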

Note: Italics denotes cognitive characteristics; Bold denotes non-cognitive characteristics.

Table 1. Classification of characteristics of outstanding clinical teachers (Sutkin et al., 2008)

E. Trustworthiness

To enhance the credibility of the research, member checking on the accuracy of interview transcription was done. The same transcription was coded by the PI (SO) and a co-researcher (CT), and the themes and differences were discussed and resolved together. The themes were then discussed with another co-researcher (JF) who is an outsider to the research setting. To contribute to the dependability of the data, a reflexivity diary was kept to reflect on the process and on the PI’s role and influence on this study. This was necessary because the PI is in overall charge of residency training, has vast experience in teaching both undergraduate and postgraduate learners, and has observed that undergraduates seem to value a teacher’s willingness to spend time teaching, whereas postgraduate learners value effective teaching on the job. The PI emphasised to participants that whatever they mentioned in this study would not affect them in any way in their assessments, selection into a residency programme, job selection or career progression. As a point of note, none of the interviewees mentioned any of the authors by name in the interviews when describing an effective clinical teacher.

III. RESULTS

A total of six final year medical students from the YLLSoM, three males and three females with a mean age of 23 years, were interviewed. The resident group consisted of four males and two females: two internal medicine year 3 residents, one paediatric year 5 resident, one emergency medicine year 4 resident, one orthopaedic year 3 resident and one urology year 4 resident, with a mean age of 29 years (range 26-33 years). All 12 participants were of Chinese ethnicity.

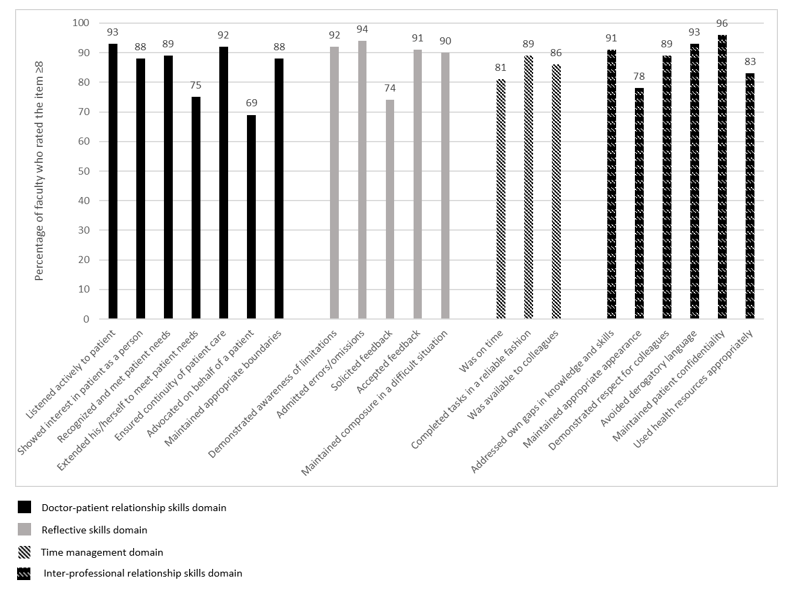

The characteristics of effective teachers were mapped onto the classification in Sutkin et al.’s (2008) review paper (Table 1). The majority could be mapped; those that could not were considered new characteristics. As summarised in Table 2, the top characteristic, identified equally by the medical student and resident groups, was approachability, in the non-cognitive domain. This was described as being “relatable, personable, forming good rapport, warm, able to remember students’ names, having a sense of humour, sharing personal experience”. Medical student 2 aptly described its importance: “Approachability in being willing to teach is an inborn trait. It acts as a screening tool. It opens the door for a student to decide whether or not this clinical tutor is someone she is likely to approach to learn from.” Interestingly, both groups unanimously identified the need for a clinical teacher to have a threshold level of clinical competence, followed by being warm and approachable with a passion to teach, but this latter requirement was emphasised as particularly important in undergraduate teaching. In contrast, a postgraduate trainee/resident was able to accept a less warm but skilful clinician from whom to learn advanced surgical skills because residents, being already in a training programme, were more capable of self-directed learning and could learn by observing.

| Total | MS | R | Characteristics | Teacher | Physician | Human | Cognitive | Non-Cognitive |
|---|---|---|---|---|---|---|---|---|
| 10 | 5 | 5 | Approachability | X (T3) | | X (H4) | | x |
| 9 | 3 | 6 | Passion/enthusiasm in teaching/engaging | X (T2) | | | | x |
| 8 | 5 | 3 | Provide effective explanations, answers to questions, and demonstrations (T4); Demonstrate clinical and technical skills/competence, clinical reasoning (P2) | X (T4) | X (P2) | | x | |
| 7 | 3 | 4 | Creates conducive learning environment | X (T1) | | X (H11, H15) | | x |
| 7 | 3 | 4 | Role modeling | x | x | X (H1, H6) | | x |
| 7 | 2 | 5 | Teach at appropriate level/know learning objectives | X (T6) | | | x | |
| 7 | 3 | 4 | Sacrifice time | x | | | x | |
| 6 | 3 | 3 | Realistic/concrete learning | X (T6) | | | x | |
| 6 | 2 | 4 | Feedback, supervision, assessment for learning | X (T5, T19) | | | x | |
| 5 | 2 | 3 | Knowledgeable/up to date/evidence-based | | X (P1) | | x | |
| 5 | 4 | 1 | Exam-oriented | x | | | x | |
| 4 | 2 | 2 | Inspirational to learning | X (T8, T9, T18) | | | | x |
| 4 | 1 | 3 | Clinical thinking/Demonstrate to impart/pedagogy | X (T9) | X (P2) | | x | |
| 3 | 2 | 1 | Nurturing/encouraging/compassion for students & team | X (T11) | | X | | x |
| 2 | 0 | 2 | Allows hands-on/encourages trainees active involvement in clinical work | X (T10) | | | | x |
| | | | Others: Strict, elocution, fair/moral compass (H13, H7), innovative (T12), directs learners, worldly-wise; empathy (H5), interpersonal skills, humour (H16) | | | | | |

Note: (T), (P) and (H) refer to the specific Sutkin et al.’s (2008) classification as given in Table 1.

Table 2. Characteristics of effective teachers identified by Medical Students (MS) and Residents (R) classified into teacher, physician and human characteristics and cognitive vs non-cognitive domains and mapped onto Sutkin et al.’s (2008) Classification (Table 1)

The second most important characteristic identified was having a passion/enthusiasm in teaching, in the non-cognitive domain. This was described as “engaging, enthusiastic to help residents learn, enthusiasm/infectious attitude rubs off, lively, draws out from learners, takes time to explain to students”. Resident 5 explained: “Passion is actually demonstrated in the knowledge you display. Because when you are interested in something, you can go on to explore the depth. People who display passion are able to depict the subject matter in a very interesting, personal and in a lively way. Passion is also about the desire to learn about things and to contribute to things. So in a sense teaching is not a passive tool for the diffusion of students … it’s also the ability to be able to draw things out from the students …draw contribution or ideas…”. Passion as a characteristic was mentioned by all the residents but not by all of the medical students.

The third most important characteristic identified can be summarised as “providing effective explanations, answers to questions, and demonstrations” (a teacher characteristic) and “demonstrates clinical and technical skills/competence, clinical reasoning” (a physician characteristic) in the cognitive domain. This was described as “being able to break down concepts into digestible chunks; being able to synthesise and teach in understandable way; how to think, synthesise and use information; concise, targeted, clear thinking; headings, subheadings, elaborations; clarity in giving instructions and thought so that everyone is on the same page; demonstrate better way of presenting and more accurate way of physical examination”. This was identified more in the medical student group than in the resident group.

Most of the other characteristics generally coincided with those in Sutkin et al.’s (2008) paper. Two of the cognitive domain skills were “teaching at appropriate level/knowing learning objectives” and being willing to “sacrifice time”, which demonstrated commitment to student education. The teachers who sacrificed their time gave additional teaching sessions and did not rush through them. The medical students and the residents identified this characteristic as something they really valued in undergraduate teaching. Another characteristic in the cognitive domain, “realistic/concrete learning”, was described as “bedside teaching; teaching with practical aspect, case-based teaching; use of clinical pictures, electrocardiogram, clinical quiz and learning aids”. This form of learning was identified as effective by the medical students and residents equally. In contrast, “feedback, supervision, assessment for learning”, described as “being able to discuss in detail as physically present; balance between supervision and resisting urge to take over in an operation; good feedback with balance of positive and negative points done in a fun and nice way”, was identified more by the residents than by the medical students.

Being “exam-oriented”, i.e., the teacher being able to prepare the students well for exams, was notably a characteristic identified mainly by the medical students, but it was not identified at all in Sutkin et al.’s (2008) paper or in other more recent references. To quote medical student 1, “I guess especially for medical students, it is whether this tutor prepares us well for the exams and in terms of meeting our academic objectives.” Medical student 5: “He teaches us very exam focused and he synthesises all the information very succinctly for exams.”

The medical students were specifically asked whether they identified a difference in the characteristics they valued in their teachers between when they were first introduced to clinical medicine in M3 compared to now in M5. The students almost unanimously expressed that in M3, as they had just been exposed to clinical medicine, they identified the need to build up their medical knowledge through more content-heavy didactic style of teaching that could be described as more of spoon-feeding than self-directed learning. Medical student 5 said, “Year 3 is more introductory kind of year so we don’t know anything. So what a good tutor to me in year 3 was whoever can teach me approaches, impart didactic teachings like knowledge.” They valued connections back to the basic sciences taught in their first two years of medical school and teachers who taught them how to approach patients. They were open to the gradual introduction of self-directed learning but it should not hold up the pace of the lesson if the students were unable to answer. In contrast, at the time of interview they were in M5 and they had two main aims. Their first aim was to look for good role-models for their upcoming internship and choice of residency for some. Hence, they appreciated bedside teaching with close supervision and feedback on medical knowledge applied to actual clinical care. Moreover, bedside examination skills and patient communications cannot be studied at home. At M5, they valued more self-directed learning as they were more equipped to search for information themselves unlike when they were in M3. They also greatly valued preparation for their final exams which would involve clinical examination in the form of Objective Structured Clinical Examination. In this aspect, they valued teachers who could teach them clinical reasoning on how to synthesise information to be applied to management of actual patients. The second aim had become more important as their final exams drew near. 
This feedback was also expressed by the residents when they recalled what they had looked for in their undergraduate years.

The residents, who were in their third year of residency and beyond, identified the need for more active, self-directed learning. They mentioned the need to ask the ‘why questions’ and to learn evidence-based clinical practice. They appreciated experienced tutors who shared pearls and personal experience with them. They preferred to learn from good teaching during ward rounds and clinics, and mentioned that didactic teaching was less important than in their undergraduate days and their first year of residency, when they still appreciated more spoon-feeding. As more senior residents, they found discussions, greater analysis, questions that identified knowledge gaps, opportunities to present, and testing useful because they already had a fund of medical knowledge.

IV. DISCUSSION

The results of this study suggest that there are differences in the perceived characteristics of an effective clinical teacher among medical students compared to residents. The results support Jonassen’s theory of constructivism (1991) as seen by the medical students at the beginning of their clinical year (M3) wanting more didactic teaching to ‘spoon-feed’ them with medical knowledge. As these students move on to become more senior in M5, and then residency, they start appreciating teachers who help them become more self-directed learners. These more senior learners also value feedback to help them deal with more complex ill-defined problems that they encounter during their daily clinical work. This is supported by more residents than medical students identifying feedback and supervision as well as clinical decision making/thinking as important characteristics of an effective clinical teacher (Table 2).

It is also interesting to note that the top two characteristics, approachability and passion/enthusiasm in teaching, are both in the non-cognitive domain. In fact, they are probably the fundamental attributes that turn a good teacher into a great one: they lead to extensive teaching experience which, coupled with feedback from learners, makes such teachers good at simplifying and explaining concepts, especially in undergraduate teaching. Provided a teacher has baseline clinical competence, students value clinical teachers who want to teach over excellent clinicians who lack the soft skills and approachability that give students the courage to learn effectively from them. In contrast, the residents are willing to accept less ‘warm’ teachers if they are able to learn advanced clinical skills from them, particularly in the procedural specialties.

One characteristic that has not been identified in any of the references, including Sutkin et al.’s (2008) review paper, is that of being exam-oriented. This was identified by four of the medical students but by only one of the residents, who mentioned it while recalling his undergraduate days. This is not too surprising because Frambach et al. (2012) found that Asian students tend to strive for success and to rank among the top achievers in an examination. The exam-orientedness of the students can also be explained by the fact that the YLLSoM is Asia’s leading medical school (QS Top Universities, 2015; Times Higher Education, 2015) and therefore attracts the crème de la crème of Singapore’s students, as seen by both the 10th and 90th percentiles of medical students having all A grades in their Singapore-Cambridge GCE A-level admission scores (National University of Singapore, 2019). Moreover, Singapore practises meritocracy (Prime Minister’s Office, 2015), and in a small country of only 719.1 km² with a population of 5.35 million (World Bank, 2015) and only three public healthcare clusters, doing well in exams is seen as a tried and tested way of securing a good future. Failing a high-stakes exam such as the final Bachelor of Medicine and Bachelor of Surgery (MBBS) exams delays progression to the next stage of one’s career, such as admission to a residency training programme. In a small country like Singapore, where there are perceived to be few opportunities to start afresh, it is not surprising that so much emphasis is placed on doing well in exams and that a teacher who is able to prepare students well for exams is greatly valued.

There are several limitations to this study. Although we had wanted to recruit interviewees of different ethnicities, all 12 who responded to our invitation were Chinese, although they attended a multi-cultural and multi-ethnic public school. Another limitation is that this study explores only the perceptions of the learners themselves. The findings would be more balanced if the viewpoints of the teachers were obtained as well.

V. CONCLUSION

This study suggests that there are differences in the perceptions of an effective clinical teacher between medical students and residents. Medical students valued a more didactic, spoon-feeding type of teacher in their earlier clinical years. However, final year medical students and residents valued feedback and role-modelling in clinical practice. The top two characteristics, approachability and passion for teaching, are in the non-cognitive domain. The results of this study will help to inform educators of the differences in a learner’s needs at different stages of their clinical development and to potentially adapt their teaching styles. In addition, certain non-cognitive domain skills may be developed by recognising clinical teachers who are role models, who show by example the art of the practice of Medicine and who are able to create a conducive, non-threatening learning environment. There are certainly faculty development programmes that target how to develop a conducive learning environment.

Notes on Contributors

Shirley Ooi, MBBS(S’pore), FRCSEd(A&E), MHPE(Maastricht) is senior consultant emergency physician at NUH and associate professor at NUS. She was the Designated Institutional Official NUHS Residency programme at the time of the study. Currently she is the Associate Dean at NUH. This study was her MHPE thesis. She reviewed the literature, designed the study, conducted the interviews, analysed the transcripts and wrote the manuscript.

Clement Tan, MBBS(S’pore), FRCSEd (Ophth), MHPE(Maastricht), is associate professor, senior consultant and head of the Department of Ophthalmology, NUS and NUH. He was the first author’s local MHPE thesis supervisor. He co-analysed the transcripts and approved the final versions of the manuscripts.

Janneke M. Frambach PhD is assistant professor at the School of Health Professions Education, Faculty of Health, Medicine and Life Sciences, Maastricht University, the Netherlands. She was the first author’s MHPE thesis supervisor. She supervised the study from the beginning to the final stage of manuscript writing with its revisions.

Ethical Approval

This study was reviewed and approved by the NUS Institutional Review Board (approval no. 3172), which considered the letter of invitation for recruitment of participants, participant information sheet, written informed consent for the audio-recordings of the one-on-one interviews, interview guide and confidentiality of participants.

Acknowledgements

The authors would like to thank the following for their help, advice and support, without which this study would not have been possible:

- Medical student, Gerald Tan, for his help in transcribing many of the interviews.

- The six YLLSoM medical students who had willingly come forward to be interviewed for this study.

- The six NUHS residents who had willingly spared their time to be interviewed for this study.

Funding

No grant or external funding was received for this study.

Declaration of Interest

The PI, as the interviewer, emphasised to the participants that whatever they mentioned in this study would not affect them in any way in their assessments, selection into a residency programme, job selection or career progression. Moreover, their participation was entirely voluntary. The other two authors had no conflict of interest.

References

Bednar, A. K., Cunningham, D., Duffy, T. M., & Perry, J. D. (1991). Theory into practice: How do we link? In G.J. Anglin (Ed.), Instructional Technology: Past, Present, and Future. Englewood, CO: Libraries Unlimited.

Branch, W. T., Osterberg, L., & Weil, R. (2015). The highly influential teacher: Recognising our unsung heroes. Medical Education, 49, 1121-27.

Ciraj, A., Abraham, R., Pallath, V., Ramnarayan, K., Kamath, A., & Kamath, R. (2013). Exploring attributes of effective teachers-student perspectives from an Indian medical school. South-East Asian Journal of Medical Education, 7(1), 8-13.

Elzubeir, M. A., & Rizk, D. E. E. (2001). Identifying characteristics that students, interns and residents look for in their role models. Medical Education, 35, 272-277.

Frambach, J. M., Driessen, E. W., Chan, L. C., & van der Vleuten, C. P. M. (2012). Rethinking the globalization of problem-based learning: How culture challenges self-directed learning. Medical Education, 46, 738-747.

Haider, S. I., Snead, D. R., & Bari, M. F. (2016). Medical students’ perceptions of clinical teachers as role model. PloS ONE, 11(3): e0150478. https://doi:10.1371/journal.pone.0150478

Hatem, C. J., Searle, N. S., Gunderman, R., Krane, N. K., Perkowski, L., Schutze, G. E., & Steinert, Y. (2011). The educational attributes and responsibilities of effective medical educators. Academic Medicine, 86(4), 474-480.

Hillard, R. I. (1990). The good and effective teacher as perceived by paediatric residents and faculty. American Journal of Diseases of Childhood, 144, 1106 –1110.

Jacobs, J. L., & Samarasekera, D. D. (2012). How we put into practice the principles of embedding medical students into healthcare teams. Medical Teacher, 34, 1008-1011.

Jonassen, D. H. (1991). Evaluating constructivistic learning. Educational Technology, 31(9), 28-33.

Kernan, W. N., Lee, M. Y., Stone, S. L., Freudigman, K. A., & O’Connor, P. G. (2000). Effective teaching for preceptors of ambulatory care: A survey of medical students. American Journal of Medicine, 108(6), 499-502.

Kikukawa, M., Nabeta, H., Ono, M., Emura, S., Oda, Y., Koizumi, S., & Sakemi, T. (2013). The characteristics of a good clinical teacher as perceived by resident physicians in Japan: A qualitative study. BMC Medical Education, 13(1), 100.

Kua, E. H., Voon, F., Tan, C. H., & Goh, L. G. (2006). What makes an effective medical teacher? Perceptions of medical students. Medical Teacher, 28(8), 738-741.

Low, M. J. W., Khoo, K. S. M., Kuan, W. S., & Ooi, S. B. S. (2020). Cross-sectional study of perceptions of qualities of a good medical teacher among medical students from first to final year. Singapore Medical Journal, 61(1), 28-33.

Mohan, N., & Chia, Y. Y. (2017). Our first steps into surgery: The role of inspiring teachers. The Asia-Pacific Scholar, 2(1), 29-30. https://doi.org/10.29060/TAPS.2017-2-1/PV1027

National University of Singapore. (2019). National University of Singapore Undergraduate Programmes Indicative Grade Profile. Retrieved from http://www.nus.edu.sg/oam/gradeprofile/sprogramme-igp.html

Nishiya, K., Sekiguchi, S., Yoshimura, H., Takamura, A., Wada, H., Konishi, E., Saiki, T., Tsunekawa, K., Fujisaki, K., & Suzuki, Y. (2019). Good clinical teachers in Paediatrics: The perspective of paediatricians in Japan. Paediatrics International, 62(5), 549-555.

Prime Minister’s Office. (2010, May 5). “Old and new citizens get equal chance,” says MM Lee. [Press release].

Paukert, J. L., & Richards, B. F. (2000). How medical students and residents describe the roles and characteristics of their influential clinical teachers. Academic Medicine, 75, 843-845.

QS Top Universities. (2015) QS World University Rankings 2015-20116. Retrieved from: https://www.topuniversities.com/university-rankings/world-university-rankings/2015

Savin-Baden, M., & Howell Major, C. (2013). Pragmatic qualitative research. In M. Savin-Baden & C. H. Major (Eds). Qualitative Research. The Essential Guide to Theory and Practice. London: Routledge.

Schiffer, R. B., Rao, S. M., & Fogel, B. S. (2003). Neuropsychiatry: A Comprehensive Textbook (2nd ed.). Philadelphia, PA: Lippincott Williams and Wilkins.

Searle, N. S., Hatem, C. J., Perkowski, L., & Wilkerson, L. (2006). Why invest in an educational fellowship program? Academic Medicine, 81, 936-940.

Singh, S., Pai, D. R., Sinha, N. K., Kaur, A., Soe, H. H. K., & Barua, A. (2013). Qualities of an effective teacher: What do medical teachers think? BMC Medical Education, 13(128), 1-7.

Sutkin, G., Wagner, E., Harris, I., & Schiffer, R. (2008). What makes a good clinical teacher in Medicine? A review of the literature. Academic Medicine, 83(5), 452-466.

Times Higher Education. (2015). The World University Rankings 2015-16. Retrieved from: https://www.timeshighereducation.com/student/news/best-universities-world-revealed-world-university-rankings-2015-2016

Venkataramani, P., Krishnaswamy, N., Sugathan, S., Sadanandan, T., Sidhu, M., & Gnanasekaran, A. (2016). Attributes expected of a medical teacher by Malaysian medical students from a private medical school. South-East Asian Journal of Medical Education, 10(2), 39-45.

White, J. A., & Anderson, P. (1995). Learning by internal medicine residents: Differences and similarities of perceptions by residents and faculty. Journal of General Internal Medicine, 10, 126-132.

World Bank. (2015). Annual Report 2015. Retrieved from: https://www.worldbank.org/en/about/annual-report-2015

*Shirley Ooi

Emergency Medicine Department,

National University Hospital

9 Lower Kent Ridge Road, Level 4,

National University Centre for Oral Health Building,

Singapore 119085

Tel: (65)6772-2458

Fax: (65)6775-8551

Email: shirley_ooi@nuhs.edu.sg

Submitted: 15 April 2020

Accepted: 5 June 2020

Published online: 5 January, TAPS 2021, 6(1), 49-59

https://doi.org/10.29060/TAPS.2021-6-1/OA2248

Amaya Tharindi Ellawala¹, Madawa Chandratilake² & Nilanthi de Silva²

¹Department of Medical Education, Faculty of Medical Sciences, University of Sri Jayewardenepura, Sri Lanka; ²Faculty of Medicine, University of Kelaniya, Sri Lanka

Abstract

Introduction: Professionalism is a context-specific entity, and should be defined in relation to a country’s socio-cultural backdrop. This study aimed to develop a framework of medical professionalism relevant to the Sri Lankan context.

Methods: An online Delphi study was conducted with local stakeholders of healthcare, to achieve consensus on the essential attributes of professionalism for a doctor in Sri Lanka. These were built into a framework of professionalism using qualitative and quantitative methods.

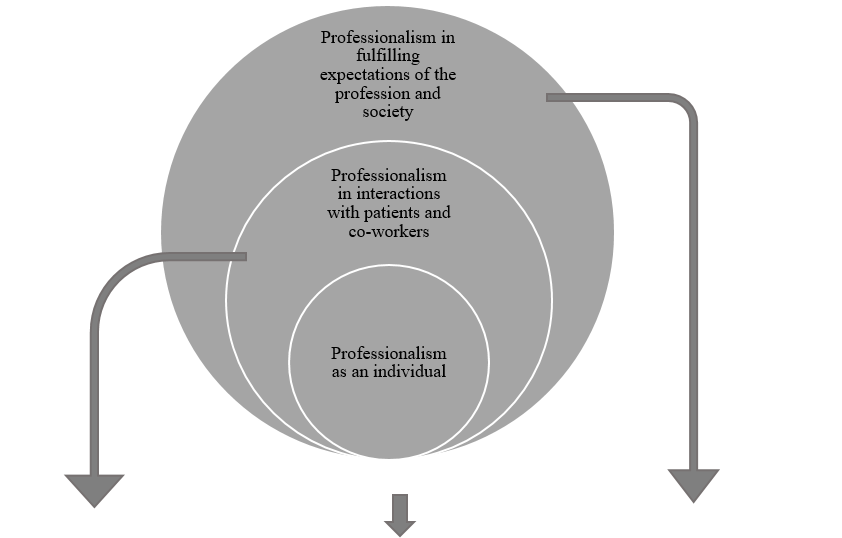

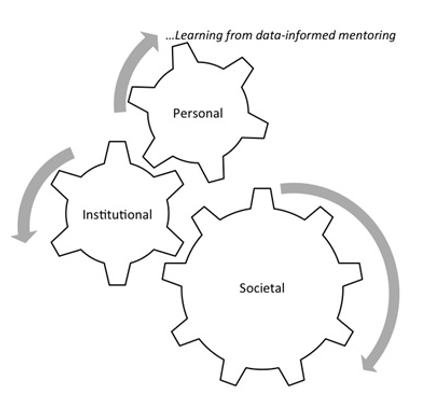

Results: Forty-six attributes of professionalism were identified as essential, based on Content Validity Index supplemented by Kappa ratings. ‘Possessing adequate knowledge and skills’, ‘displaying a sense of responsibility’ and ‘being compassionate and caring’ emerged as the highest rated items. The proposed framework has three domains: professionalism as an individual; professionalism in interactions with patients and co-workers; and professionalism in fulfilling expectations of the profession and society. It also displays certain characteristics unique to the local context.

Conclusion: This study enabled the development of a culturally relevant, conceptual framework of professionalism as grounded in the views of multiple stakeholders of healthcare in Sri Lanka, and prioritisation of the most essential attributes.

Keywords: Professionalism, Culture, Consensus

Practice Highlights

- Medical professionalism is recognised as a culturally dependent entity.

- This has led to the emergence of definitions unique to socio-cultural settings.

- List-based definitions provide operationalisable means of portraying its meaning.

- A Delphi study was conducted to achieve consensus on locally relevant professionalism attributes.

- Using quantitative and qualitative methods, a conceptual framework of professionalism was developed.

I. INTRODUCTION

There is no single definition of medical professionalism that encompasses its many subtle nuances (Birden et al., 2014). The realisation that professionalism is a dynamic, multi-dimensional entity (Van de Camp, Vernooij-Dassen, Grol, & Bottema, 2004), significantly dependent on context (Van Mook et al., 2009) and cultural backdrop (Chandratilake, McAleer, & Gibson, 2012), has led to the emergence of definitions specific to cultures and socio-economic backgrounds.

Many of the current definitions originate from Western societies. Certain Eastern cultures have embraced such definitions, though they are undeniably in conflict with local traditional views (Pan, Norris, Liang, Li, & Ho, 2013). In parallel, however, countries such as Egypt, Saudi Arabia, Japan, China and Taiwan have explored how professionalism is conceptualised within their contexts (Al-Eraky, Chandratilake, Wajid, Donkers, & Van Merrienboer, 2014; Leung, Hsu, & Hui, 2012; Pan et al., 2013). Such studies have portrayed the interplay between cultural, socio-economic and religious factors in shaping perceptions of professionalism, further fuelling the notion that professionalism must be “interpreted in view of local traditions and ethos” (Al-Eraky et al., 2014, p. 14).

Culture is the embodiment of elements such as attitudes, beliefs and values that are shared among individuals of a community and is, therefore, an entity that distinguishes one group of people from another (Hofstede, 2011). Various cultural theories provide insight into inter-cultural differences across the globe (Hofstede, n.d.; Schwartz, 1999). The Sri Lankan cultural context, while aligned with those of its closest geographical neighbours in South Asia in some ways, differs from them in other important aspects.

Some attempts have been made to explore the meaning of professionalism in Sri Lanka. Chandratilake et al. (2012) provided a degree of insight while comparing cultural similarities and dissonances in conceptualising professionalism among doctors of several nations. Monrouxe, Chandratilake, Gosselin, Rees, and Ho (2017) built on this work with their analysis of professionalism as viewed by local medical students. The sole regulatory authority of the medical profession in the country, the Sri Lanka Medical Council (SLMC, 2009), has delineated what it expects in terms of professionalism by outlining the constituents of ‘good medical practice’, many of which converge with elements of professionalism described in the literature.

While the work mentioned here has shed some light on the topic, to our knowledge, no studies have focused solely on the local conceptualisation of professionalism, drawing on the views of diverse stakeholders of healthcare.