Exploring online learning interactions among medical students during a self-initiated enrichment year

Submitted: 30 April 2020

Accepted: 8 September 2020

Published online: 4 May, TAPS 2021, 6(2), 66-77

https://doi.org/10.29060/TAPS.2021-6-2/OA2391

Pauline Luk & Julie Chen

The University of Hong Kong, Hong Kong

Abstract

Introduction: A novel initiative allowed third-year medical students to pursue experiential learning during a year-long Enrichment Year programme as part of the core curriculum. ‘connect*ed’, an online virtual community of learning, was developed to provide learning and social support to students and to help them link their diverse experiences with the common goal of being a doctor. This study examined the nature, pattern, and content of online interactions among medical students within this community of learning to identify features that support learning and personal growth.

Methods: This was a quantitative-qualitative study using platform data analytics, social network analysis, and thematic content analysis to analyse the nature and pattern of online interactions. Focus group interviews with the faculty mentors and medical students were used to triangulate the results.

Results: Students favoured online interactions focused on sharing and learning from each other rather than structured tasks. Multimedia content, especially images, attracted more attention and stimulated more constructive discussion. We identified five patterns of interaction. Degree centrality and reciprocity did not affect team interactivity, but mutual encouragement by team members and mentors can promote a positive team dynamic.

Conclusion: Online interactions that are less structured, relate to personal interests, and use multimedia appear to generate the most meaningful content, and teams do not necessarily need a leader to be effective. A structured online network that adopts these features can better support learners who are geographically separated and engaged in different learning experiences.

Keywords: Online Learning, Undergraduate, Interaction, Experiential Learning

Practice Highlights

- Image-based messages and less structured online activities focused on experience-sharing engage students and stimulate a more constructive discussion.

- The proactivity of students and mentors can foster a positive team dynamic and learning experience.

- A team or group leader is not always necessary to promote group interaction.

I. INTRODUCTION

Increasingly, medical schools are recognising the potential of a holistic, experiential curriculum to nurture the professional development of their students (Kallail et al., 2020). A growing body of evidence supports the benefits of experiential learning, which has been associated with increased interest in learning (Kallail et al., 2020), a better understanding of career choice (Lyons, 2017), and higher-order critical thinking skills (Alamodi et al., 2018).

Beginning in 2018-19, the Li Ka Shing Faculty of Medicine of The University of Hong Kong (HKUMed) introduced a mandatory, credit-bearing Enrichment Year for all third-year medical students. This initiative provided opportunities for substantive engagement in a personal area of interest related to research, service or humanitarian work, pursuit of a higher degree, or university exchange anywhere in the world in order to further the professional and personal development of students.

Recognising the difficulties students may encounter when they are off-campus and the need to support student experiential learning, we developed an online virtual community of learning called ‘connect*ed’ to provide learning and social support to students and to help them link their diverse experiences with their common goal of becoming a doctor. The idea of an online virtual learning space is well situated within the social constructivist theoretical framework (Vygotsky, 1978), which views social interaction as the basis for learning. Individuals develop and construct knowledge better when interacting with others than when unilaterally receiving information, so learning is conceptualised as a collaborative process. Building on this idea, Lave and Wenger (1991) described ‘communities of practice’ in which socially supported learning takes place. In this related theory, social learning takes place within communities of practice, defined as groups who have a common interest or domain and who engage and interact in shared activities, thus developing a relationship. This dialogic interaction among the learner, peers and tutor evolves over time and can take place and be captured in the virtual learning space to support the evolution of work (Greenberg, 2006). In the higher education setting, online discussion forums and Web 2.0 technologies such as blogs and wikis draw on the benefits of social learning and communities of practice, giving students time to think, contribute, and give and receive feedback to help their learning.

The aim of this study is to examine the nature, pattern, and content of online interactions among medical students within the virtual community of learning, connect*ed, to identify features that support learning and personal growth. Findings will offer insight on how to further optimize collaborative online learning.

II. METHODS

A. Context

During the Enrichment Year, students were allocated to teams with a designated faculty mentor. Team composition was designed to maximise diversity of learning experiences, hence each team would have at least one student who was doing research, one doing service or humanitarian work, and one pursuing an exchange opportunity abroad. This allowed students to benefit from the experiences of their teammates. Prior to departure, a Launch Day was convened in June 2018 to facilitate team cohesion among members and their mentor, and to familiarise students with the connect*ed objectives, the e-learning platform, and their mentor and teammates.

We chose to use the commercially developed e-platform, Workplace by Facebook, to house connect*ed after extensive consultation and testing with stakeholders. The interface of Workplace is very similar to Facebook but operates in a closed community only accessible to registered connect*ed users. This helped to address legitimate privacy and confidentiality concerns while providing a user-friendly and familiar platform that students and teachers were willing to use.

Teams were encouraged to share their learning experience with each other and with their mentor on Workplace. Structured learning modules called “Inquiry Pods” (IP) were released online on a regular basis to help facilitate the sharing and discussion. The themes for the Inquiry Pods were communicator, ethical decision-maker, and global citizen, based on the six educational aims and learning outcomes of the university and the Bachelor of Medicine and Bachelor of Surgery (MBBS) programme (HKU, 2017). Students completed each IP by posting, commenting, and reacting to trigger material provided in the IP or based on their own or others’ Enrichment Year experience. Most of the posts were photos, videos, text, or online information shared via hyperlinks.

connect*ed is a graded component of the Enrichment Year and students must earn a pass (60%) in order to proceed to the next year of study. Team mentors graded each Inquiry Pod as a formative assessment and, at the end of the year, provided a summative assessment based on overall performance in the IP, online participation and the team impact presentation. All the assessments were rubric-based (Appendix 1: Grading rubrics).

B. Study Design

This was a mixed-methods quantitative-qualitative study that combined analyses of platform analytic data with qualitative information drawn from student work and focus group discussions (FGD) to provide a richer understanding of online learning interactions among students (Ma, 2012).

C. Subjects

In the academic year 2018-19, 206 students participated in the Enrichment Year. They participated in 302 activities in Hong Kong and in 23 different cities around the world (Appendix 2: Activities undertaken by students in 2018-2019). These students were selectively divided into 33 teams of five to eight students, according to gender, destination and nature of activities, to ensure the most diversified combination of members.

D. Data Sources, Collection and Analysis

1) Level of activity: At the end of the first academic year, we evaluated the students’ online activity by analysing the usage data collected through the Application Programming Interface (API) of Workplace from June 2018 to May 2019. These data showed how frequently students, mentors and teams posted, commented, replied, and reacted on the platform.

2) Social network interaction: Social network analysis is a method for studying the structure of relationships and the effect this social structure has on the attitudes, behaviour, and performance of the individual members of a group (Saqr et al., 2018). We extracted the Workplace data using the Workplace Graph API, which allowed us to model objects as nodes joined along edges, and developed a web tool (PHP + Vue.js + jQuery) to export the data from Workplace. We focused our analysis on team members’ position and role in teams. The extracted data were imported into the open-source software Gephi, which generated a graph for social network analysis. The software used nodes and edges to represent the connections between each member of the team and presented the interactions within the social network in terms of the size, gradient, and direction of the communication (Bastian et al., 2009).
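To make the node-and-edge representation concrete, the following is a minimal Python sketch of how degree centrality and weighted in/out interaction totals could be derived from a directed interaction log. It is an illustration only: the edge list, the node names, and both helper functions are hypothetical, and it does not reproduce the authors' web tool or Gephi's internal calculations.

```python
from collections import defaultdict

# Hypothetical interaction log, not study data.
# (source, target, count): `source` responded to `target` `count` times.
EDGES = [
    ("mentor", "s1", 12),
    ("mentor", "s2", 10),
    ("s1", "mentor", 8),
    ("s2", "mentor", 9),
    ("s3", "mentor", 7),
    ("s1", "s2", 2),
]


def degree_centrality(edges):
    """Share of the other team members each node is directly connected to."""
    neighbours = defaultdict(set)
    for source, target, _count in edges:
        neighbours[source].add(target)
        neighbours[target].add(source)
    n = len(neighbours)
    if n < 2:
        return {node: 0.0 for node in neighbours}
    return {node: len(adjacent) / (n - 1) for node, adjacent in neighbours.items()}


def weighted_in_out(edges):
    """Total interactions received (in) and sent (out) by each node."""
    received = defaultdict(int)
    sent = defaultdict(int)
    for source, target, count in edges:
        sent[source] += count
        received[target] += count
    nodes = set(sent) | set(received)
    return {node: (received[node], sent[node]) for node in nodes}


centrality = degree_centrality(EDGES)
most_central = max(centrality, key=centrality.get)
print(most_central, round(centrality[most_central], 2))
```

In this toy team the mentor is connected to every student, so the mentor has the highest degree centrality, which corresponds to what the paper later describes as a single-centred, mentor-driven pattern.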

3) Content of posts: The content of posts by each team was analysed for common themes based on the type of messages posted on the platform. Initial codes were generated based on the purpose of the posts and then categorised to find the essence of each theme. This allowed us to identify how students were using the platform and thereby understand the basic functions of the virtual community of learning.

4) Feedback and focus group discussion: We conducted FGD with students and mentors from March to June 2019. There were 13 FGD with 30 mentors and three with nine students. Participation in FGD was voluntary and no monetary incentive was given to students or mentors. For mentors, the FGD was part of the evaluation, feedback and engagement effort to encourage them to continue their involvement in the project, which is why all mentors were invited and most participated, giving a high participation rate. For the student sessions, subjects were selected purposively: student volunteers who were keen to share their experience were included, and those who were comparatively inactive in the project were deliberately invited. Each interview session lasted for 60 to 90 minutes. A semi-structured interview guide with pre-determined questions was used to focus the conversation on desired themes. The questions for both mentors and students were similar and covered participants’ experiences with connect*ed, use of the Workplace platform, challenges and suggestions for improvement. All FGD were recorded by contemporaneous notes that were organised immediately following each session.

III. RESULTS

A. Level of Activity

There was a total of 815 posts, 8198 comments, and 6250 emoticon reactions: like (5843), love (169), haha (152), wow (71), sad (14), and angry (1) by 206 students and 33 mentors as summarised in Table 1.

| | Post (average) | Comment (average) | Reaction (average) |
|---|---|---|---|
| Mentor N=33 | 539 (16.3) | 1484 (44.9) | 3017 (91.4) |
| Students N=206 | 276 (1.3) | 6714 (32.6) | 3233 (15.7) |
| Total | 815 | 8198 | 6250 |

Table 1. Summary of online interactions in 2018-19
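The per-person averages and totals in Table 1 can be re-derived from the raw counts. The sketch below is a simple arithmetic check using the figures reported in the table; the dictionary layout and function names are illustrative choices, not part of the study's tooling.

```python
# Raw counts as reported in Table 1.
COUNTS = {
    "mentors": {"n": 33, "posts": 539, "comments": 1484, "reactions": 3017},
    "students": {"n": 206, "posts": 276, "comments": 6714, "reactions": 3233},
}

ACTIVITIES = ("posts", "comments", "reactions")


def per_capita(group):
    """Average number of posts, comments and reactions per person."""
    return {activity: group[activity] / group["n"] for activity in ACTIVITIES}


def totals(counts):
    """Sum each activity type across mentors and students."""
    return {
        activity: sum(group[activity] for group in counts.values())
        for activity in ACTIVITIES
    }


for name, group in COUNTS.items():
    averages = {k: round(v, 2) for k, v in per_capita(group).items()}
    print(name, averages)
print("total", totals(COUNTS))
```

The ratio for mentor comments is about 44.97, so the published value of 44.9 may reflect truncation rather than rounding; the other averages agree with the table to one decimal place.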

B. Social Network Interaction

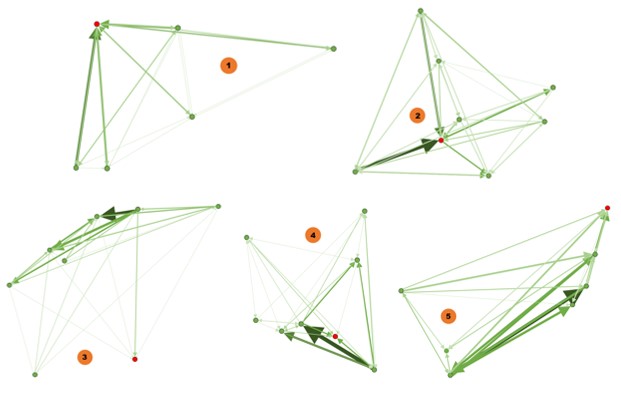

The pattern of interactions was visually represented in a social network graph generated with Gephi. In the diagram, the red node represents the mentor and the green nodes represent the students. The edges between the nodes represent the interactions: a thicker, darker edge indicates more interaction, and the arrow indicates the direction of the communication.

We categorised the patterns according to the number of responses of mentor and students. By comparing the frequency of responses (posts, comments, and reactions), we found that there were five common patterns of interaction that were reflected in all teams, regardless of their level of activity as summarised in Table 2.

| Pattern | Frequency of Posts | Frequency of Comments | Frequency of Reactions | Team identifier |
|---|---|---|---|---|
| 1 | High from mentor | High from mentor | High from mentor | 1, 9, 10, 11, 17, 21, 25, 26, 33 |
| 2 | High from mentor | High from mentor | Average/Low from mentor | 2, 19, 31 |
| 3 | High from mentor | Low from mentor | Average/Low from mentor | 3, 7, 14, 18, 28 |
| 4 | High from mentor | Low from mentor | High from mentor | 4, 6, 12, 13, 15, 20, 23, 27, 29 |
| 5 | High from mentor | Average from mentor and students | Any frequency | 5, 8, 16, 22, 24, 30, 32 |

Remark: High = high participation compared with the team average; Average = the mentor's participation is similar to that of the students; Low = low participation compared with the team average.

Table 2. Patterns of interactions among teams
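The High/Average/Low labels in Table 2 compare the mentor's activity with that of the team. One possible way to operationalise this is sketched below. The paper does not state a numeric cut-off, so the 25% tolerance band is an assumption, as is comparing the mentor against the student mean. The mentor counts are those reported for Team 1, while the individual student counts are hypothetical values chosen within the ranges reported for that team.

```python
from statistics import mean


def label(mentor_count, student_counts, tolerance=0.25):
    """Label mentor activity as 'H', 'A' or 'L' relative to the student mean.

    `tolerance` is a hypothetical band: within +/-25% of the student mean
    counts as 'A' (average).
    """
    baseline = mean(student_counts)
    if baseline == 0:
        return "H" if mentor_count > 0 else "A"
    if mentor_count > baseline * (1 + tolerance):
        return "H"
    if mentor_count < baseline * (1 - tolerance):
        return "L"
    return "A"


def label_team(mentor, students):
    """Apply `label` to posts, comments and reactions for one team."""
    return {
        activity: label(mentor[activity], [s[activity] for s in students])
        for activity in ("posts", "comments", "reactions")
    }


# Mentor figures as reported for Team 1; student figures are illustrative
# values inside the reported ranges (1-5 posts, 38-54 comments, 14-43 reactions).
team1_mentor = {"posts": 113, "comments": 236, "reactions": 227}
team1_students = [
    {"posts": 1, "comments": 38, "reactions": 14},
    {"posts": 2, "comments": 42, "reactions": 20},
    {"posts": 3, "comments": 46, "reactions": 28},
    {"posts": 4, "comments": 50, "reactions": 36},
    {"posts": 5, "comments": 54, "reactions": 43},
]

print(label_team(team1_mentor, team1_students))
```

With these inputs all three activity types are labelled high for the mentor, which matches the Pattern 1 row of Table 2. A different tolerance would shift borderline teams between categories, so any such threshold should be reported alongside the results.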

In general, all mentors were more active than students as teachers initiated new posts and were often keen to share information with students (Appendix 3). Even when students were encouraged to create new posts, they tended to focus on completing the tasks in the Inquiry Pods.

Diagram 1: Patterns of online interactions by teams

1) Pattern 1: Mentor degree centrality: The number of responses from mentors was much higher than that from students. For example, in Team 1, the mentor made 113 posts, 236 comments, and 227 reactions, while the five students each made 1 to 5 posts, 38 to 54 comments, and 14 to 43 reactions. Mentors were the centre point and driving force of the interaction. Students interacted with others in response to mentor facilitation, so degree centrality was directed towards the mentor. The thickness of the edges is evenly distributed, indicating a consistent level of interaction among all team members.

2) Pattern 2: Mentor degree centrality: As in Pattern 1, mentors were active in posting and commenting, but gave far fewer reactions than students. The interaction centred on the mentor and also on the most active students in the team, as shown by the two thick edges in the diagram. For instance, in Team 2, the mentor made 43 posts, 127 comments, and 19 reactions, while the seven students each made 1 to 12 posts, 28 to 65 comments, and 8 to 66 reactions. In this pattern, the mentor remained the centre point, but some student nodes shared thicker edges.

3) Pattern 3: Student degree centrality: Team 3 is an example in which the thick arrows point towards students, meaning that the interaction was initiated by students. The mentor took a less important role in the conversations and was situated outside the centre of interaction. For instance, in Team 3, the mentor made 12 posts, 19 comments, and 20 reactions, while the seven students each made 1 to 12 posts, 17 to 68 comments, and 0 to 64 reactions. In this pattern, the degree centrality shifted to students.

4) Pattern 4: Student degree centrality: The dynamics of interaction leaned towards active students, as represented by the thick edges towards certain students. In this pattern, there were usually multiple centre points that did not include the mentor. For instance, in Team 4, the mentor made 11 posts, 27 comments, and 90 reactions, while the seven students each made 0 to 3 posts, 28 to 78 comments, and 0 to 39 reactions. The degree centrality shifted to multiple students.

5) Pattern 5: Diversified degree centrality: Mentors were active in posting, commented about as often as the students, with comment counts broadly similar across all members, and gave few reactions. In this pattern, there are multiple conversation nodes, and most interactions are between students, as shown by the bi-directional arrows. These interactions are more student-driven and indicate multi-centred conversation. For instance, in Team 5, the mentor made 18 posts, 36 comments, and 3 reactions, while the six students each made 0 to 3 posts, 31 to 62 comments, and 4 to 29 reactions. The degree centrality is low, with diversified centres spread across more than one student.

These patterns show that teams could have single-centred interaction (Patterns 1 and 2) or multi-centred interaction (Patterns 3, 4, and 5), with each representing a different team dynamic. Team activity, and not the centredness of the interaction, was associated with the effectiveness of collaboration and the completion of tasks. In Teams 1 and 2, the interaction dynamics favoured the mentors, while in Teams 3, 4, and 5, the dynamics leaned towards active students. In addition, most teams demonstrated one-way communication when interacting, which means that the reciprocity of the network was low. In contrast, Team 5 demonstrated strong reciprocity.
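Reciprocity can be quantified as the share of directed edges whose reverse edge also exists. The sketch below uses this common definition on two hypothetical edge lists; it is offered as an illustration under that assumption and is not necessarily the measure the authors or Gephi applied.

```python
def reciprocity(edges):
    """Fraction of directed edges (a, b) for which (b, a) is also present.

    Self-loops are ignored. Returns 0.0 for an empty network.
    """
    edge_set = {(source, target) for source, target in edges if source != target}
    if not edge_set:
        return 0.0
    mutual = sum(1 for source, target in edge_set if (target, source) in edge_set)
    return mutual / len(edge_set)


# Hypothetical one-way team: students respond to the mentor, never the reverse.
one_way = [("s1", "mentor"), ("s2", "mentor"), ("s3", "mentor")]

# Hypothetical team with mostly two-way exchanges between students.
two_way = [
    ("s1", "s2"), ("s2", "s1"),
    ("s1", "s3"), ("s3", "s1"),
    ("mentor", "s1"),
]

print(reciprocity(one_way), reciprocity(two_way))
```

A value near 0 corresponds to the predominantly one-way communication described for most teams, while a value near 1 corresponds to the bi-directional exchanges described for Team 5.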

However, after comparing the patterns of all 33 teams, there is no indication that a certain pattern was better than the others. There was no significant difference in the on-time completion rate for the IP assignments for the five most active teams (86.9%) compared with all 33 teams (85.5%).

C. Content of Posts

In connect*ed, students shared their Enrichment Year experience using text, photos, videos, or links to other websites. The interactions were predominantly text-based, as it is easier to post and interact using text. However, image-based messages attracted more attention and stimulated more constructive discussion.

There were three particular areas that generated greater levels of interactivity. Firstly, students were very willing to share and reflect on their personal experiences. Taking the ‘Communicator’ Inquiry Pod as an example, students shared their observations on communication in their respective settings by posting on the team wall:

“There is a huge contrast here, where students actively ask questions even if the setting involves 80+ students. I suppose the background behind the two nationalities have a huge role in it, as Asians tend to be a bit more shy compared to the extrovertiveness commonly shared by Westerners. While we should embrace who we were and are, I think it is also beneficial to observe others and learn from such observations.”

Student A (studied abroad)

This text-based conversation thread compared and contrasted effective classroom communication in different countries. It enabled students to reflect and to draw on their own experiences to benefit all team members.

The second area of interest for students was social support. One of the most popular activities was the posting of photos and videos about their Enrichment Year activities, such as performing social service missions, cooking a gourmet meal or joining group gatherings during festive occasions. Those posts generated numerous responses and reactions, indicating a keen interest in reaching out and maintaining social connectedness.

Thirdly, students were more active online when the information being shared related to medical practice, and they were more willing to discuss their views, as shown in this sequence from Team 3:

“Being a MBBS student, people around may ask us for medical advice. They think we are knowledgeable to make a diagnosis based on their description and believe we are able to help. However, as we are not yet qualified, it is inappropriate for us to give any professional opinion. Sometimes, I would like to share what I have learnt and suggest some possible solutions. Nevertheless, at the same time, I am afraid my opinion would affect their health seeking behaviour, for instance, they might just follow what I share instead of seeing a doctor.”

Student B

“It’s true that we are not knowledgeable enough to give medical advice and it will be misleading to our relatives and friends if they take our opinions as professional advice and decide not to seek proper medical opinions. Thus, we should always remind ourselves of the role as medical students and think about the impacts of our words.”

Student C

“I understand your feeling as my relatives and friends also ask me for medical advice. It will be safer to advise them to seek help from medical professionals for diagnosis or other serious health issues. However, as a medical student, I think it is possible for us to give them some lifestyle advice without causing harmful consequences, for instance, smoking cessation, diet with lower cholesterol content and moderate exercise. Although we are not qualified to make a diagnosis at the moment, we can still use our medical knowledge as a way to promote public health and arise their health conscious.”

Student D

Students expressed their opinions more actively when the topics under discussion were related to the profession they are aiming to join.

D. Feedback and Focus Group Interviews

Although there were only 9 student interviewees, we found repeated themes and content suggesting data saturation. This may be because connect*ed comprised only 10% of the overall Enrichment Year and students did not pay particular attention to this component resulting in little variation in responses during the FGD.

The main theme that arose from the FGD with students and mentors was about the most rewarding aspect of the online interaction in connect*ed. Both groups indicated that this was the social connectivity attained through student sharing of day-to-day life during the Enrichment Year.

“The photo and video did not need much effort to share with others, but they are more interesting and can let me know more about how others were doing during their Enrichment Year.”

Student E

“I am very interested in knowing the life of others in other universities. I hope they can share more and we could see others’ videos.”

Student F

“Sharing things we learned with the team could help us to be more socialized.”

Student G

Mentors also enjoyed knowing more about students’ Enrichment Year life and believed that students should enjoy themselves while learning.

“The connect*ed is a good example helping students to bridge their knowledge and core value. The sharing of experience (related to the Enrichment Year) is important, it engages students in the discussion”

Mentor X

“The platform support each other very well. This is a platform for socializing and communicating. I know what students are doing if they posted on the team wall.”

Mentor Y

The value of social connectivity for support was further emphasized by students suggesting that the platform was more useful for social networking than learning.

“connect*ed provided a platform for us sharing the struggles and support each other when I was having my Enrichment Year.”

Student I

This view was echoed by mentors who believed that connect*ed provided necessary support for students especially those who were overseas. Mentors used the chat function on Workplace to have personalized communication with their members and to offer advice.

“I used the chat function on the Workplace, which is more personal and can support each other very well…. I can have immediate interaction with students.”

Mentor Z

Students found mentors were motivating and encouraged them to interact in teams which led to some mentor-centric team interactions.

“Our mentor is very motivating and encourages all members to participate in the discussion. She guided us through to complete the inquiry pods.”

Student J

“In my team, there are some inactive members who have demotivated me to interact. If there is an active member, I think it would help.”

Student K

The findings also indicated that proactivity by student members, participation by the mentor, responsiveness, and social/non-academic discussions fostered a positive team dynamic and a positive online learning experience, regardless of whether the team interaction was primarily single-centred or multi-centred.

IV. DISCUSSION

This study examined the nature, pattern, and content of online interactions among medical students within a virtual community of learning among the inaugural cohort of the Enrichment Year to identify features that support learning and personal growth.

Our results show that students favoured online interactions that were less structured, image-rich and focused on sharing of experience to learn from each other and to support one another. Multimedia content, especially images, attracted more attention and stimulated discussion that was more constructive. This is consistent with findings in the literature that show that images have a positive influence on learning and engagement (Chan & Unsworth, 2011; Stuijfzand et al., 2016). Sharing of personal experience helped students to reflect on their own experience and explore how others experienced their Enrichment Year. The results support previous studies that suggest self-reflection and community building enhance experiential learning (Arnold & Paulus, 2010; Pai, 2016). This builds a virtual community that allows students to share their struggles, which students found to be a crucial aspect of giving and receiving social support. The different modes of communication available on Workplace, including text messaging, voice calls and social media posting, provided flexible avenues of support to students. This finding is very similar to the outcomes of a project involving a mobile application for experiential learning activities (Schnepp & Rogers, 2017). We also observed that the number of positive reactions (like, love, haha or wow) far exceeded the number of negative reactions (sad and angry). These reactions are a visual form of encouragement from mentors and peers that reflected their interest in engaging with each other.

From the patterns derived from our social network analysis, we found that the interaction could be uni-directional or bi-directional, but the directionality was not correlated with team effectiveness in completing tasks on time. As seen in the social network patterns identified, active mentors can drive team interaction. However, in contrast with other findings in the literature, the degree centrality and reciprocity did not affect the team interactional dynamic (Jan & Vlachopoulos, 2019). Regardless of the directionality of the predominant interaction, if there are active members in the team or the mentors are motivating, these individuals are the key to generating more interaction and enhancing the online learning experience of students.

Ideally, both mentors and all students should be active, but having at least one or two more active students can raise the team dynamic. Once some students are willing to share their experience and give timely responses, it can stimulate others to join. Continued encouragement of active members and mentors can promote a positive team dynamic. In terms of degree centrality, the observation that no pattern of interaction was superior to the others suggests that a single leader is not always necessary for the team to be effective.

This study suggests that interactions will occur most naturally when students are doing what they feel is useful, such as maintaining social support with each other and their mentor. In order to be accepted, learning initiatives such as linking learning with experiential activities will need to be less formal and integrate more smoothly with students’ demonstrated desire for social support and interest in sharing experience. In addition, attention to team formation and ensuring opportunities to develop team cohesion would be essential, as students in the FGD commented that having members they did not know well was a hindrance to interaction. As connect*ed is one of the graded components of the Enrichment Year, we observed that the assessment could serve as an external motivator encouraging students to contribute to the work and support their team. However, it could also have a negative impact, as it may be perceived as an additional burden and may pressure some students to participate for the sake of participating and to do so in an inauthentic way.

V. CONCLUSION

The online virtual community, connect*ed, to support experiential learning for medical students is still at an early stage. Features of connect*ed that facilitated learning and personal growth included a focus on student support and sharing, especially with multimedia, less structured interactions, and teams with active members and/or an active mentor. It is important to note that interaction does not equate to learning (Jan & Vlachopoulos, 2019), so although the use of an online network that adopts these features may better support learners, its effectiveness in achieving formal learning outcomes should be further studied. We will continue to modify and evaluate the functionality of the connect*ed community to ensure it is fit-for-purpose to support students’ needs and learning.

Notes on Contributors

Pauline Luk and Julie Chen contributed to the design and implementation of the research, analysis of the results and writing of the manuscript. PL drafted the manuscript, and JC edited and contributed to the intellectual content of the manuscript and provided overall supervision of the project. Both authors approved the final manuscript.

Ethical Approval

This research received approval from the HKU Institutional Review Board (UW18-121). Consent was obtained from participants for the research study.

Acknowledgements

The authors sincerely appreciate the support from the mentors and students who participated in this study, the collaboration with the Education University of Hong Kong, and the administrative and technical support rendered by Mr. Francis Tsoi and Miss Joyce Tsang throughout the project.

Funding

This project was funded by the Hong Kong University Grants Committee (UGC) Funding Scheme for Teaching and Learning Related Proposals (2016-19 Triennium).

Declaration of Interest

The authors report no conflicts of interest.

References

Alamodi, A. A., Abu-Zaid, A., Eshaq, A. M., & Al-Kattan, K. (2018). The summer enrichment program: A multidimensional experiential enriching experience for junior medical students. The American Journal of the Medical Sciences, 356(2), 185-186. https://doi.org/10.1016/j.amjms.2018.05.005

Arnold, N., & Paulus, T. (2010). Using a social networking site for experiential learning: Appropriating, lurking, modeling and community building. The Internet and Higher Education, 13(4), 188-196. https://doi.org/10.1016/j.iheduc.2010.04.002

Bastian, M., Heymann, S., & Jacomy, M. (2009). Gephi: an open source software for exploring and manipulating networks. In the International AAAI Conference on Weblogs and Social Media. 361-362. Retrieved April 1, 2020, from https://vbn.aau.dk/ws/files/328840013/154_3225_1_PB.pdf

Chan, E., & Unsworth, L. (2011). Image–language interaction in online reading environments: Challenges for students’ reading comprehension. Australian Educational Researcher, 38(2), 181-202. https://doi.org/10.1007/s13384-011-0023-y

Greenberg, G. (2006). Can we talk? Electronic portfolios as collaborative learning spaces. In A. Jafari & C. Kaufman (Eds.), Handbook of Research on ePortfolios. Idea Group Inc.

HKU. (2017). Educational aims and institutional learning outcomes. Retrieved April 1, 2020, from http://www.handbook.hku.hk/ug/full-time-2017-18/important-policies/educational-aims-and-institutional-learning-outcomes

Jan, S., & Vlachopoulos, P. (2019). Social network analysis: A framework for identifying communities in higher education online learning. Technology, Knowledge and Learning, 24(4), 621-639. https://doi.org/10.1007/s10758-018-9375-y

Kallail, K. J., Shaw, P., Hughes, T., & Berardo, B. (2020). Enriching medical student learning experiences. Journal of Medical Education and Curricular Development, 7, 4. https://doi.org/10.1177/2382120520902160

Lave, J., & Wenger, E. (1991). Situated learning: Legitimate peripheral participation. Cambridge University Press.

Lyons, Z. (2017). Establishment and implementation of a psychiatry enrichment programme for medical students. Australasian Psychiatry, 25(1), 69-72. https://doi.org/10.1177/1039856216671663

Ma, L. (2012). Some philosophical considerations in using mixed methods in library and information science research. Journal of the American Society for Information Science and Technology, 63(9), 1859-1867. https://doi.org/10.1002/asi.22711

Pai, H. C. (2016). An integrated model for the effects of self-reflection and clinical experiential learning on clinical nursing performance in nursing students: A longitudinal study. Nurse Education Today, 45, 156. https://doi.org/10.1016/j.nedt.2016.07.011

Saqr, M., Fors, U., Tedre, M., & Nouri, J. (2018). How social network analysis can be used to monitor online collaborative learning and guide an informed intervention. PLoS One, 13(3). http://dx.doi.org/10.1371/journal.pone.0194777

Schnepp, J., & Rogers, C. (2017). Evaluating the acceptability and usability of EASEL: A mobile application that supports guided reflection for experiential learning activities. Journal of Information Technology Education: Innovations in Practice, 16, 195.

Stuijfzand, B. G., van Der Schaaf, M. F., Kirschner, F. C., Ravesloot, C. J., van Der Gijp, A., & Vincken, K. L. (2016). Medical students’ cognitive load in volumetric image interpretation: Insights from human-computer interaction and eye movements. Computers in Human Behavior, 62, 394-5632.

Vygotsky, L. S. (1978). Mind in Society. Harvard University Press. https://doi.org/10.2307/j.ctvjf9vz4

*Pauline Luk

5/F William MW Mong Block

21 Sassoon Road,

Pokfulam, Hong Kong

Email: pluk@hku.hk

Submitted: 21 August 2020

Accepted: 12 November 2020

Published online: 4 May, TAPS 2021, 6(2), 57-65

https://doi.org/10.29060/TAPS.2021-6-2/OA2378

Nicholas Beng Hui Ng1,2, Mae Yue Tan1,2, Shuh Shing Lee3, Nasyitah binti Abdul Aziz3, Marion M Aw1,2 & Jeremy Bingyuan Lin1,2

1Khoo Teck Puat-National University Children’s Medical Institute, National University Health System Singapore; 2Department of Paediatrics, Yong Loo Lin School of Medicine, National University of Singapore, Singapore; 3Centre for Medical Education (CenMED), Yong Loo Lin School of Medicine, National University of Singapore, Singapore

Abstract

Introduction: The coronavirus disease 2019 (COVID-19) pandemic has brought about additional challenges beyond the usual transitional stresses faced by a newly qualified doctor. We aimed to evaluate the impact of COVID-19 on interns’ stress, burnout, emotions, and implications on their training, while exploring their coping mechanisms and resilience levels.

Methods: Newly graduated doctors interning in a Paediatric department in Singapore, who experienced escalation of the pandemic from January to April 2020, were invited to participate. Participants completed the Perceived Stress Scale (PSS), Maslach’s Burnout Inventory (MBI), and Connor Davidson Resilience Scale 25-item (CD-RISC 25) pre-pandemic and 4 months into COVID-19. Group interviews were conducted to supplement the quantitative responses to achieve study aims.

Results: Response rate was 100% (n=10) for post-exposure questionnaires and group interviews. Despite working through the pandemic, interns’ stress levels were not increased, burnout remained low, while resilience remained high. Four themes emerged from the group interviews – the impacts of the pandemic on their psychology, duties, training, as well as protective mechanisms. Their responses, particularly the institutional mechanisms and individual coping strategies, enabled us to understand their unexpected low burnout and high resilience despite the pandemic.

Conclusion: This study demonstrated that it is possible to mitigate stress and burnout and to preserve the resilience of vulnerable healthcare workers such as interns amidst a pandemic. The study also validated that a multifaceted approach targeting the institutional, faculty and individual levels can ensure the continued wellbeing of healthcare workers even in challenging times.

Keywords: COVID-19, Stress, Burnout, Resilience, Junior Doctor, Intern

Practice Highlights

- Intern doctors face additional and unique challenges in a pandemic, besides the usual stresses of their school-to-work transition.

- Our study shows that a multi-faceted approach that targets the institution, faculty and individual can lead to reduced burnout and preserved resilience in these doctors.

I. INTRODUCTION

With the coronavirus disease 2019 (COVID-19) pandemic, there are new stressors contributing to burnout in healthcare workers. We were particularly interested in evaluating the impact of COVID-19 on newly qualified doctors doing their internship, also known as House Officers or post-graduate year 1 doctors in Singapore. This is a particularly vulnerable group of healthcare workers as the school-to-work transitional year is traditionally a challenging period with high reports of burnout (Low et al., 2019; Sturman et al., 2017).

In Singapore, the first case of COVID-19 was reported on 23 January 2020. By February 2020, Singapore had one of the highest numbers of cases outside China (Chia & Moynihan, 2020). A global pandemic was declared on 12 March 2020. In early April 2020, the government tightened local measures with a ‘Circuit Breaker’, akin to the lockdowns in many countries (Ministry of Health Singapore, 2020).

Newly graduated doctors in Singapore complete a 12-month training period (4-month rotations in 3 different disciplines) prior to full medical registration. The period of January to April 2020 was during their third block and coincided with the full evolution of the pandemic, which came with multiple unexpected changes in work within the hospital. These included new protocols for personal protection, team segregation and mechanisms to cope with the increase in COVID-19 cases. In our department, interns and residents were divided into active and passive teams rotating fortnightly. The active team shouldered the responsibility of caring for at-risk or COVID-19 paediatric patients, with an intense overnight call duty schedule that differed from the weekly frequency in the non-pandemic setting. In addition to work changes, overseas leave was cancelled and scheduled teaching sessions ceased.

With these changes, we aimed to evaluate the impact of the COVID-19 pandemic on interns in our department, focusing on their psychological well-being in terms of stress and burnout, and impact on clinical training. Our secondary aim was to explore the interns’ resilience, coping mechanisms and identify systemic measures they perceived as helpful during this pandemic.

II. METHODS

A. Study Design and Sample

This was a mixed-methods quantitative and qualitative study involving interns who worked from January to April 2020, in a paediatric department at a tertiary academic hospital that actively admitted COVID-19 patients. Informed consent was obtained from all participants for both the quantitative and qualitative components of the study.

B. Quantitative Data Methodology

Pre-pandemic data on perceived stress, burnout and resilience levels were collected a priori in early January 2020, when the interns first joined the department. This was part of a baseline evaluation of a separate study. We employed validated scales: the Perceived Stress Scale (PSS) (Cohen et al., 1983), the Maslach Burnout Inventory (MBI) for Health Services Survey (Maslach & Leiter, 2016), and the Connor-Davidson Resilience Scale 25-item (CD-RISC 25) (Connor & Davidson, 2003) to measure stress, burnout and resilience respectively. The PSS measures the perception of stress, and is designed to tap how unpredictable, uncontrollable, and overloaded respondents find their lives. Scores of 0-13, 14-26, and 27-40 indicate low, moderate, and high perceived stress, respectively. The MBI is a 22-item inventory with scores in 3 domains of burnout: emotional exhaustion (EE), depersonalization (DP), and low personal accomplishment (PA), each based on multiple questions. We used a strict definition of burnout as having fulfilled criteria in all 3 domains of the MBI (i.e. high EE ≥ 27, high DP ≥ 10, and low PA ≤ 33). A liberal definition (i.e. high EE ≥ 27 and high DP ≥ 10 with or without a low PA) was also measured, as both definitions are widely adopted in the literature (Rotenstein et al., 2018). The CD-RISC 25-item (English version) is a validated scale to measure resilience. It gives a score ranging from 0 to 100, with higher scores reflecting greater resilience. On completion of the posting at the end of April 2020, the interns repeated the same set of questionnaires.
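As an illustration only, the cut-offs quoted above can be written as a short Python sketch. The function names and the example scores are hypothetical and are not taken from the study data; the study itself scored the questionnaires according to the published manuals.

```python
def pss_category(score: int) -> str:
    """Band a Perceived Stress Scale total (0-40) using the cut-offs quoted in the text."""
    if not 0 <= score <= 40:
        raise ValueError("PSS total must lie between 0 and 40")
    if score <= 13:
        return "low"
    if score <= 26:
        return "moderate"
    return "high"


def mbi_burnout(ee: int, dp: int, pa: int) -> dict:
    """Apply the strict and liberal burnout definitions to MBI subscale scores.

    ee: emotional exhaustion, dp: depersonalization, pa: personal accomplishment.
    """
    high_ee = ee >= 27
    high_dp = dp >= 10
    low_pa = pa <= 33
    # Liberal: high EE and high DP, with or without low PA.
    liberal = high_ee and high_dp
    # Strict: criteria met in all three domains.
    strict = liberal and low_pa
    return {"strict": strict, "liberal": liberal}


# Hypothetical respondent: PSS total of 17, MBI subscales EE=30, DP=12, PA=40.
print(pss_category(17))
print(mbi_burnout(30, 12, 40))
```

Under these definitions, anyone who meets the strict definition also meets the liberal one, so the liberal count can never be smaller than the strict count for the same group.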

C. Qualitative Data Methodology: Group Discussions

We conducted group interviews to further evaluate the responses obtained from the questionnaires and to better understand the impact on the interns. Invitation emails were sent to all interns; participation was voluntary. The questions were developed to explore the challenges, emotions, psychological states and reflections of their coping mechanisms and supportive measures of the interns while working in the pandemic. The questions were developed and refined by the authors after discussion and consensus (Appendix 1). Two group interviews were conducted on separate days by the same interviewer, to maintain team segregation and physical distancing. Each group had 5 participants. The sessions were recorded and subsequently transcribed by an independent party.

D. Data Analysis

Quantitative data on the validated scales were scored according to the corresponding manuals. Descriptive and comparative analysis was done with SPSS, Version 23. For the interviews, thematic analysis was conducted. Two of the authors (SS & NAA) read the transcripts to fully understand the data and generated the initial codes independently. Next, codes with consistently similar content were grouped into sub-categories, and similar sub-categories were then combined into categories to form themes. Where there were differing views on the coding or themes, they re-examined the primary data and discussed further to achieve consensus.

III. RESULTS

A. Quantitative Results

We had a 90% response rate (n=9) for the pre-exposure and 100% (n=10) for the post-exposure questionnaires. PSS scores did not change appreciably among the interns despite the pandemic, with median scores in the moderate stress category both pre-exposure (17) and post-exposure (17.5). None of the interns reported high perceived stress post-exposure. Using the strict definition of burnout, burnout remained low at 20% post-exposure, compared to 11.1% pre-exposure (Table 1). Using the more liberal definition described in the Methods section, only 20% of participants met the criteria for burnout post-exposure, compared to 66.7% pre-exposure. High resilience levels were maintained, with a median score of 74 pre-exposure and 72.5 post-exposure.

| Measures | Pre-exposure (n=9) | Post-exposure (n=10) | p value |
|---|---|---|---|
| Perceived Stress Scale (PSS) | | | |
| Median (SD) | 17 (6.75) | 17.50 (5.70) | N.A. |
| Low stress, n (%) | 4 (44.4%) | 3 (30%) | 0.65 |
| Moderate stress, n (%) | 4 (44.4%) | 7 (70%) | 0.37 |
| High stress, n (%) | 1 (11.1%) | 0 (0%) | 0.474 |
| Maslach Burnout Inventory (MBI) | | | |
| No burnout, n (%) | 3 (33.3%) | 4 (40.0%) | 0.999 |
| Strict definition of burnout, n (%) | 1 (11.1%) | 2 (20.0%) | 0.999 |
| Liberal definition of burnout, n (%) | 6 (66.7%) | 2 (20%) | 0.09 |

Table 1: Quantitative results showing scores on the Perceived Stress Scale and Maslach Burnout Inventory of the interns pre-pandemic, compared with scores post-exposure (SD = standard deviation).

B. Qualitative Results

We had 100% participation in the group interviews (n=10). Four themes emerged from the qualitative analysis: psychological impact (feelings), impact on duties, impact on teaching and learning, and protective measures and support system. These are summarised in Table 2.

| Key theme | Sub-themes | Sample of quotations |
|---|---|---|
| Key Theme 1: Psychological Impact (Feelings) | a) Loss of control coping with many changes; b) Emotional exhaustion (fear, burnout, uncertainty, loneliness); c) Positive feelings | “…throughout the pandemic, there were a lot of unexpected changes and uncertainty among the junior doctors especially the PGY1s (referring to interns)…” “…COVID gives people much stress due to the uncertainty in a lot of things…” “the thought of COVID patients is scary” “…if I really contract this (COVID-19) I wouldn’t have too much concern (but) I was more scared I would pass it on to my family” “…stress stemming from fear” “… cannot help but experienced feelings of isolation and loneliness… I avoided my mother, who is immunocompromised as I worry about passing the infection to her even when I am off active COVID-care duty…” “feeling of being protected alleviated stress and concerns related to contracting the virus” “…months during pandemic (in the posting) were enriching and enjoyable…” “working during pandemic is deemed as ‘a badge of honour’” “felt the months during pandemic situation was a ‘good learning experience’” |
| Key Theme 2: Impact on Duties | a) Changes in clinical duties; b) Dealing with rapidly changing protocols | “felt that manpower shortage coupled with more frequent on-call duties within two weeks causes early burnout” “…I think on the ground level the protocol is always bleak, for example who to swab and when…” “delayed updating of protocol online led to a bit of confusion” “not getting updated instantaneously and lack of accessible to the information” |
| Key Theme 3: Impact on Teaching and Learning | a) Clinical exposure; b) Changes in teaching approaches | “…in terms of the variety of cases in posting, it is significantly affected due to pandemic that changed demographic of attendees” “…there wasn’t much teaching on-going until recently when we got the online platforms which I do feel is more helpful…” “due to having lesser patients, feels consultants have more time to teach” “while there is no group teaching, there is more teaching of cases on wards” |
| Key Theme 4: Protective Measures and Support System | a) Rotation system which ensured sufficient manpower and rest; b) Institutional measures for personal protection against COVID-19 infection; c) Seniors, Peers and Staff support; d) Self-adaptability and resilience | “…we have enough manpower to actually toggle between the rotations for COVID-care and non-COVID services…” “…PGY1s (Interns) are protected as we don’t swab the patients and we don’t have to expose ourselves to the possible aerosolisation of the secretions, so I think that really protected us and relieved our stress…” “… regular meetings (with) seniors that sat down to uncover our worries… seniors were open to taking feedback about rostering and manpower…” “…I really think it’s the support that has been given by the department and the institution, and the seniors especially have been very supportive…” “…think of the hardships faced by other health professionals, one’s situation will not compare to theirs” “…stay strong, persevere, and that everyone will get through it together by supporting each other” “…remember that it was a choice and that it is also a privilege to be in medicine…” |

Table 2: Summary of key themes and sub-themes as well as verbatim quotations from our interns, from the group interviews.

1) Theme 1 – Psychological Impact (Feelings): Most interns perceived that the pandemic had caused drastic changes in their personal and work lives, with various psychological impacts. They expressed increased emotional exhaustion, such as stress and burnout, which was mainly related to changes in their clinical duties (Theme 2). The interns also shared concerns about the risk of COVID-19 infection to themselves and especially to family and loved ones, which increased their worries and stress. Interns followed physical distancing measures and team segregation at work, and several also avoided their loved ones at home, especially the elderly and immunocompromised. These interns further shared feelings of isolation and loneliness. Positive emotions such as feeling secure, valued and protected existed simultaneously and were mainly associated with the protective measures and support systems (Theme 4) in the workplace. Some also reported that the posting was still enjoyable and that they felt proud to be working in the pandemic.

2) Theme 2 – Impact on Duties: The interns highlighted that there were many changes in institutional work processes and their duties due to the pandemic. Due to manpower changes, there were pervasive reports of physical fatigue. There were, however, those who felt the workload was still manageable. Interns also raised the issue of untimely information and unclear protocols, which often led to confusion and uncertainty in their work.

3) Theme 3 – Impact on Teaching and Learning: There were mixed comments on this. As a result of strict physical distancing and team segregation, initial planned teaching sessions on general paediatrics were cancelled and the interns felt they “missed out” on their clinical training. Sessions were subsequently conducted using web-based platforms, which many found helpful. All interns felt that learning was restricted in the pandemic. Although it was beneficial to learn about pandemic response and management of suspected or affected COVID-19 patients, they felt their exposure to general paediatrics was reduced due to the limited variety of ward cases. However, there were some who felt there was better quality of teaching on the ward rounds as consultants had more time to teach with fewer elective and non-urgent cases in the rotations of non-COVID care.

4) Theme 4 – Protective Measures and Support Systems: Despite the impacts on the interns’ psychology, duties and learning, they also described various protective measures and support systems that they perceived had helped them cope. These were also the main reason for reported positive feelings of protection and support. Departmental and institutional work processes were implemented to take care of the interns’ physical and psychological welfare, such as a rotational system of team segregation, which they reported provided a strict work-rest cycle as well as respite from COVID-care. In addition, seniors and faculty ensured interns were competent and comfortable dealing with COVID-19 patients prior to taking on high-risk duties such as swabbing patients. Support from multiple levels (seniors, department, institution) helped them through. In particular, the seniors and faculty provided support to the interns through regular “check-in” meetings where they could share concerns and provide feedback. The interns also shared that, as a result of the strong support received, they were able to develop adaptability, perseverance and resilience, and they were even grateful to be in healthcare at this time.

IV. DISCUSSION

According to the demand-control-support model (Thomas, 2004), occupational stress causes burnout when job demands are high, individual autonomy is low and when job stress interferes with home life (Campbell et al., 2001; Linzer et al., 2001). On that note, we hypothesised that with the COVID-19 pandemic, interns would have increased stress and burnout, in addition to their routine difficulties in the transition from student to doctor. The pandemic-related concerns our interns had were similar to those of many healthcare workers globally, including the fear of contracting COVID-19 and, more so, of transmitting it to vulnerable loved ones (Chen et al., 2020). Physical fatigue was also seen in our interns given the more intensive work schedule (Sasangohar et al., 2020). Although the total number of admissions during the period was reduced to 40% of the usual load, the need for team segregation led to a smaller pool of interns covering each clinical area. In addition, each intern had to do more in-house night calls while on active service. Segregation also meant less cross-coverage of duties, so interns received less support from peers who would otherwise have been able to help with the workload on the ground. Another important source of reported stress among many was the frequent changes in clinical workflows coupled with the lack of timely and reliable information (Wu et al., 2020). Many interns also highlighted concerns regarding compromise of and interference with their paediatric internship training (Liang et al., 2020). Despite all this, the interns’ perceived stress objectively remained unchanged, with no increase in burnout.

Burnout is known to be inversely related to resilience, and this pattern is also reflected in our results. Resilience is the process of adapting well in the face of adversity, trauma, tragedy, threats or even significant sources of stress (Southwick et al., 2014). Our interns had high resilience scores, above what has previously been published among physicians (McKinley et al., 2020). One reason for this may be the development of resilience through a time of crisis, a phenomenon well encapsulated by the Crisis Theory: during a crisis or disequilibrium such as the current pandemic, people make attempts to adapt and seek solutions to restore stability (Brooks et al., 2017; Caplan, 1964). The development of resilience is increasingly emphasised as an integral strategy to combat burnout. Potentially, the mitigating factors, coping mechanisms and support shared by our interns in the interviews could explain their low burnout and high resilience.

Our interns perceived that many systemic measures helped them cope with the pandemic, a testament to the importance of institutional leadership in implementing safeguards for psychological health (Dewey et al., 2020; Wu et al., 2020). Protocols relating to staff protection and the availability of personal protective equipment (Rasmussen et al., 2020) were among the measures common to institutions worldwide. Furthermore, interns, being the most junior members of the team, were spared from doing aerosolising procedures such as intubation, nebulisation administration and airway suctioning, which were deferred to clinicians with prior experience and training. This allowed interns time to learn and improve their competency and confidence prior to assuming these responsibilities. The interns were also thankful for the protected work-rest cycles (Wu et al., 2020), and that they were allowed to take paid leave, which is essential, more so in the pandemic, to reduce fatigue and allow time for rejuvenation.

Other than institutional support, direct support from seniors and faculty featured prominently in our interns’ responses, supporting the importance of mentorship (Ramanan et al., 2006). Despite feeling that they might not have reliable and timely access to important updates, they felt supported under the direct guidance of seniors who took the lead on the ground. Regular fortnightly ‘check-in’ sessions were conducted to elicit concerns, obtain feedback, and ensure continual wellbeing. This channel of communication was well received by interns: they appreciated the faculty’s concern, had the autonomy to give input and contribute to the care of patients, had the opportunity to air grievances confidentially and, importantly, had closure on concerns they had raised regarding their rotations and training (Fischer et al., 2019). The enhanced collegiality between interns, support from seniors and improved cooperation among healthcare workers during this time of crisis also contributed to reduced burnout levels, a finding well established in the literature (Li et al., 2013).

In terms of the impact on training, teaching sessions were initially discontinued to maintain physical distancing. Moreover, the interns spent a higher proportion of their time providing COVID-19 care, which meant traditional general paediatric exposure was compromised. However, within 4 weeks of the pandemic, departmental teaching activities were restored via web-based sessions, which interns found useful. The role of faculty in persisting with academic continuity is again important in mitigating the impact of the pandemic on learning: some interns felt they had more teaching on the wards as consultants had more time to teach for each patient.

We believe that the perceived continual institutional and senior support for our interns allowed them to maintain high personal resilience, which could have mitigated their stress and burnout. In this pandemic, interns demonstrated adaptability to the many changes, perseverance, gratitude amidst the challenges and a focus on their goal to help patients and fight the pandemic, all of which are known features of resilience (Bird & Pincavage, 2016; Zwack & Schweitzer, 2013).

To our knowledge, this is the first research study in the pandemic that objectively evaluated the impact of COVID-19 on interns’ psychological state, resilience and training. However, we recognise our study limitations. The small population means that it is difficult to derive statistical comparisons between the pre- and post-exposure results. However, we believe that the timing of this group’s posting, which coincided with the escalation of the pandemic, supports the validity of the pre- and post-exposure comparison. The results were further supported by qualitative findings from full group interview participation (100%) and in-depth discussion, which provided substantial explanations for the trend of results. We recognise that 2-4 months might be a short duration for negative psychological effects such as stress and burnout to set in. Nonetheless, the number of unprecedented changes and the intensity of work for the interns within this period were undoubtedly high. Another study limitation is the inclusion of Paediatric interns only and their possibly lower exposure to COVID-19 compared with their adult counterparts, due to decreased disease morbidity and mortality in children. Although this factor could potentially result in less impact on the psychological factors studied, we believe other interns are likely to face similar concerns and challenges in the pandemic, due to their similar backgrounds and job scopes across most departments and disciplines.

This study elucidated the impact of the pandemic on interns in terms of their stress, burnout, as well as clinical duties and training. Despite increasing concerns about the psychological well-being of healthcare workers in the pandemic, our study has demonstrated that it is possible to mitigate their stress and burnout and to preserve resilience, even in vulnerable new medical graduates. Our findings objectively validated the importance and effectiveness of a multi-faceted approach that targets the institutional, faculty and individual levels to build resilience and combat burnout in healthcare providers in this pandemic and beyond.

Notes on Contributors

Nicholas BH Ng contributed to conception and design of study, interpretation of data, drafting and critical revising of the article. Mae Yue Tan contributed to analysis and interpretation of data, drafting and critical revising of the article. Shuh Shing Lee contributed to analysis and interpretation of data, drafting and critical revising of the article. Nasyitah bte Abdul Aziz contributed to analysis and interpretation of data, drafting of the article. Marion M Aw contributed to interpretation of data, drafting and critical revising of the article. Jeremy BY Lin contributed to conception and design, interpretation of data, drafting and critical revising of the article. All authors gave final approval of the version to be published.

Data Availability

The data for this study can be found at https://doi.org/10.6084/m9.figshare.12924029.v1. Access to these datasets is subject to approval of the authors of this article.

Ethical Approval

Ethics approval was obtained from the NHG Domain Specific Review Board (DSRB), with NHG DSRB reference number of 2020/00392.

Acknowledgement

The authors would like to thank the interns who participated in this study.

Funding

Funding for this study was obtained from NUHS Fund Limited – Medical Affairs (Education) Fund.

Declaration of Interest

All authors have no conflicts of interest to declare.

References

Bird, A., & Pincavage, A. (2016). A curriculum to foster resident resilience. MedEdPORTAL, 12, 10439. https://doi.org/10.15766/mep_2374-8265.10439

Brooks, S. K., Dunn, R., Amlôt, R., Rubin, G. J., & Greenberg, N. (2017). Social and occupational factors associated with psychological wellbeing among occupational groups affected by disaster: A systematic review. Journal of Mental Health, 26(4), 373-384. https://doi.org/10.1080/09638237.2017.1294732

Campbell, D. A., Jr., Sonnad, S. S., Eckhauser, F. E., Campbell, K. K., & Greenfield, L. J. (2001). Burnout among American surgeons. Surgery, 130(4), 696-702; discussion 702-695. https://doi.org/10.1067/msy.2001.116676

Caplan, G. (1964). Principles of preventive psychiatry. Basic Books.

Chen, Q., Liang, M., Li, Y., Guo, J., Fei, D., Wang, L., He, L., Sheng, C., Cai, Y., Li, X., Wang, J., & Zhang, Z. (2020). Mental health care for medical staff in China during the COVID-19 outbreak. Lancet Psychiatry, 7(4), e15-e16. https://doi.org/10.1016/s2215-0366(20)30078-x

Chia, R., & Moynihan, Q. (2020, February 20). This alarming map shows where the coronavirus has spread in Singapore, one of the worst-hit areas outside of China. Business Insider. https://www.businessinsider.com/coronavirus-singapore-map-shows-spread-worst-hit-outside-china-2020-2?IR=T

Cohen, S., Kamarck, T., & Mermelstein, R. (1983). A global measure of perceived stress. Journal of Health and Social Behavior, 24(4), 385-396.

Connor, K. M., & Davidson, J. R. (2003). Development of a new resilience scale: The Connor-Davidson resilience scale (CD-RISC). Depression and Anxiety, 18(2), 76-82. https://doi.org/10.1002/da.10113

Dewey, C., Hingle, S., Goelz, E., & Linzer, M. (2020). Supporting clinicians during the COVID-19 pandemic. Annals of Internal Medicine, 172(11), 752-753. https://doi.org/10.7326/M20-1033

Fischer, J., Alpert, A., & Rao, P. (2019). Promoting intern resilience: Individual chief wellness check-ins. MedEdPORTAL, 15, 10848. https://doi.org/10.15766/mep_2374-8265.10848

Li, B., Bruyneel, L., Sermeus, W., Van den Heede, K., Matawie, K., Aiken, L., & Lesaffre, E. (2013). Group-level impact of work environment dimensions on burnout experiences among nurses: A multivariate multilevel probit model. International Journal of Nursing Studies, 50(2), 281–291. https://doi.org/10.1016/j.ijnurstu.2012.07.001

Liang, Z. C., Ooi, S. B. S., & Wang, W. (2020). Pandemics and their impact on medical training: Lessons From Singapore. Academic Medicine. https://doi.org/10.1097/acm.0000000000003441

Linzer, M., Visser, M. R., Oort, F. J., Smets, E. M., McMurray, J. E., & de Haes, H. C. (2001). Predicting and preventing physician burnout: results from the United States and the Netherlands. The American Journal of Medicine, 111(2), 170-175. https://doi.org/10.1016/s0002-9343(01)00814-2

Low, Z. X., Yeo, K. A., Sharma, V. K., Leung, G. K., McIntyre, R. S., Guerrero, A., Lu, B., Lam, C. C. S. F., Tran, B. X., Nguyen, L. H., Ho, C. S., Tam, W. W., & Ho, R. C. (2019). Prevalence of burnout in medical and surgical residents: A meta-analysis. International Journal of Environmental Research and Public Health, 16(9). https://doi.org/10.3390/ijerph16091479

Maslach, C. J. S., & Leiter, M. P. (2016). Maslach burnout inventory manual. Mind Garden Inc.

McKinley, N., McCain, R. S., Convie, L., Clarke, M., Dempster, M., Campbell, W. J., & Kirk, S. J. (2020). Resilience, burnout and coping mechanisms in UK doctors: A cross-sectional study. British Medical Journal Open, 10(1), e031765. https://doi.org/10.1136/bmjopen-2019-031765

Ministry of Health (MOH), Singapore. (2020). Circuit breaker to minimise further spread of COVID-19. https://www.moh.gov.sg/news-highlights/details/circuit-breaker-to-minimise-further-spread-of-covid-19. (Retrieved April 3, 2020)

Ng, N. B. H (2020). The COVID-19 Pandemic: Impact on Paediatric Postgraduate Year One Doctors [Data set]. Figshare. https://figshare.com/s/74c81ca193638a553ea2

Ramanan, R. A., Taylor, W. C., Davis, R. B., & Phillips, R. S. (2006). Mentoring matters. Mentoring and career preparation in internal medicine residency training. Journal of General Internal Medicine, 21(4), 340-345. https://doi.org/10.1111/j.1525-1497.2006.00346.x

Rasmussen, S., Sperling, P., Poulsen, M. S., Emmersen, J., & Andersen, S. (2020). Medical students for health-care staff shortages during the COVID-19 pandemic. The Lancet, 395(10234), e79-e80. https://doi.org/10.1016/s0140-6736(20)30923-5

Rotenstein, L. S., Torre, M., Ramos, M. A., Rosales, R. C., Guille, C., Sen, S., & Mata, D. A. (2018). Prevalence of burnout among physicians: A systematic review. The Journal of the American Medical Association, 320(11), 1131-1150. https://doi.org/10.1001/jama.2018.12777

Sasangohar, F., Jones, S. L., Masud, F. N., Vahidy, F. S., & Kash, B. A. (2020). Provider burnout and fatigue during the COVID-19 pandemic: Lessons learned from a high-volume intensive care unit. Anesthesia and Analgesia, 131(1), 106–111. https://doi.org/10.1213/ane.0000000000004866

Southwick, S. M., Bonanno, G. A., Masten, A. S., Panter-Brick, C., & Yehuda, R. (2014). Resilience definitions, theory, and challenges: Interdisciplinary perspectives. European Journal of Psychotraumatology, 5,(1), 25338. https://doi.org/10.3402/ejpt.v5.25338

Sturman, N., Tan, Z., & Turner, J. (2017). “A steep learning curve”: Junior doctor perspectives on the transition from medical student to the health-care workplace. BMC Medical Education, 17(1), 92. https://doi.org/10.1186/s12909-017-0931-2

Thomas, N. K. (2004). Resident burnout. The Journal of the American Medical Association, 292(23), 2880-2889. https://doi.org/10.1001/jama.292.23.2880

Wu, P. E., Styra, R., & Gold, W. L. (2020). Mitigating the psychological effects of COVID-19 on health care workers. Canadian Medical Association Journal, 192(17), E459-e460. https://doi.org/10.1503/cmaj.200519

Zwack, J., & Schweitzer, J. (2013). If every fifth physician is affected by burnout, what about the other four? Resilience strategies of experienced physicians. Academic Medicine, 88(3), 382-389. https://doi.org/10.1097/ACM.0b013e318281696b

*Jeremy Bingyuan Lin

1E Kent Ridge Road,

NUHS Tower Block Level 12,

Singapore 119228

Tel: (65) 6772 4847

Email: jeremy_lin@nuhs.edu.sg

Submitted: 28 July 2020

Accepted: 18 November 2020

Published online: 4 May, TAPS 2021, 6(2), 48-56

https://doi.org/10.29060/TAPS.2021-6-2/OA2367

Oscar Gilang Purnajati1, Rachmadya Nur Hidayah2 & Gandes Retno Rahayu2

1Faculty of Medicine, Universitas Kristen Duta Wacana, Yogyakarta, Indonesia; 2Department of Medical Education, Faculty of Medicine, Universitas Gadjah Mada, Yogyakarta, Indonesia

Abstract

Introduction: Objective Structured Clinical Examination (OSCE) examiners come from various backgrounds. This background variability may affect the way they score examinees. This study aimed to understand how examiners’ background variability influences their score agreement when assessing a procedural skill in the OSCE.

Methods: A mixed-methods study with an explanatory sequential design was conducted. OSCE examiners (n=64) in the Faculty of Medicine Universitas Kristen Duta Wacana (FoM-UKDW) assessed two videos of Cardio-Pulmonary Resuscitation (CPR) performance, and their level of agreement was determined using Fleiss Kappa. One video portrayed CPR performed according to the performance guidelines, and the other portrayed CPR not performed according to the guidelines. Primary survey, CPR procedure, and professional behaviour were assessed. To confirm the assessment results qualitatively, in-depth interviews were also conducted.

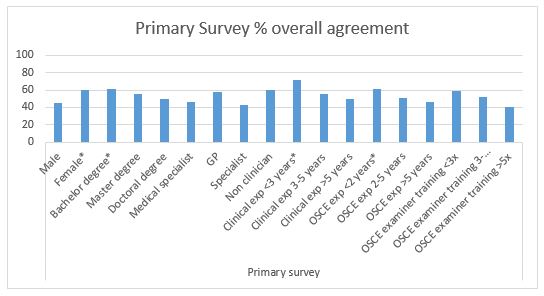

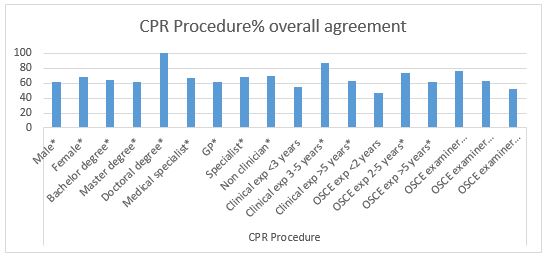

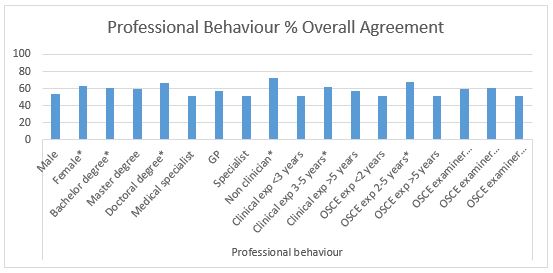

Results: Fifty-one examiners (79.7%) completed the assessment forms. Across the 18 background groups, there was good agreement (>60%) in: primary survey (4 groups), CPR procedure (15 groups), and professional behaviour (7 groups). In-depth interviews revealed several personal factors involved in scoring decisions: 1) Examiners use different references in assessing the skills; 2) Examiners weight competence in different ways; 3) The first impression might affect the examiners’ decision; and 4) Clinical practice experience drives examiners to establish a personal standard.

Conclusion: This study identifies several factors of examiner background that allow better agreement in the procedural section (CPR procedure) with specific assessment guidelines. The personal factors affecting scoring decisions found in this study should be addressed when preparing faculty members as OSCE examiners.

Keywords: OSCE Score, Background Variability, Agreement, Personal Factor

Practice Highlights

- The examiners’ background variability influences the OSCE scoring agreement results.

- The reason for assessment inaccuracy remains unclear regarding the score agreement.

- The absence of assessment instruments could provide a loophole for examiners to improvise.

- Personal factors affecting scoring decisions found in this study should be addressed in preparing OSCE examiners.

I. INTRODUCTION

To assess medical students’ competencies in a variety of skills, most medical schools in Indonesia implement the Objective Structured Clinical Examination (OSCE) both as a clinical skills examination at the undergraduate stage and as a national exit exam (Rahayu et al., 2016; Suhoyo et al., 2016). Most OSCE stations test both communication domains and specific clinical skills, which are assessed using rubrics and scoring checklists that rely on examiners’ observations (Setyonugroho et al., 2015). A challenge of the OSCE is the complexity of standardising scores, which are highly dependent on OSCE examiners’ perceptions (Pell et al., 2010). In a well-designed OSCE, only the examinees’ performance should influence their scores, with minimal effects from other sources of variance (Khan et al., 2013). Research has shown that examiners’ background variability influences OSCE results even when examiners have been asked to standardise their behaviour (Pell et al., 2010). The decisions and behaviour of OSCE examiners affect the quality of assessment, including pass or fail decisions, given the complexity of knowledge, skill, and attitude in medical education (Colbert-Getz et al., 2017; Fuller et al., 2017).

Examiners’ observations also rely on their clinical practice experience, OSCE examining experience, and gender conformity (Mortsiefer et al., 2017). Even in an OSCE held under the most standardised conditions, the examiner factor plays the biggest role in inaccurate scoring (Mortsiefer et al., 2017). However, the reason for this inaccuracy remains unclear, and there are concerns regarding the scoring agreement of examiners in the OSCE and how results might be affected by it. There is a need to consider the influence of examiners’ background variability (gender, educational level, clinical practice experiences, length of clinical practice experiences, OSCE experience, and OSCE training experience) when preparing teachers as OSCE examiners. This study aimed to understand background variability as a factor influencing examiners’ scoring agreement in assessing students’ performance in a procedural skill, as the first step of a faculty development programme to ensure a standard quality of examiners.

II. METHODS

A. Study Design

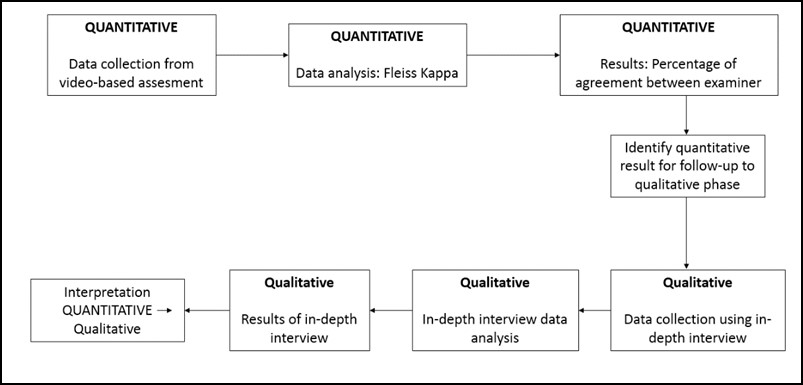

This mixed-method study used a sequential explanatory design. A mixed-method approach is expected to provide more comprehensive results and a better understanding than either method used separately (Creswell & Clark, 2018).

This study comprised 2 sequential phases of data collection and analysis (QUANTITATIVE: qualitative). First, quantitative data were collected in a cross-sectional study of the examiners’ strength of agreement, measured using Fleiss Kappa, while they assessed the clinical skill performance recorded in 2 videos: one video portrayed CPR performed according to the performance guideline and the other portrayed CPR not performed according to the performance guideline. We used these 2 videos to portray more comprehensively the consistency of OSCE examiner agreement on both good and poor clinical skill performance.

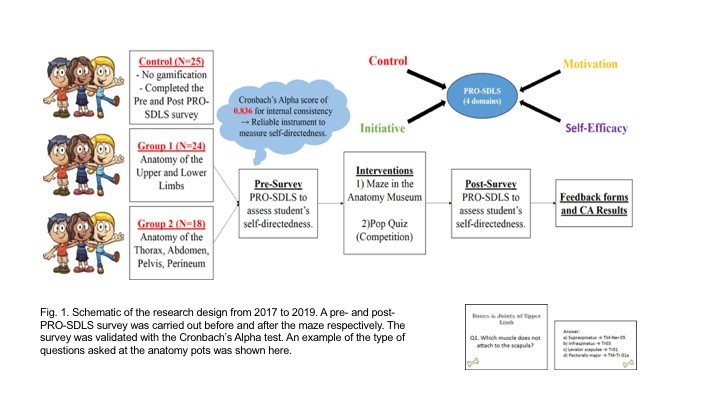

Figure 1. Mixed-method explanatory design

In the second phase, in-depth interviews were used to complement the quantitative results and to gain more information and detailed confirmation about how the scores were decided (Stalmeijer et al., 2014). In this stage of the study, the researchers explored and explained the examiners’ OSCE experiences and behaviour when scoring a clinical skill examination, and how their backgrounds influenced their scoring.

B. Materials and/or Subjects

The strength of agreement of the videos’ scores was derived from 64 OSCE examiners of FoM UKDW. Mortsiefer et al. (2017) explained that a larger number of subjects is preferable when investigating examiner characteristics associated with inter-examiner reliability. In the second phase, in-depth interviews were conducted with 6 examiners of FoM UKDW, selected by purposive sampling based on their scores and on how they represented their own unique background (Table 1).

The researcher (OGP) provided all participants with written information about this research and addressed ethical issues in an informed consent form. The researcher ensured that participants understood the research protocol and clarified any questions regarding this study. Participants who agreed to take part signed the informed consent form prior to data collection.

We held the interviews at FoM UKDW, each lasting a maximum of 30 minutes. Examiners were included if they were full-time faculty members, had examined in more than 4 OSCEs, and had completed OSCE examiner training, on the expectation that they had had enough interaction with other faculty members and had been influenced by medical doctor education (Park et al., 2015). Participants were excluded if they did not respond to the research invitation or did not complete the assessment form. The main researcher (OGP) conducted the interviews. He was a male student of the Master of Health Profession Education programme at Universitas Gadjah Mada and a staff member of FoM UKDW.

C. Statistics

1) Quantitative data analysis: We grouped examiners into 18 groups based on their background, namely gender, educational level, clinical practice experiences, length of clinical practice experiences, OSCE experience, and OSCE training experience, as shown in Table 1. We analysed all gathered data using IBM SPSS Statistics 25 (IBM Corp., Chicago) and Microsoft Office Excel 365. We presented quantitative data as strength of agreement in percentage. The strength of agreement was calculated using Fleiss Kappa to determine the agreement within each group of each examiner background on whether the CPR performances (primary survey, CPR procedure, and professional behaviour) portrayed in the 2 videos merited a score of “0”, “1”, “2”, or “3” based on the assessment guideline and the rubric’s criteria (Purnajati, 2020). Based on recent research, agreement above 60% was considered substantial and adequate (Stoyan et al., 2017; Vanbelle, 2019).

2) Qualitative data analysis: In-depth interviews were analysed using thematic analysis. We prepared a structured list of questions consisting of one key question: What was your experience in scoring the OSCE? Additional questions explored the examiners’ experiences in OSCE scoring, including the use of other references, differences in assessment weighting, use of their own decisions, clinical practice experience affecting the decision, and gender-related decision making. The in-depth interviews were audio-recorded, and the resulting data were read and categorised into themes whenever they were related. The transcripts and identified themes were then given to an external coder. We then reached agreement on each theme. No repeat interviews were conducted.
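The Fleiss Kappa statistic named in the quantitative analysis can be illustrated with a short sketch. The study itself used IBM SPSS Statistics; the Python function below is not the authors' procedure but a minimal implementation of the standard Fleiss formula, κ = (P̄ − P̄e) / (1 − P̄e), where P̄ is the mean observed agreement per rated item and P̄e is the agreement expected by chance. The function name `fleiss_kappa` and the example scores are hypothetical and chosen only for illustration.

```python
import math
from collections import Counter


def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for items each scored by the same number of raters.

    ratings    -- list of items; each item is a list of scores, one per rater
    categories -- all possible scores, e.g. [0, 1, 2, 3]
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of raters")

    # counts[i][j] = number of raters who gave item i the j-th category
    counts = []
    for item in ratings:
        tally = Counter(item)
        counts.append([tally[c] for c in categories])

    # Observed agreement for each item, then averaged over items (P-bar)
    p_items = [
        (sum(n * n for n in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_items) / n_items

    # Chance agreement (P-bar-e) from the overall category proportions
    total = n_items * n_raters
    p_cat = [sum(row[j] for row in counts) / total for j in range(len(categories))]
    p_exp = sum(p * p for p in p_cat)

    if math.isclose(p_exp, 1.0):
        return math.nan  # undefined when every rating falls in one category
    return (p_bar - p_exp) / (1 - p_exp)


# Hypothetical data: 4 rated items, 3 examiners, scores on the 0-3 rubric scale
example = [[1, 1, 2], [2, 2, 2], [0, 0, 0], [3, 3, 1]]
print(round(fleiss_kappa(example, [0, 1, 2, 3]), 3))  # → 0.547
```

In this hypothetical example the kappa of 0.547 would fall below the 60% threshold described above, assuming that threshold corresponds to a kappa of 0.60.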

III. RESULTS

A. Quantitative Data Result

We deposited both quantitative and qualitative data in an online repository (Purnajati, 2020). The study participants in this quantitative phase were 64 OSCE examiners who were full-time faculty members. Twelve participants were excluded because they did not fulfil the inclusion criteria. The fifty-one (79.7%) examiners who returned the completed assessment form are described below in Table 1.

|

Quantitative Phase Participant |

|||

|

Background |

Groups |

Number of Participant (N=51) |

|