The need for researching the utility of R2C2 model in Cross-Cultural and Cross-Disciplinary settings

Submitted: 26 May 2022

Accepted: 10 June 2022

Published online: 4 October, TAPS 2022, 7(4), 86-87

https://doi.org/10.29060/TAPS.2022-7-4/LE2816

Tomoko Miyoshi1, Fumiko Okazaki2, Jun Yoshino3, Satoru Yoshida4, Hiraku Funakoshi5, Takayuki Oto6 & Takuya Saiki7

1Department of General Medicine, Kurashiki Educational Division, Graduate School of Medicine Dentistry and Pharmaceutical Sciences, Okayama University, Japan; 2Center for Medical Education, The Jikei University School of Medicine, Japan; 3Department of Physical Therapy, Faculty of Health and Medical Science, Teikyo Heisei University, Japan; 4Emergency and Critical Care Medical Center, Niigata City General Hospital, Japan; 5Department of Emergency and Critical Care Medicine Tokyobay Urayasu Ichikawa Medical Center, Japan; 6Department of General Dental Practices, Kagoshima University Hospital, Japan; 7Medical Education Development Center, Gifu University, Japan

Dear Editor,

We are delighted to report that the Japanese translation of the R2C2 (relationship, reaction, content, coaching) model was published in the Journal of Medical Education in Japan, with the kind permission of the author and the journal Academic Medicine. The R2C2 model, developed by Sargeant et al. (2015), promotes behavior change through reflection and feedback, while incorporating coaching. Its effectiveness and the factors influencing it have been demonstrated in supervisor–resident pairs in various residency programs (family medicine, psychiatry, internal medicine, surgery, and anesthesiology) in the U.S., Canada, and the Netherlands. The R2C2 model is fascinating because it emphasises the relationship and dialogue between the resident and the supervisor, and provides insights into the residents’ in-depth learning.

While we are interested in the factors influencing feedback that are common across different specialties and contexts, we hypothesise that national culture and health profession disciplines may affect the dialogue and impact of the R2C2 model, especially in bridging the gap between self-assessment and the supervisor’s assessment.

Reports of such cultural differences indicate that the Japanese learn more from their failures, whereas Westerners learn more from their successes, and that learners’ self-evaluation also differs. In addition, Hofstede reports that the relationship between learners and teachers in East Asia, including Japan, is hierarchical, and feedback is therefore likely to be one-sided. Regarding mentoring/coaching, we have shown that Japanese physician–scientist mentoring relationships depend on trust in mentors, and the cultural influence of the acceptance of paternalistic mentoring (Obara et al., 2021) suggests the need to build trusting relationships. Furthermore, as a multidisciplinary author team, we are keen to explore how different health profession disciplines shape perspectives on effective feedback and the supervisor–learner relationship. We expect this topic to become more apparent as modern health services become more multi-professional and the discourse develops within multi-professional relationships.

The Japanese version was translated carefully before publication to avoid translation-related issues. Although we successfully conducted a nationwide workshop on R2C2 in Gifu, Japan, in 2021 to disseminate its philosophy, we realised that a variety of factors are likely to affect how R2C2 is conducted in our context. Our future goal is to examine the utility of the R2C2 model in cross-cultural and cross-disciplinary settings in order to generate findings that will contribute to the glocalisation of medical education and multi-disciplinary education.

Notes on Contributors

T Miyoshi conceptualised and wrote the manuscript and approved the final version.

F Okazaki conceptualised cultural difference of R2C2 and revised and approved the manuscript.

H Funakoshi conceptualised cultural difference of R2C2 and revised and approved the manuscript.

T Oto conceptualised different health profession disciplines of R2C2 and approved the manuscript.

J Yoshino conceptualised different health profession disciplines of R2C2 and approved the manuscript.

S Yoshida conceptualised different health profession disciplines of R2C2 and approved the manuscript.

Prof T Saiki supervised and edited the manuscript.

Acknowledgement

We would like to acknowledge Rintaro Imafuku, Kaho Hayakawa, and Chihiro Kawakami of the Gifu University Medical Education Development Center for collaboratively writing and editing the Japanese translated version of R2C2.

Funding

There is no funding provided.

Declaration of Interest

There is no conflict of interest, including financial, consultant, institutional or otherwise, for the authors.

References

Obara H, Saiki T, Imafuku R, Fujisaki K, & Suzuki Y. (2021). Influence of national culture on mentoring relationship: a qualitative study of Japanese physician-scientists. BMC Medical Education, 21, 300. https://doi.org/10.1186/s12909-021-02744-2

Sargeant J, Lockyer J, Mann K, Holmboe E, Silver I, Armson H, Driessen E, MacLeod T, Yen W, Ross K, & Power M. (2015). Facilitated Reflective Performance Feedback: Developing an Evidence- and Theory-Based Model That Builds Relationship, Explores Reactions and Content, and Coaches for Performance Change (R2C2). Academic Medicine, 90(12), 1698-1706. https://doi.org/10.1097/ACM.0000000000000809

*Tomoko Miyoshi

2-5-1 Shikata-cho, Kita-ku,

Okayama, Japan, 700-8558

+81-86-235-7342

Email: tmiyoshi@md.okayama-u.ac.jp

Submitted: 9 May 2022

Accepted: 3 August 2022

Published online: 4 October, TAPS 2022, 7(4), 83-85

https://doi.org/10.29060/TAPS.2022-7-4/CS2808

Chi Sum Chong1 & Woei Yun Siow2

1Yong Loo Lin School of Medicine, National University of Singapore, Singapore, 2Raffles Hospital, Singapore

I. INTRODUCTION

The AO foundation aims to improve patient outcomes in the surgical treatment of trauma and musculoskeletal disorders and to promote education and research. Each year, approximately 30,000 orthopaedic surgeons worldwide attend AO foundation courses. To ensure that the planned curriculum is delivered, the AO foundation requires its surgeon-faculty to attend the Faculty Education Program (FEP) before teaching at regional and international courses.

FEP participants are AO member-surgeons who are actively teaching within their own countries. They are selected by their local AO committees and invited to attend. Every participant is encouraged to teach at regional and international courses thereafter.

II. METHODS

Course structure:

- Five weeks of online learning

This includes a self-assessment. Thereafter, participants learn through reading assignments, case studies and peer discussion at their own pace. These provide a problem-based and collaborative approach to learning. Most participants experience the same planned curriculum. Participants from locations with poor internet signals require a modified delivery of the curriculum e.g. email and hard copies.

- One-and-a-half days of live event

This begins with a group discussion to derive the core principles of effective learning from one’s learning experiences. This is followed by an “introduction to the Pendleton method of giving and receiving feedback”. Thereafter, each participant presents a lecture, conducts a small group discussion and demonstrates teaching of a practical session through role playing. For each activity, each participant receives feedback from the other participants and the faculty (Benton & Young, 2018). The event concludes with feedback to evaluate the course. Face-to-face learning activities are contextual and allow for learning of knowledge and skills of teaching strategies in a collaborative fashion. The online and face-to-face curriculum follow the SPICES model and align with the learning outcomes (Harden et al., 1984).

- One week of online follow-up with a post-course self-assessment.

The learning outcomes are:

- Prepare and present a lecture

- Moderate a small group discussion

- Instruct in practical exercises

- Receive and give feedback

- Evaluate one’s own teaching

- Work with outcomes in teaching strategies

- Set expectations of a teaching or learning activity

- Use information about learners e.g. learners’ needs and cultural context in the educational process

- Motivate learners

- Encourage interaction among learners

The outcomes encompass knowledge and skills in teaching and awareness of best practice guidelines in teaching strategies i.e. attitudinal domain. They are specific, relevant and timely for the participants who are young surgeons interested in teaching (Harden et al., 1999).

Some outcomes are easily measurable e.g. prepare and present a lecture, moderate a small group discussion, instruct in practical exercises and receive and give feedback. Participant performance is measured against a set of guidelines (Kogan et al., 2009). Some outcomes are embedded within the learning activities e.g. set outcomes and expectations in learning activities, motivate learners and encourage interaction among learners and evaluate one’s own performance. Some outcomes are not easily measurable e.g. using learner information to plan learning activities. Overall, Kirkpatrick’s level three achievement is met in most outcomes.

For outcomes that cannot be easily measured during the course, longitudinal assessment of the participants will allow these outcomes to be measured i.e. when they teach at future AO courses after the FEP. Thus, entrustable professional activities from the FEP are aligned with the course outcomes (Shorey et al., 2019).

Feedback was gathered from participants attending the FEP courses at which the author Siow was one of the faculty. All participants verbally consented to give feedback. A total of 103 participants attended six FEP courses between 2016 and 2019. The response rate was 100%. Achievement of course outcomes was measured using three categories ranging from “not achieved” to “fully achieved”. Faculty effectiveness, content relevance and overall course impact were assessed using five categories ranging from “not at all effective” to “very effective”.

According to the Canton Zurich Ethical commission, this study does not require an authorisation from the ethics committee (BASEC-Nr. Req-2022-00536).

III. RESULTS

Eighty percent or more of graduates agreed that the following outcomes were fully achieved: prepare and present a lecture, moderate a small group discussion, instruct in practical exercise, encourage interaction, work with outcomes in teaching strategies, set expectations and evaluate one’s own teaching.

Seventy-five to seventy-eight percent of graduates agreed that the following outcomes were fully achieved: motivate learners, receive and give feedback and manage time and logistics.

Sixty-six percent of graduates agreed that the following outcome was fully achieved: using learner’s information in the educational process.

Ninety-five to ninety-eight percent of graduates agreed that the faculty, the course content and the overall course impact were very effective.

IV. DISCUSSION

A large majority of the participants were able to fully achieve these outcomes: prepare and present a lecture, moderate a small group discussion, instruct in practical exercise, encourage interaction, work with outcomes in teaching strategies, set expectations and evaluate one’s own teaching. This is likely because these outcomes are more familiar to the participants.

Seventy-five to seventy-eight percent of graduates agreed that the following outcomes were fully achieved: motivate learners, receive and give feedback and manage time and logistics. The achievement rate for this group of outcomes is slightly lower than the previous group of outcomes possibly because these outcomes are less familiar to the participants. Furthermore, the AO method of giving and receiving feedback presents a new concept and practice to many participants.

Sixty-six percent of graduates agreed that the following outcome was fully achieved: using learner’s information in the educational process. One reason for this lower score may be that the application of this outcome was not specifically highlighted and explained to the participants. This outcome was strictly adhered to and applied in the planning and execution of the very FEP course attended by the participants, but the manner in which participants’ information was used to do so was not clearly explained to the participants themselves.

V. CONCLUSION

The FEP is a rare opportunity for surgeon-educators to learn about scholarly teaching. Feedback from the courses supports the continuation of these courses to help faculty improve their teaching skills.

Notes on Contributors

Chi Sum Chong reviewed the literature, performed data analysis and developed the manuscript. Woei Yun Siow reviewed the literature, designed the study, performed the data collection and wrote the manuscript. All authors read and approved the final manuscript.

Funding

This work has not received any external funding.

Declaration of Interest

All authors declare that there are no conflicts of interest.

References

Benton, S. L., & Young, S. (2018). Best practices in the evaluation of teaching. IDEA paper No. 69.

Harden, R. M., Crosby, J. R., & Davis, M. H. (1999). AMEE Guide No. 14: Outcome-based education: Part 1-An introduction to outcome-based education. Medical Teacher, 21(1), 7-14. https://doi.org/10.1080/01421599979969

Harden, R. M., Sowden, S., & Dunn, W. R. (1984). Educational strategies in curriculum development: The SPICES model. Medical Education, 18(4), 284-297.

Kogan, J. R., Holmboe, E. S., & Hauer, K. E. (2009). Tools for direct observation and assessment of clinical skills of medical trainees. A systematic review. Journal of the American Medical Association, 302(12), 1316-1326.

Shorey, S., Lau, T. C., Lau, S. T., & Ang, E. (2019). Entrustable professional activities in health care education: A scoping review. Medical Education, 53(8), 766-777.

*Woei Yun Siow

Raffles Hospital,

585 North Bridge Road,

Singapore 188770

Email: siowwoeiyun@gmail.com

Submitted: 14 April 2022

Accepted: 3 August 2022

Published online: 4 October, TAPS 2022, 7(4), 76-82

https://doi.org/10.29060/TAPS.2022-7-4/CS2780

Eusni RM Tohit1, Fauzah A Ghani1, Hizmawati Madzin3, Intan N Samsudin1, Subashini C Thambiah1, Siti Z Zakariah2 & Zainina Seman1

1Department of Pathology, Faculty of Medicine & Health Sciences, Universiti Putra Malaysia, Malaysia; 2Department of Medical Microbiology, Faculty of Medicine & Health Sciences, Universiti Putra Malaysia, Malaysia; 3Department of Multimedia, Faculty of Computer Science & Information Technology, Universiti Putra Malaysia, Malaysia

I. INTRODUCTION

Twenty-first century learning requires analytical thinking and problem solving; hence, medical educators must design suitable models to prepare learners for future challenges. Medical teaching and learning are moving in this direction, and the use of technology in education is embedded in the process. The role of laboratory testing in patient care is recognised as a critical component of modern medical care (Smith et al., 2010). The ability of practising physicians to appropriately order and interpret laboratory tests is declining, and little attention has been given to appropriate medical student education in pathology (Smith et al., 2010).

Clinical Pathology (CP) is a module recently introduced in our medical programme. In-depth learning of pathology requires learners to identify appropriate tests and specimen containers and to interpret patients’ results with consideration of other factors that may influence them.

Design thinking skills (DTS) is a guided process of thinking in which learners work in a team to identify problems (patient case), analyse them through collaborative learning, justify investigations, interpret results, and outline relevant effective management. Experiential learning emphasises the central role of learners in the educational process by allowing them to draw their own conclusions and ruminate on the meaning of the learned material (Clem et al., 2014). Blending DTS and experiential learning creates a holistic approach to the learning of CP.

II. METHODS

A pilot study was conducted among year 3 medical students in the Faculty of Medicine and Health Sciences, Universiti Putra Malaysia, Serdang, Selangor, Malaysia. The study was approved by the Ethics Committee for Research Involving Human Subjects, Universiti Putra Malaysia (JKEUPM-2019-387). It was conducted over a span of two months outside students’ formal teaching and learning. The inclusion criteria were students who were in their clinical years and had never been exposed to the Clinical Pathology module. Students were divided into small groups of 4 or 5, and all were equipped with the CP app (Appendix 1) on an Android smartphone together with the DTS task book. Each group had a clinical pathologist facilitating the four sessions, which were hybrid (physical and online) because of the global pandemic. In brief, the phases involved introduction to CP (empathy), case findings (define), laboratory workup (ideation), results interpretation (solution), case approach (prototype), and critical analysis (reflection and post-mortem) (details in Appendix 2). These were then presented in the final phase of DTS in a simulated grand ward round. Learners took a pre-test and a post-test in CP and were asked to evaluate their experiences using a modified 28-item questionnaire (Appendix 3) scored on a Likert scale, adapted from a validated experiential learning questionnaire (Clem et al., 2014).

III. RESULTS

Twenty students from Medicine and Surgery postings participated in this pilot study, conducted from 27 April 2021 to 26 June 2021. In general, students were very satisfied with the experiential learning project. Questionnaire responses and test scores are tabulated in Table 1. The 28 items were divided into four subheadings. Regarding the type of environment used, 66% agreed with the hybrid approach used in running the project. Seventy-five percent agreed on the active participation in the different phases of DTS. Eighty-six percent agreed with the relevance of the content of CP in their teaching and learning towards becoming a medical professional. Over two-thirds of respondents agreed that the CP learning experience could be adapted in their future learning. As for students’ performance (n=20) in the pre- and post-test OSCE in pathology, students scored significantly higher marks in all items evaluated, as seen in Table 1.

Encouraging responses were recorded from some of the respondents as stated below:

“I enjoyed it very much. I received a lot of clarity on how important clinical pathology is after the session. Even after all these sessions, I even read again and again the clinical pathology notes that I have. I feel I can slowly relate my prior knowledge when it comes to clinical.”

Respondent 1

“In my opinion, I think this research project has given me a lot of benefits such as I can know how to correctly fill in the form to order the lab investigation, understand how to choose the correct tube for each lab investigation. I like this project very much as it can help me in this medical field”

Respondent 2

“I am grateful for being part of this research since I learnt a lot from the sessions. I have learnt about the type of lab investigations and blood tube, the sequence of taking blood as well as the phlebotomy techniques from the sessions which may help me in my future medical career.”

Respondent 3

| Subheading I | Agree (%) | Neutral (%) | Disagree (%) |
|---|---|---|---|
| On the environment of Clinical Pathology used in the experiential learning | 66 | 15 | 19 |
| On the active participation and learning of Clinical Pathology | 75 | 14 | 11 |
| On the relevance of the content of Clinical Pathology module | 86 | 3 | 11 |
| On the utility of Clinical Pathology experience in future learning | 68 | 3 | 29 |

| Subheading II | Pre-test (/5) | Post-test (/5) |
|---|---|---|
| Correct selection of specimen container | 0.6 | 3.5 |
| Correct order of blood draw | 2.5 | 4.0 |
| Correct preanalytical variables identified | 0.3 | 3.0 |
| Relevant information in the laboratory form | 2.3 | 4.0 |
| Interpretation of laboratory tests | 3.5 | 4.5 |

Table 1. Responses to the questionnaire, pre and post-test score for OSCE in Clinical Pathology

IV. DISCUSSION

The pilot study proved beneficial for the clinical students who participated in the research.

Using the Kirkpatrick model (Kirkpatrick & Kirkpatrick, 2021), students in this pilot study achieved level 2 of the model outcome. As Clinical Pathology is a new subject in the amended curriculum, ‘sensitising’ the students to the importance of Clinical Pathology (CP) was achieved.

Small group teaching practised in this pilot study is in line with other schools that used small group teaching, which resulted in a close relationship between students and facilitator (Smith et al., 2010). The CP app provided self-directed learning on laboratory tests, which can improve students’ performance (Smith et al., 2010). When students worked through their own clinical case, this created inquisitive learners, as they were able to correlate the clinical picture with the laboratory findings of their patients.

The disagreement shown by some of the students implies a need to improve the implementation and running of the project. Students’ learning preferences vary across visual, aural, reading, and kinaesthetic (VARK) modes, and a suitable approach needs to be designed to suit the spectrum of students.

Post-test OSCE scores showed improvement in the common pathology knowledge required of students. This general knowledge will assist them in other clinical postings in future. The CP app provided earlier will be useful for self-directed learning. However, there are still challenges in developing a standardised approach to assessing students’ knowledge and skills in this area (Smith et al., 2010), which is an avenue for future research.

V. CONCLUSION

Students developed more confidence in CP, which is useful for future learning experiences in other disciplines and for their future careers.

Notes on Contributors

ERT designed the research, developed the CP app storyboard, created the DTS task book, analysed the results, wrote the manuscript. HM developed the CP application, edited the manuscript. FAG, INS, SCT, SZZ, ZS revised the protocol, CP app story board, DTS task book, facilitated the project, edited the manuscript.

Acknowledgement

The authors would like to acknowledge Sufi Firdaus and Rubhan AL Chandran for their technical help in the development of the CP application and DTS task book.

Funding

This work was supported by Geran Inovasi Pengajaran Pembelajaran 2018/Universiti Putra Malaysia/Centre of Academic Development (800-2/2/15).

Declaration of Interest

All authors declared there is no conflict of interest, including financial, consultant, institutional and other relationships that might lead to bias or a conflict of interest.

References

Clem, J. M., Mennicke, A. M., & Beasley, C. (2014). Development and validation of the experiential learning survey. Journal of Social Work Education, 50, 490-506. https://doi.org/10.1080/10437797.2014.917900

Kirkpatrick, J., & Kirkpatrick, W. K. (2021). Introduction to the New World Kirkpatrick model. Kirkpatrick Partners. Retrieved June 7, 2022, from https://www.kirkpatrickpartners.com/wp-content/uploads/2021/11/Introduction-to-the-Kirkpatrick-New-World-Model.pdf

Smith, B. R., Aguero-Rosenfeld, M., Anastasi, J., Baron, B., Berg, A., Bock, J. L., Campbell, S., Crookston, K. P., Fitzgerald, R., Fung, M., Haspel, R., Howe, J. G., Jhang, J., Kamoun, M., Koethe, S., Krasowski, M. D., Landry, M. L., Marques, M. B., Rinder, H. M., . . . Wu, Y. (2010). Educating medical students in laboratory medicine: A proposed curriculum. American Journal of Clinical Pathology, 133(4), 533–542. https://doi.org/10.1309/AJCPQCT94SFERLNI

*Eusni Rahayu binti Mohd.Tohit

Department of Pathology,

Faculty of Medicine & Health Sciences,

University Putra Malaysia,

43400 Serdang, Selangor

+60397692379

Email: eusni@upm.edu.my

Submitted: 11 March 2022

Accepted: 10 June 2022

Published online: 4 October, TAPS 2022, 7(4), 73-75

https://doi.org/10.29060/TAPS.2022-7-4/CS2783

Kiyotaka Yasui, Maham Stanyon, Yoko Moroi, Shuntaro Aoki, Megumi Yasuda, Koji Otani & Yayoi Shikama

Centre for Medical Education and Career Development, Fukushima Medical University, Fukushima, Japan

I. INTRODUCTION

Educational strategies that are effective in one culture may not elicit the expected response when transferred across cultures. For instance, discussion-based learning methods such as problem-based learning, which were developed in Western contexts to foster self-directed lifelong learning (Franbach et al., 2019), are not easy for Asian students to adapt to. The quietness of Asian students, noted in multi-national contexts, is not always due to linguistic or cultural literacy barriers (Remedios et al., 2008) and requires contextual deconstruction to enable effective solution generation. In a Japanese context, we have observed how quietness manifests through insufficient question generation and a lack of spontaneous opinion expression in class. Such attitudes may be interpreted by Western standards as lacking initiative and critical thinking (Tavakol & Dennick, 2010) but are in line with Japanese social norms and traditional views of learning. Because effective learning through discussion requires cognitive conflict to facilitate conceptual transformation (De Grave et al., 1996), it is necessary to ease the psychological burden experienced by our students when deviating from inherited cultural habits so that they can comfortably express opinions to embrace such conflicts. In this case study we share how we created a supportive environment to enable Japanese medical students to embrace this behavioural change.

Through our understanding of Japanese cultural norms, we hypothesised that student quietness could be attributed to the following: 1) belief that their question is insignificant and a desire not to impose on the time of others; 2) reluctance to express different opinions which might cause conflict; and 3) risk aversion to making incorrect statements. Reasons 1) and 2) reflect Japanese social norms requiring people to always act with consideration for others, while 3) is related to a Confucian-affected traditional view of learning that values humility for one’s imperfection as a driving force to self-cultivation which potentially reinforces embarrassment when giving incorrect statements. We aimed to address the above points by introducing environmental changes to boost student confidence in the significance of their questions and minimise the psychological burden of expressing their opinions during a class on ethical dilemmas.

II. METHODS

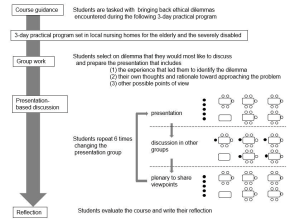

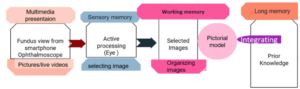

The class was undertaken by 256 first-year medical students at Fukushima Medical University in 2018 and 2019, as shown in Figure 1.

Figure 1. Flow diagram explaining the class: The closed circles represent the presenting group members and their interaction with the rest of the class (open circles) during the discussion and plenary session

A. Building Student Confidence

To minimise the risk aversion and associated anxiety of voicing incorrect opinions, we tasked students with reflecting on ethical dilemmas with no clear answer that they had encountered during a 3-day placement in local nursing homes, and with presenting these in groups of 5-6 to the rest of the class. By removing the expectation of a right answer from the start, we created an atmosphere where students felt comfortable generating multiple questions rather than focusing on reaching a single ‘correct’ answer.

B. A Conducive Environment for Cognitive Conflict

To break down the barriers of students seeking conformity and agreement during their presentations, we refocused the objective of the session onto the reasoning process of how they considered their ethical dilemma. This reframing supported students to embrace conflicting perspectives without worrying about achieving a consensus.

C. Nurturing a Diversity of Opinions

To facilitate the voicing of minority opinions, we harnessed a positive psychological trait in Japanese culture where pleasure is felt in acting as a collective. Therefore, when opinions were presented to the class, the entire group embraced ownership of the discussion, allowing the individuals who raised the points to remain anonymous. This reduced the potential for personal conflict and allowed diverse opinions to be aired without a loss of face.

At the end of the class, students were asked to evaluate the class using a 4-point Likert scale (good, fairly good, not so good, not good) and to write a reflection on the experience in one to two lines.

III. RESULTS

Out of the 245 students who submitted ratings, 89.9% evaluated the course as “good” or “fairly good”. About half mentioned their surprise at the diversity of opinions and their satisfaction with hearing them, acknowledging that hearing the different perspectives deepened their thoughts, broadened their perspectives, and created new ideas. Satisfaction with being able to express one’s thoughts was stated by a small number of students. Some of the students who chose “not so good” or “not good” pointed out that discussion was tough and required getting used to.

IV. DISCUSSION

When adopting a teaching method developed in a different culture, it should be delivered in the context of one’s own culture to optimise student learning. Once given a supportive environment, Japanese students, previously more content to listen than to actively contribute to discussions, exchanged their ideas and positively encountered cognitive conflict, rather than suffering from low confidence and an aversion to personal conflict. This demonstrates their potential to assimilate different perspectives and advance their thinking, akin to undergoing conceptual transformation. Through this work, we show that the standardisation of teaching methods does not equate to the globalisation of education, and that teaching must be adapted with clear implementation strategies and outcome definition, grounded in the culture to which the learners belong.

V. CONCLUSION

The generalisability of our adaptations outside a Japanese context is limited because the cultural diversity within Asian countries brings different challenges to discussion-based learning methods. However, vast numbers of students migrate across cultures in higher education and healthcare training. For universities and clinical training institutions with international students, understanding the barriers and supporting ‘quiet’ students to learn effectively through discussion alongside inherited cultural norms is a priority. This study aids in this understanding by providing an example from a Japanese medical undergraduate context.

Notes on Contributors

Kiyotaka Yasui designed and conducted the course, analysed the student reflections and wrote the manuscript.

Maham Stanyon analysed student reflections and wrote the manuscript.

Yoko Mori conducted the course facilitation and supported the contextualisation of the results and discussion.

Shuntaro Aoki conducted the course facilitation and supported the contextualisation of the results and discussion.

Megumi Yasuda conducted the course facilitation and supported the contextualisation of the results and discussion.

Koji Otani conducted the course facilitation and supported the contextualisation of the results and discussion.

Yayoi Shikama planned and conducted the course as a course supervisor, analysed the student course ratings and reflections, and wrote the manuscript.

Acknowledgement

The authors would like to thank Dr. Rintaro Imafuku (Gifu University, Gifu, Japan) for constructive advice given during the medical education and research mentoring program sponsored by the Japan Society of Medical Education and Oliver Stanyon for editing a draft of this manuscript.

Funding

This study did not receive any funding.

Declaration of Interest

The authors have no conflict of interest to declare.

References

De Grave, W. S., Boshuizen, H. P. A., & Schmidt, H. G. (1996). Problem based learning: Cognitive and metacognitive processes during problem analysis. Instructional Science, 24, 321-341. http://doi.org/10.1007/BF00118111

Frambach, J. M., Talaat, W., Wasenitz, S., & Martimianakis, M. A. (2019). The case for plural PBL: An analysis of dominant and marginalized perspectives in the globalization of problem-based learning. Advances in Health Sciences Education, 24, 931-942. http://doi.org/10.1007/s10459-019-09930-4

Remedios, L., Clarke, D., & Hawthorne, L. (2008). The silent participant in small group collaborative learning contexts. Active Learning in Higher Education, 9(3), 201-216. http://doi.org/10.1177/1469787408095846

Tavakol, M., & Dennick, R. (2010). Are Asian international medical students just rote learners? Advances in Health Sciences Education, 15, 369-377. http://doi.org/10.1007/s10459-009-9203-1

*Kiyotaka Yasui

1 Hikarigaoka,

Fukushima 960-1295,

Japan

Email: taka-y@fmu.ac.jp

Submitted: 23 November 2021

Accepted: 10 May 2022

Published online: 4 October, TAPS 2022, 7(4), 59-70

https://doi.org/10.29060/TAPS.2022-7-4/OA2714

Deepthi Edussuriya1, Sriyani Perera2, Kosala Marambe3, Yomal Wijesiriwardena1 & Kasun Ekanayake1

1Department of Forensic Medicine, Faculty of Medicine, University of Peradeniya, Sri Lanka; 2Medical Library, University of Peradeniya, Sri Lanka; 3Department of Medical Education, Faculty of Medicine, University of Peradeniya, Sri Lanka

Abstract

Introduction: Emotional Intelligence (EI) is especially important for medical undergraduates due to the long undergraduate period and relatively high demands of the medical course. Determining associates of EI would not only enable identification of those who are most suited for the discipline of medicine but would also help in designing training strategies to target specific groups. However, there is diversity of opinion regarding the associates of EI in medical students. The aim of this study was to determine the associates of EI in medical students.

Methods: The databases MEDLINE, CENTRAL, Scopus, EbscoHost, LILAC, IMSEAR and three others were searched. This was followed by hand-searching, screening of cited and citing references, and a search of PQDT. All studies on the phenomenon of EI and/or its associates with medical students as participants were retrieved. Studies from all continents of the world, published in English, were selected. They were assessed for quality using the Q-SSP checklist, followed by a narrative synthesis of the selected studies.

Results: Seven hundred and ninety-two articles were identified, of which 29 met the inclusion criteria. One article was excluded as its full text was not available. Seven articles found an association between ‘EI and academic performance’, 11 identified an association between ‘EI and mental health’, 11 found an association between ‘EI and Gender’, 6 identified an association between ‘EI and Empathy’ and two found an association with the learning environment.

Conclusion: Higher EI is associated with better academic performance, better mental health, happiness, learning environment, good sleep quality and less fatigue, female gender and greater empathy.

Keywords: Emotional Intelligence, Associates of Emotional Intelligence, Medical Students, Mental Wellbeing, Empathy

Practice Highlights

- Higher emotional intelligence is associated with better academic performance.

- Higher emotional intelligence is associated with better mental health.

- Higher emotional intelligence is associated with female gender.

- Higher emotional intelligence is associated with greater empathy.

I. INTRODUCTION

Emotional intelligence (EI) is defined as “the ability to perceive emotions accurately, appraise, and express emotion; the ability to assess and/or generate feelings when they facilitate thought; ability to understand emotions and emotional knowledge, and to regulate emotions to promote emotional and intellectual growth” (Mayer & Salovey, 1997). Studies have found a positive association between EI and academic as well as professional success (Suleman et al., 2019). It has been reported that people, including college students, with good EI show better social functioning and interpersonal relationships, and that peers have identified them as less antagonistic and conflictual (Petrovici & Dobrescu, 2014).

Several tests and instruments that have been used to assess the emotional intelligence of medical students were identified through the literature. These include standard EI tests, modified versions of standard EI tests, and assessment methods devised by the authors themselves. The Schutte Self-Report Emotional Intelligence Test, the TEIQue questionnaire and Bar-On’s Emotional Quotient Inventory (EQ-i 2.0) have been used frequently. Each of these instruments has its own advantages and disadvantages.

The Emotional Quotient Inventory (EQ-i) 2.0 is a revision of the EQ-i (Bar-On, 2004) and measures the interaction between an individual and their environment. Since the EQ-i 2.0 is a revision of the original EQ-i, the standard platform of the EQ-i validation remains intact.

The Schutte Self-Report Emotional Intelligence Test (SSEIT) is a method of measuring general Emotional Intelligence (EI), using four sub-scales: emotion perception, utilising emotions, managing self-relevant emotions, and managing others’ emotions (Schutte et al., 1998). The SSEIT model is closely associated with the EQ-i model of Emotional Intelligence. It has a reliability rating of 0.90. The EI score, overall, is fairly reliable for adults and adolescents. However, the utilising emotions sub-scale has shown poor reliability (Ciarrochi et al., 2001). A mediocre correlation of the SSREI with self-estimated EI, the Big Five EI scale, and life satisfaction has also been reported (Petrides & Furnham, 2000). However, the SSREI correlated poorly with well-being and EI criteria.

The Trait Emotional Intelligence Questionnaire (TEIQue) is an openly accessible instrument developed to measure global trait emotional intelligence. A significant body of research on emotional intelligence (EI) has been conducted based on the Trait Emotional Intelligence Theory (Mikolajczak et al., 2007). The TEIQue is available in long and short forms. Internal consistency and test-retest both indicated scale reliabilities of 0.71 and 0.76. High correlations between the TEIQue and Shrink’s Emotional Intelligence Scale showed validity in measuring emotional intelligence and the “Big Five” Personality Traits.

Apart from those assessment methods, Genos Emotional Intelligence Assessment, Mayer-Salovey-Caruso Emotional Intelligence Test, TMMS-24 data and DASS-21 scale, Bradbury-Graves’s Emotional Intelligence and Siberia Schering’s Emotional Intelligence Questionnaire have also been used by the authors to assess the EI.

A comprehensive survey in medicine states that EI had a positive contribution in doctor-patient relationship, increased empathy, teamwork, communication skills, stress management, organisational commitment and leadership (Arora et al., 2010). EI is invariably important to medical professionals as it is associated with self-monitoring which would not only ensure adapting to clinical situations appropriately and having desirable interpersonal relations but also result in a favorable outcome for the patient and the wellbeing of the practitioner.

A few studies suggest that EI training can help medical students to build their leadership and empathy skills as they enter the clinical years (Austin et al., 2005; Dolev et al., 2019). Literature surveys on emotional intelligence and medicine, and on physician leadership qualities, conclude that EI correlates with many of the competencies that modern medical curricula seek to deliver, including leadership (Mintz & Stoller, 2014; Reshetnikov et al., 2020). Other studies indicate that age and gender are associated with emotional intelligence. However, some studies showed that EI at medical school admission could not reliably predict academic success in later years (Reshetnikov et al., 2020). These studies have all looked at the associates in isolation. It would also be interesting to reflect on the concept of EI in a broader sense, as it is inevitable that there would be an interaction of factors.

The medical course extends over a period of five years as opposed to most undergraduate degrees which are shorter. Medical training involves close interactions with different categories of people including patients, doctors of different grades and the paramedical staff. Training includes long hours of work in stressful environments where some situations could be emotionally challenging. This long undergraduate period and relatively high demands of the medical course would require medical students to possess a high degree of EI. As findings of different studies on EI are sometimes diverse in opinion, it would be useful to conduct a systematic review to identify the associates of EI in order to design training strategies which target specific groups.

Even though EI is considered a trainable trait, the extent of trainability depends on many personal and institutional factors (Mattingly & Kraiger, 2019). Völker (2020) expresses that trainability in emotional intelligence is subject to acquired knowledge, which is situational and may depend on accumulating relevant experience.

In the Sri Lankan context, the sole criterion for selection of students to a medical course is academic excellence at the Advanced Level examination, which alone may not reflect their suitability to follow a profession like medicine (University Grants Commission, 2022).

However, since EI is an essential trait, especially for medical practice, many universities worldwide use different tools to assess EI in their applicants. Furthermore, different universities adopt varying techniques to develop the EI of their students throughout the course. It is envisaged that this review would not only help determine what additional factors could be considered in the selection of applicants for a medical course but would also help teachers design training strategies to target specific groups of students and ensure a more enjoyable and productive learning experience for the students as a whole. There is no doubt that these selection and intervention programs would produce doctors with more favourable qualities, which would not only produce greater benefits to the patient but would also prevent burnout among doctors.

A. Objective

The objective of this study is to identify the associates of emotional intelligence in medical students based on available literature in English from 2015 to 2020.

II. MATERIALS AND METHODS

The research question was defined based on the PICOS (Population, Intervention, Comparison, Outcomes and Setting) format. The review protocol was developed according to the PRISMA-P 2015 (Preferred Reporting Items for Systematic Review and Meta-Analysis Protocols) statement (Moher et al., 2015) by the three authors DE, KM and SP and was registered in the PROSPERO Registry (CRD42021227877). The methodology for the systematic review (SR) followed the guidelines and standards of the IOM (Institute of Medicine) (Eden et al., 2011) and PRISMA-2015 for reporting.

A. Search Strategy

A systematic and comprehensive search was conducted by SP in April 2020 and references were managed using the software Mendeley. The search explicitly aimed to identify all published and unpublished relevant studies in order to limit bias in the searching process. The key search terms were identified with the aid of a search-term-harvesting table by KM and DE. A combination of relevant medical subject headings and search terms tagged with other appropriate search fields was used in the literature search. The following databases were searched:

CDSR (Cochrane Database of Systematic Reviews), DARE (The Database of Abstracts of Reviews of Effects), MEDLINE (1950–2020) via PubMed (see supplemental Appendix 1 for search strategy), CENTRAL (The Cochrane Central Register of Controlled Trials, 1948–2020), Scopus, EbscoHost, LILAC, IMSEAR (Index Medicus for South East Asian Region) and WHO International Clinical Trials Registry Platform (ICTRP). In addition to electronic searches, two key journals (2015–2020) were hand-searched, and cited and citing references of all included studies were screened for further relevant articles. Searches were limited to studies published between 2015 and 2020. Other resources searched included grey literature such as PQDT (ProQuest Dissertations and Theses database) and Global Health (via WHO).

B. Selection Criteria

After removal of duplicates from the retrieved articles, the remaining articles with abstracts were uploaded to the web application Rayyan (Ouzzani et al., 2016) for the purpose of screening. The criteria for selection of articles were based on the PICOS elements. The studies were from all continents of the world and limited to those published in English. All studies focusing on the phenomenon of EI and/or its associates with medical students as participants were considered for inclusion in the review.

The authors DE, KM, SP and KE independently screened the uploaded articles in Rayyan, using the above eligibility criteria. In the first phase, the title and abstract of each article were reviewed independently by any two of the authors to assess its candidacy. Following this initial evaluation, the full texts of the selected articles were retrieved and further examined independently by KM and DE (second phase) for final verification before inclusion in the review. Any disagreements regarding the eligibility of studies were resolved by consulting a third author (SP). Reviews, systematic reviews, editorials, letters and comments were removed. Articles which met the eligibility criteria were selected for inclusion in the review. Excluded studies were marked with the ‘reason’ in Rayyan.
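The dual-review rule described above (two independent decisions, with a third author consulted only on disagreement) can be sketched as a small function. This is an illustrative sketch only: the function name `screening_decision` and its parameters are hypothetical and are not part of Rayyan or of the review protocol.

```python
from typing import Optional


def screening_decision(
    reviewer_a: bool,
    reviewer_b: bool,
    third_reviewer: Optional[bool] = None,
) -> bool:
    """Return True if an article is included, False if it is excluded.

    reviewer_a and reviewer_b are the two independent decisions.
    third_reviewer is consulted only when the first two disagree.
    """
    if reviewer_a == reviewer_b:
        # Agreement: the shared decision stands.
        return reviewer_a
    if third_reviewer is None:
        # Disagreement cannot be resolved without the third author.
        raise ValueError("disagreement requires a third reviewer's decision")
    return third_reviewer
```

For example, `screening_decision(True, False, third_reviewer=False)` excludes the article, whereas `screening_decision(True, True)` includes it without consulting anyone else.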

C. Data Extraction and Quality Assessment

Data from all included studies were extracted by the review authors YW and KM using a data extraction table developed for the purpose of this review (Appendix 2). Data extracted were cross-checked by SP for any errors. Information recorded included: study details (author, year, country of origin), participants (number of participants, gender, level of undergrad program, etc.), methods (study aim, design, total study duration, tools used), study type (phenomenon /context studied) and outcomes (all relevant findings related to primary and secondary outcomes).

SP and YW independently assessed the quality of the selected studies using the Quality Assessment Checklist for Survey Studies in Psychology (Q-SSP) (Protogerou & Hagger, 2020). Results of the quality assessments were compared (Appendix 3); any disagreements were resolved by consensus. Articles which met the required quality criteria were selected for inclusion in the review.

D. Strategy for Data Synthesis

Due to the heterogeneity between the included studies, a quantitative synthesis was not considered. A narrative synthesis of the findings from individual included studies was carried out by DE, based on the characteristics of the targeted populations and the type of outcome such as association/correlation of EI with academic performance, professional success, social functioning, interpersonal relationship, empathy, teamwork spirit, communication skills, stress management, organizational commitment, leadership quality, self-monitoring, mental health and emotional well-being.

III. RESULTS

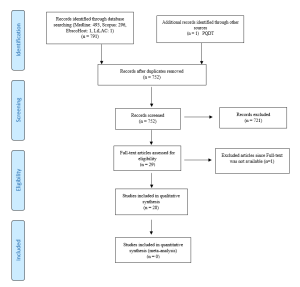

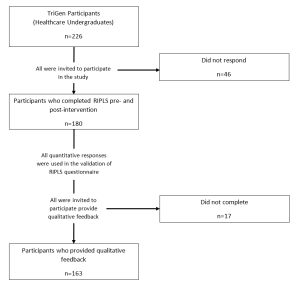

A total of 792 articles were retrieved during the literature search. After removing the duplicates, 752 articles were considered for screening using the eligibility criteria. Initial evaluation of articles through title and abstract resulted in only 29 articles meeting the selection criteria. During the full-text evaluation, one article (Parijitham, 2018) was removed, as its full-text article could not be found even after contacting the author. The data that support the findings of this study are openly available at https://doi.org/10.6084/m9.figshare.15564210 (Edussuriya et al., 2021). Twenty-eight articles were finally selected for quality assessment. Flow diagram of the selection of studies is shown in Figure 1.

Figure 1. Flow diagram illustrating included and excluded studies in the systematic review
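The counts reported for the selection process can be cross-checked with simple arithmetic. The sketch below uses only the figures stated in the text (792 retrieved, 752 after de-duplication, 29 meeting the criteria, one full text unavailable); the function name `prisma_flow` and the dictionary keys are hypothetical labels chosen for illustration.

```python
def prisma_flow(retrieved: int, after_deduplication: int,
                met_criteria: int, full_text_unavailable: int) -> dict:
    """Derive the intermediate counts of a study-selection flow."""
    return {
        # Records dropped as duplicates before screening.
        "duplicates_removed": retrieved - after_deduplication,
        # Records excluded at title and abstract screening.
        "excluded_at_screening": after_deduplication - met_criteria,
        # Records carried forward to quality assessment.
        "included": met_criteria - full_text_unavailable,
    }


flow = prisma_flow(retrieved=792, after_deduplication=752,
                   met_criteria=29, full_text_unavailable=1)
print(flow)
```

With the reported figures this gives 40 duplicates removed, 723 records excluded at screening and 28 articles carried forward, which agrees with the 28 articles selected for quality assessment.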

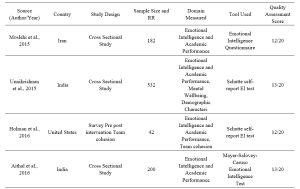

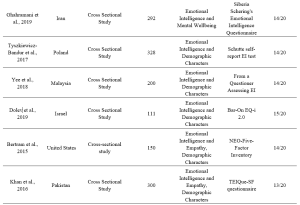

The selected studies comprised 26 cross-sectional studies (the majority), one longitudinal study and one quasi-experimental study. However, all studies used standard validated survey questionnaires to collect data. Therefore, to assess the quality of the selected studies, the Quality Assessment Checklist for Survey Studies in Psychology (Q-SSP) was selected as the best ‘applicable to all’ tool in this review, also considering its relevance to trait emotional intelligence, since emotions, thoughts and mental processes are aspects of psychology. The quality of the studies was determined by the extent to which the items on the above checklist were met by each of the articles. There were 20 checklist items in the tool, of which one item (item 19 – Debriefing participants at the end of data collection) could be justifiably waived, one reason being that none of the included studies used it in their methodology. Thus 19 items were considered to be applicable in this review (Appendix 4).
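How waiving item 19 changes the denominator of a checklist score can be illustrated as follows. This is a sketch under stated assumptions: the text reports only that 19 of the 20 items were applicable, so expressing quality as the proportion of applicable items met is an assumption made here for illustration, and the names `applicable_items` and `quality_score` are hypothetical.

```python
from typing import Iterable, List

TOTAL_ITEMS = 20      # number of checklist items reported in the text
WAIVED_ITEMS = {19}   # item 19 (debriefing) was waived in this review


def applicable_items() -> List[int]:
    """Item numbers that remain after removing the waived items."""
    return [i for i in range(1, TOTAL_ITEMS + 1) if i not in WAIVED_ITEMS]


def quality_score(items_met: Iterable[int]) -> float:
    """Proportion of applicable items met (assumed scoring rule)."""
    met = set(items_met)
    applicable = applicable_items()
    return sum(1 for i in applicable if i in met) / len(applicable)
```

Under this assumed rule, a study that meets every item scores 1.0, and meeting item 19 has no effect on the score because that item is excluded from both the numerator and the denominator.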

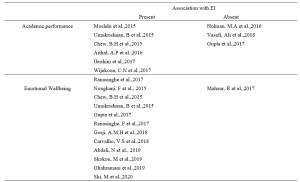

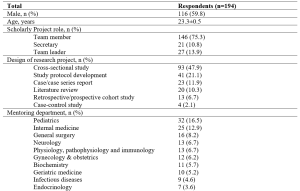

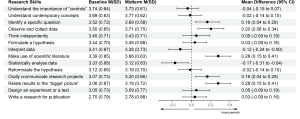

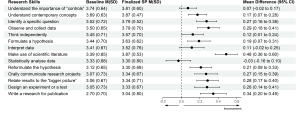

Table 1. Characteristics of included studies

Table 2. Categorisation of findings of the studies

A. Findings of Studies and Data Analysis

1) EI and academic performance: Several studies identified a positive correlation between EI and academic performance (Aithal et al., 2016; Ibrahim et al., 2017; Moslehi et al., 2015; Wijekoon et al., 2017), while Ranasinghe et al. (2017) and Unnikrishnan et al. (2015) also found a significant association between EI and academic performance. These studies indicated that students with higher EI tend to perform better in their academic work. A cross-sectional study done by Chew et al. (2015) showed that medical students with less emotional intelligence were largely unaware of their anxiety, which was associated with lower academic performance. According to studies done by Holman et al. (2016), Gupta et al. (2017) and Vasefi et al. (2018), there was no correlation of EI with academic performance. A study by Othman et al. (2020) revealed that EI showed a significant positive effect on intuitive decision-making style and a negative effect on avoidant and dependent decision-making styles, which may explain the better academic performance of medical students with high EI.

2) EI and mental health (emotional wellbeing): A direct relationship between EI and academic satisfaction was found in studies done by Rouhani et al. (2015), Unnikrishnan et al. (2015) and Carvalho et al. (2018). Further, Carvalho et al. (2018) reported a positive relationship between EI and academic-related well-being, which accounts for both academic performance and mental health. It was seen that medical students with less emotional intelligence were largely unaware of their anxiety (Chew et al., 2015) and those with higher emotional intelligence perceived less stress (Gupta et al., 2017; Ranasinghe et al., 2017). Shi and Du (2020) found that EI was strongly and negatively associated with Personal Distress. Heidari Gorji et al. (2018) identified a direct relationship between emotional intelligence and mental health, while a study done by Mahaur et al. (2017) did not find a significant relationship between the two. Ghahramani et al. (2019) identified a significant positive relationship of EI with happiness, while Abdali et al. (2019) showed a positive correlation with sleep quality and a negative correlation with general fatigue.

3) EI and demographic characteristics: Higher EI in females compared to males was found (Aithal et al., 2016; Bertram et al., 2016; Ibrahim et al., 2017; Khan et al., 2016; Raut & Gupta, 2019; Sundararajan & Gopichandran, 2018; Tyszkiewicz-Bandur et al., 2017; Unnikrishnan et al., 2015; Wijekoon et al., 2017). Irfan et al. (2019) suggest that female medical students had significantly higher empathic behavior and emotional intelligence than male students. However, Skokou et al. (2019) did not find any difference in EI between males and females. Vasefi et al. (2018) and Abe et al. (2018) also did not find a significant relationship between EI and gender, although Abe et al. (2018) revealed that females showed significantly higher Neuroticism, Agreeableness and Empathy scores than males. According to Ibrahim et al. (2017), increasing age resulted in higher EI. However, Yee et al. (2018) did not find a significant association of EI with age or with ethnicity.

4) EI and empathy: A significant correlation between EI and empathy was identified (Bertram et al., 2016; Irfan et al., 2019; Khan et al., 2016; Sundararajan & Gopichandran, 2018). Shi and Du (2020) suggest that EI helps medical professionals to establish a better association with the patient.

5) Learning environment: A relationship between EI and academic background was identified by both Irfan et al. (2019) and Sundararajan and Gopichandran (2018). According to Sundararajan and Gopichandran (2018), students who attended government schools for high school education had greater emotional intelligence than students from private schools. However, Irfan et al. (2019) suggest that medical students of private medical schools showed a higher level of empathy than those of public medical schools. Dolev et al. (2019) reveal that there are no differences in EI levels between first-year and sixth-year medical students.

IV. DISCUSSION

The review included studies conducted in South and Southeast Asian, European, Arabian, North American and South American countries. The majority of studies on Asian students revealed a high association between EI and academic performance. However, two studies on Asian students and one on US students failed to observe such associations. The impact of EI on academic performance may be explained by the fact that being aware of one’s anxiety relieved stress and those with high EI experienced greater mental wellbeing and satisfaction with their programs, which may contribute to better academic performance. Furthermore, the fact that EI showed a positive correlation with better mental health/wellbeing, less perceived stress/distress, happiness, good sleep quality and less fatigue may account for the better academic performance of students with high EI.

Empathy is an important aspect in the delivery of high-quality healthcare. Several researchers from different regions of the world reported a strong association between empathy and high EI scores. Therefore, assessment of EI may be useful in admitting students for medical degrees. However, since EI is considered a “trainable trait”, the role that EI plays in admitting students to medical schools is debatable. Therefore, all efforts must be taken by medical schools to include activities that enhance EI during the medical course, irrespective of the EI levels of students on admission. The fact that EI did not improve with seniority does not necessarily show that EI is not trainable; it may be that those students were not exposed or sensitised to activities which enhance EI.

Evidence indicated a positive association between high EI scores and female gender. It may be postulated that the “nurturing and caring” role assigned by society to females influences their upbringing, thereby improving their emotional intelligence.

In conclusion, since a majority of studies revealed that higher EI is associated with better academic performance, better mental health and greater empathy, and since EI is considered a trainable trait, curricula need to be developed with a view to improving EI.

In order to develop EI, curricula should contain programs on general leadership development, self-care/wellness and burnout prevention (Monroe & English, 2013). Small-group experiential learning activities and meetings with trained mentors throughout the years would be helpful. Debriefing sessions and maintaining a journal are some other techniques that need to be considered. It may be helpful to discuss change management and quality improvement with students (Audra et al., 2020). Exposure of students to skills of self-awareness and self-management through discussion, exposure to theories of conflict management, mindfulness practice, leadership training, discussions on learning styles, discussions on power and influence, identification of team dynamics, exposure to high-functioning inter-professional teams, peer coaching, health care leader interviews and shadowing of experienced clinicians are some techniques that could be adopted in attempting to develop EI among students (Kozlowski & Ilgen, 2006). It would be beneficial to evaluate acquisition based on completion of an EI inventory, feedback from peers and staff, project presentations, reflective writing, measurement of achievement of professional and personal development benchmarks and milestones, and performance on simulated scenarios and small-group exercises (Pan & Allison, 2010).

During the study it was observed that there is a paucity of longitudinal studies on associates of EI. Therefore, it would be beneficial to conduct longitudinal studies, which may help identify some aspects with regard to the trainability of EI in medical students.

V. CONCLUSION

Through this review it was revealed that higher EI is associated with

- better academic performance,

- better mental health including less perception of stress and distress, happiness, good sleep quality and less fatigue,

- female gender, and

- greater empathy.

No significant association was found between age, ethnicity, and seniority in the medical course, and emotional intelligence. No conclusions could be made about the association between the nature of the educational institute (private or state) and emotional intelligence.

A. Limitations

In this review, it was found that the authors of the included studies used several different tools to assess the EI of medical students. Each of these tools has its own advantages and disadvantages, which makes comparison difficult. It cannot be assumed that all of these methods provide results at the same level.

B. Recommendation

Since high EI has shown a positive correlation with academic performance and better mental wellbeing of students and since it has been identified as a “trainable trait” all efforts should be made to enhance EI of medical students during their undergraduate training.

Notes on Contributors

Edussuriya D.H (DE) was the Principal Investigator of the study. Protocol drafting, study selection, analysis and interpretation of data, synthesis of findings of individual studies and the drafting of the manuscript were done by the author.

Perera S. (SP) facilitated the methodology, was involved in drafting the protocol and retrieved the selected articles, as the author has previous experience in conducting systematic reviews. Reference management in Mendeley and Rayyan, cross-checking of the extracted data, quality assessment of the selected studies and the final review of the draft were also done by the author.

Marambe K.N (KM) was involved in drafting the protocol and in article selection, and extracted data from the selected articles.

Wijesiriwardena W.M.S.Y (YW) extracted data from selected articles, assessed the quality of selected articles and finalised the manuscript.

Ekanayake E.M.K.B (KE) screened the uploaded articles in Rayyan.

Ethical Approval

The review is registered in PROSPERO – The International Prospective Register of Systematic Reviews under the registration number CRD42021227877 for the systematic review.

Data Availability

The data set that supports the findings of this study is openly available in the Figshare repository at https://doi.org/10.6084/m9.figshare.15564210

Acknowledgement

The authors acknowledge Information Officers of National Science Library and Resources Center, National Science Foundation, Sri Lanka for support in Scopus searches and staff of Medical Library of Faculty of Medicine, University of Peradeniya for the assistance in finding full text articles of the included studies in the review.

Funding

No funding sources are associated with this study.

Declaration of Interest

No conflicts of interest are associated with this paper.

References

Abdali, N., Nobahar, M., & Ghorbani, R. (2019). Evaluation of emotional intelligence, sleep quality, and fatigue among Iranian medical, nursing, and paramedical students: A cross-sectional study. Qatar Medical Journal, 2019(3), 15. https://doi.org/10.5339/qmj.2019.15

Abe, K., Niwa, M., Fujisaki, K., & Suzuki, Y. (2018). Associations between emotional intelligence, empathy and personality in Japanese medical students. BMC Medical Education, 18, Article 47. https://doi.org/10.1186/s12909-018-1165-7

Aithal, A. P., Kumar, N., Gunasegeran, P., Sundaram, S. M., Rong, L. Z., & Prabhu, S. P. (2016). A survey-based study of emotional intelligence as it relates to gender and academic performance of medical students. Education for Health, 29(3), 255–258.

Arora, S., Ashrafian, H., Davis, R., Athanasiou, T., Darzi, A., & Sevdalis, N. (2010). Emotional intelligence in medicine: A systematic review through the context of the ACGME competencies. Medical Education, 44(8), 749–764. https://doi.org/10.1111/j.1365-2923.2010.03709.x

Audra, V. W., O’Brien, T. C., Varvayanis, S., Alder, J., Greenier, J., Layton, R. L., Stayart, C. A., Wefes, I., & Brady, A. E. (2020). Applying experiential learning to career development training for biomedical graduate students and postdocs: Perspectives on program development and design. CBE—Life Sciences Education, 19(3), 1-12. https://doi.org/10.1187/cbe.19-12-0270

Austin, E. J., Evans, P., Goldwater, R., & Potter, V. (2005). A preliminary study of emotional intelligence, empathy and exam performance in first year medical students. Personality and Individual Differences, 39(8), 1395-1405. https://doi.org/10.1016/j.paid.2005.04.014

Bar-On, R. (2004). The Bar-On Emotional Quotient Inventory (EQ-i): Rationale, description and summary of psychometric properties. In G. Geher (Ed.), Measuring Emotional Intelligence: Common Ground and Controversy (pp. 115–145). Nova Science Publishers.

Bertram, K., Randazzo, J., Alabi, N., Levenson, J., Doucette, J. T., & Barbosa, P. (2016). Strong correlations between empathy, emotional intelligence, and personality traits among podiatric medical students: A cross-sectional study. Education for Health, 29(3), 186–194.

Carvalho, V. S., Guerrero, E., & Chambel, M. J. (2018). Emotional intelligence and health students’ well-being: A two-wave study with students of medicine, physiotherapy and nursing. Nurse Education Today, 63, 35–42. https://doi.org/10.1016/j.nedt.2018.01.010

Chew, B. H., Hassan, F., & Zain, A. M. (2015). Medical students with higher emotional intelligence were more aware of self-anxiety and scored higher in continuous assessment: A cross-sectional study. Medical Science Educator, 25(4), 421-430. https://doi.org/10.1007/s40670-015-0168-9

Ciarrochi, J., Chan, A. Y., & Bajgar, J. (2001). Measuring emotional intelligence in adolescents. Personality and Individual Differences, 31(7), 1105-1119. https://doi.org/10.1016/S0191-8869(00)00207-5.

Dolev, N., Goldental, N., Reuven-Lelong, A., & Tadmor, T. (2019). The evaluation of emotional intelligence among medical students and its links with non-cognitive acceptance measures to medical school. Rambam Maimonides Medical Journal, 10(2), e0010. https://doi.org/10.5041/RMMJ.10365

Eden, J., Levit, L., Berg, A., & Morton. S. (2011). Finding what works in health care: Standards for systematic reviews. National Academies Press. https://doi.org/10.17226/13059

Edussuriya, D., Perera, S., Marambe, K., Wijesiriwardena, Y., & Ekanayake, K. (2021). Emotional intelligence systematic review (Version 3) [Data Set]. Figshare. https://doi.org/10.6084/m9.figshare.15564210.v3

Ghahramani, S., Jahromi, A. T., Khoshsoroor, D., Seifooripour, R., & Sepehrpoor, M. (2019). The relationship between emotional intelligence and happiness in medical students. Korean Journal of Medical Education, 31(1), 29–38. https://doi.org/10.3946/kjme.2019.116

Gupta, R., Singh, N., & Kumar, R. (2017). Longitudinal predictive validity of emotional intelligence on first year medical students perceived stress. BMC Medical Education, 17(1), 139. https://doi.org/10.1186/s12909-017-0979-z

Heidari Gorji, A. M., Shafizad, M., Soleimani, A., Darabinia, M., Goudarzian, A. H. (2018) Path analysis of self-efficacy, critical thinking skills and emotional intelligence for mental health of medical students. Iranian Journal of Psychiatry and Behavioral Sciences. 12(4), e59487. https://doi.org/10.5812/ijpbs.59487

Holman, M. A., Porter, S. G., Pawlina, W., Juskewitch, J. E., & Lachman, N. (2016). Does emotional intelligence change during medical school gross anatomy course? Correlations with students’ performance and team cohesion. Anatomical Sciences Education, 9(2), 143–149. https://doi.org/10.1002/ase.1541

Ibrahim, N. K., Algethmi, W. A., Binshihon, S. M., Almahyawi, R. A., Alahmadi, R. F., & Baabdullah, M. Y. (2017). Predictors and correlations of emotional intelligence among medical students at King Abdulaziz University, Jeddah. Pakistan Journal of Medical Sciences, 33(5), 1080–1085. https://doi.org/10.12669/pjms.335.13157

Irfan, M., Saleem, U., Sethi, M. R., & Abdullah, A. S. (2019). Do we need to care: emotional intelligence and empathy of medical and dental students. Journal of Ayub Medical College, Abbottabad: JAMC, 31(1), 76–81.

Khan, M. A., Niazi, I. M., & Rashdi, A. (2016). Emotional intelligence predictor of empathy in medical students. Rawal Medical Journal, 41(1), 121-124.

Kozlowski, SWJ., & Ilgen. D.R. (2006). Enhancing the effectiveness of work groups and teams. Psychological Science in the Public Interest, 7(3), 77-124. https://doi.org/10.1111/j.1529-1006.2006.00030.x

Mahaur, R., Jain, P., & Jain, A. K. (2017). Association of mental health to emotional intelligence in medical undergraduate students: Are there gender differences? Indian Journal of Physiology and Pharmacology, 61(4), 383-391.

Mattingly, V., & Kraiger, K. (2019). Can emotional intelligence be trained? A meta-analytical investigation. Human Resource Management Review, 29(2), 140-155. https://doi.org/10.1016/j.hrmr.2018.03.002

Mayer, J. D., & Salovey, P. (1997). What is emotional intelligence? Emotional development and emotional intelligence: Educational implications (pp. 3-31). Basic Books.

Mikolajczak, M., Luminet, O., Leroy, C., & Roy, E. (2007). Psychometric properties of the Trait Emotional Intelligence Questionnaire: Factor structure, reliability, construct, and incremental validity in a French-speaking population. Journal of Personality Assessment, 88(3), 338–353. https://doi.org/10.1080/00223890701333431

Mintz, L. J., & Stoller, J. K. (2014). A systematic review of physician leadership and emotional intelligence. Journal of Graduate Medical Education, 6(1), 21–31. https://doi.org/10.4300/JGME-D-13-00012.1

Moher, D., Shamseer, L., Clarke, M., Ghersi, D., Liberati, A., Petticrew, M., Shekelle, P., Stewart, L. A., & PRISMA-P Group (2015). Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Systematic Reviews, 4(1), Article 1. https://doi.org/10.1186/2046-4053-4-1

Monroe, A. D., & English, A. (2013). Fostering emotional intelligence in medical training: The SELECT program. AMA Journal of Ethics, 13(6), 509-513. https://doi.org/10.1001/virtualmentor.2013.15.6.medu1-1306

Moslehi, M., Samouei, R., Tayebani, T., & Kolahduz, S. (2015). A study of the academic performance of medical students in the comprehensive examination of the basic sciences according to the indices of emotional intelligence and educational status. Journal of Education and Health Promotion, 4, 66. https://doi.org/10.4103/2277-9531.162387

Noughani, F., Bayat, R. M., Ghorbani, Z., & Ramim, T. (2015). Correlation between emotional intelligence and educational consent of students of Tehran University of Medical Students. Tehran University Medical Journal, 73(2), 110-116.

Othman, R., El Othman, R., Hallit, R., Obeid, S., & Hallit, S. (2020). Personality traits, emotional intelligence and decision-making styles in Lebanese universities medical students. BMC Psychology, 8(1), Article 46. https://doi.org/10.1186/s40359-020-00406-4

Ouzzani, M., Hammady, H., Fedorowicz, Z., & Elmagarmid, A. (2016). Rayyan- A web and mobile app for systematic reviews. Systematic Reviews, 5(1), Article 210. https://doi.org/10.1186/s13643-016-0384-4

Petrides, K., & Furnham, A. (2000). On the dimensional structure of emotional intelligence. Personality and Individual Differences, 29(2), 313-320. https://doi.org/10.1016/S0191-8869(99)00195-6

Petrovici, A., & Dobrescu, T. (2014). The role of emotional intelligence in building interpersonal communication skills. Procedia-Social and Behavioral Sciences, 116, 1405-1410. https://doi.org/10.1016/j.sbspro.2014.01.406

Protogerou, C., & Hagger, M. S. (2020). A checklist to assess the quality of survey studies in psychology. Methods in Psychology, 3, 100031. https://doi.org/10.31234/osf.io/uqak8

Ranasinghe, P., Wathurapatha, W. S., Mathangasinghe, Y., & Ponnamperuma, G. (2017). Emotional intelligence, perceived stress and academic performance of Sri Lankan medical undergraduates. BMC Medical Education, 17(1), Article 41. https://doi.org/10.1186/s12909-017-0884-5

Raut, A. V., & Gupta, S. S. (2019). Reflection and peer feedback for augmenting emotional intelligence among undergraduate students: A quasi-experimental study from a rural medical college in central India. Education for Health (Abingdon, England), 32(1), 3–10. https://doi.org/10.4103/efh.EfH_31_17

Reshetnikov, V. A., Tvorogova, N. D., Hersonskiy, I. I., Sokolov, N. A., Petrunin, A. D., & Drobyshev, D. A. (2020). Leadership and emotional intelligence: current trends in public health professionals training. Frontiers in Public Health, 7, 413. https://doi.org/10.3389/fpubh.2019.00413

Schutte, N. S., Malouff, J. M., Hall, L. E., Haggerty, D. J., Cooper, J. T., Golden, C. J., & Dornheim, L. (1998). Development and validation of a measure of emotional intelligence. Personality and Individual Differences, 25(2), 167–177. https://doi.org/10.1016/S0191-8869(98)00001-4

Shi, M., & Du, T. (2020). Associations of emotional intelligence and gratitude with empathy in medical students. BMC Medical Education, 20(1), Article 116. https://doi.org/10.1186/s12909-020-02041-4

Skokou, M., Sakellaropoulos, G., Zairi, N. A., Gourzis, P., & Andreopoulou, O. (2019). An exploratory study of trait emotional intelligence and mental health in freshmen Greek medical students. Current Psychology, 40, 6057–6066. https://doi.org/10.1007/s12144-019-00535-z

Suleman, Q., Hussain, I., Syed, M. A., Parveen, R., Lodhi, I. S., & Mahmood, Z. (2019). Association between emotional intelligence and academic success among undergraduates: A cross-sectional study in KUST, Pakistan. PloS one, 14(7), e0219468. https://doi.org/10.1371/journal.pone.0219468

Sundararajan, S., & Gopichandran, V. (2018). Emotional intelligence among medical students: A mixed methods study from Chennai, India. BMC Medical Education, 18(1), Article 97. https://doi.org/10.1186/s12909-018-1213-3

Tyszkiewicz-Bandur M., Walkiewicz M, Tartas M, Bankiewicz-Nakielska J. (2017). Emotional intelligence, attachment styles and medical education. Family Medicine & Primary Care Review, 19(4), 404–407. https://doi.org/10.5114/fmpcr.2017.70127

University Grants Commission, Sri Lanka. (2022). University Admissions. Retrieved April 21, 2022, from https://www.ugc.ac.lk/index.php?option=com_content&view=article&id=25&Itemid=11&lang=en

Unnikrishnan, B., Darshan, B., Kulkarni, V., Thapar, R., Mithra, P., Kumar, N., Holla, R., Kumar, A., Sriram, R., Nair, N., Juanna, Rai, S., & Najiza, H. (2015). Association of emotional intelligence with academic performance among medical students in South India. Asian Journal of Pharmaceutical and Clinical Research, 8(2), 300-302.

Vasefi, A., Dehghani, M., & Mirzaaghapoor, M. (2018). Emotional intelligence of medical students of Shiraz University of Medical Sciences cross sectional study. Annals of Medicine and Surgery, 32, 26–31. https://doi.org/10.1016/j.amsu.2018.07.005

Völker, J. (2020). An examination of ability emotional intelligence and its relationships with fluid and crystallized abilities in a student sample. Journal of Intelligence, 8(2), 18. https://doi.org/10.3390/jintelligence8020018

Wei, P., & Joseph, A. (2011). Implementing and evaluating the integration of critical thinking into problem based learning in environmental building. Journal for Education in the Built Environment, 6(2), 93-115. https://doi.org/10.11120/jebe.2011.06020093

Wijekoon, C. N., Amaratunge, H., de Silva, Y., Senanayake, S., Jayawardane, P., & Senarath, U. (2017). Emotional intelligence and academic performance of medical undergraduates: A cross-sectional study in a selected university in Sri Lanka. BMC Medical Education, 17(1), Article 176. https://doi.org/10.1186/s12909-017-1018-9

Yee, K. T., Yi, M. S., Aung, K. C., Lwin, M. M., & Myint, W. W. (2018). Emotional intelligence level of year one and two medical students of University Malaysia Sarawak: Association with demographic data. Malaysian Applied Biology, 47(1), 203-208.

*Edussuriya D.H

Department of Forensic Medicine, Faculty of Medicine,

University of Peradeniya, Sri Lanka, 20400

+94711698916

Email: deepthi.edussuriya@med.pdn.ac.lk

Submitted: 27 May 2022

Accepted: 10 June 2022

Published online: 4 October, TAPS 2022, 7(4), 71-72

https://doi.org/10.29060/TAPS.2022-7-4/PV2819

Bhuvan KC1,2 & P Ravi Shankar3

1Faculty of Pharmacy and Pharmaceutical Sciences, Monash University, Parkville, Australia; 2College of Public Health, Medical and Veterinary Sciences, James Cook University, Townsville, Australia; 3IMU Centre for Education, International Medical University, Kuala Lumpur, Malaysia

I. INTRODUCTION

Healthcare systems and medicines operate in a complex landscape and constantly interact with individuals, the environment, and society. In such a complex healthcare delivery system, nonlinearity is always present, and treatments, healthcare services, and medicines cannot be delivered without factoring in the uncertainty brought about by human, behavioural, system, and societal factors.

A medical doctor prescribes medications to treat diseases or healthcare problems following certain treatment protocols and guidelines. However, in the community, several factors affect adherence and outcomes, such as adverse effects, lifestyle factors, socioeconomic aspects, attitudes, and belief systems, so it is difficult to fully predict the success of a regimen. These factors that can influence the outcomes of therapy have not received adequate attention. Furthermore, the complexity of healthcare delivery is starker in the treatment of ageing populations or those with chronic diseases.

Our world is becoming increasingly complex. Many uncertainties affect the delivery of healthcare services today. There are inherent challenges within the healthcare system such as a lack of adequate funding, an ageing population, a rising burden of chronic diseases, and an overstretched health workforce. In addition, newer challenges such as the impact of climate change on health delivery, the use of digital health technologies, the emergence of new epidemics, and questions regarding sustainability make healthcare delivery complex and uncertain. Healthcare systems operate through a network of subsystems such as hospitals and health systems, clinics, primary healthcare networks, rehabilitation centres, pharmacies, hospices, care homes, families, and patients. These subsystems interact with each other in complex ways, sometimes producing unintended consequences such as adverse reactions, medication errors, unintended hospitalisations, and hospital-acquired infections. Thus, if we view the health system as a complex entity, we can appreciate its dynamic behaviour, which helps us deliver health services in a self-organised way (Lipsitz, 2012).